If you’re evaluating a TTS model and you only trust numbers, you’re effectively testing the audio while shipping the experience. Metrics are useful for scale and regression, but they miss the failures that actually decide whether real users accept a voice. Human listening is the only method that consistently catches the “this doesn’t feel right” problems: prosody that changes meaning, tone that breaks trust, number-reading that adds cognitive friction, long-form drift that makes people drop off, and the subtle awkwardness that shows up only in real scenarios.

That’s the center of this topic: not whether a model sounds “good,” but whether it sounds right in the context your users live in.

For organizations building production-grade voice systems, this distinction becomes critical. At FutureBeeAI, evaluation is treated as part of the product lifecycle, not as a final checkbox before release.

People don’t listen to synthetic speech the way they inspect an image for resolution. They listen the way they listen to another person. They infer mood, intent, confidence, empathy, urgency, even competence. Two voices can say the same sentence, with identical words, and users will trust one while rejecting the other.

This is why teams get blindsided. They evaluate as if TTS is mostly an engineering artifact, then deploy it into a social channel. In production, users don’t grade your model. They simply react. They pause. They repeat themselves. They abandon. They complain that it feels robotic, weird, or “not for me” even though they can’t explain why.

This mismatch shows up across use cases, but the pattern is consistent.

In voice bots, a single mis-timed pause can make an agent sound uncertain or passive-aggressive. In narration and education, a model can be perfectly intelligible and still cause drop-offs because it’s fatiguing. In dubbing and localization, pronunciation may be correct, but the voice may fail to match cultural expectations of emotion and pacing, so it feels like a translation that never became native.

You can’t fix what you can’t see, and you can’t see these issues through metrics alone.

If your system relies on structured recordings for synthesis training, high-fidelity TTS speech datasets must be evaluated not just for acoustic quality, but for experiential alignment.

What “not working well with real users” usually means, in concrete terms

When prospects tell you their TTS isn’t working well with real users, it almost never means “we can’t understand it.” It means the system is leaking friction in ways that add up.

Sometimes it’s meaning drift through emphasis. The model stresses the wrong word, and the sentence subtly changes intent. A simple line like “You can cancel your subscription today” can land as defensive or annoyed if the stress hits “can” too sharply. Users don’t articulate “prosodic focus error”; they just feel the bot is being rude.

Sometimes it’s tone-intent mismatch. A voice that’s bright and upbeat works for onboarding. The same voice delivering a cancellation confirmation can feel insensitive. A calm voice works in healthcare education. The same calmness applied to an urgent fraud alert can feel careless. This is not “emotion” as an academic concept. It is pragmatic alignment: does the voice match the moment?

Sometimes it’s cognitive friction, especially with numbers. A model can read “₹1,25,000” accurately but in a pattern that forces the listener to mentally re-parse it. Or it reads “12/05/2026” in a format that doesn’t match how your users speak dates, so they hesitate. The words are technically right; the experience is not. Research in auditory processing and Cognitive Load Theory helps explain why: speech is transient, so a listener can’t glance back at a long number and has to hold it in working memory while re-parsing it.
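Number reading is one place where a small normalization layer can remove friction before synthesis. The sketch below is illustrative only: `indian_words`, `speak_rupees`, and `speak_date` are hypothetical helpers, amounts are assumed to be whole rupees, and dates are assumed to be DD/MM/YYYY or MM/DD/YYYY. Which date convention your users actually speak is the assumption you need to confirm with listeners.

```python
# Hypothetical pre-synthesis normalizer: Indian-system rupee amounts and
# locale-dependent dates. Illustrative only, not a complete text normalizer.
ONES = ["zero", "one", "two", "three", "four", "five", "six", "seven", "eight",
        "nine", "ten", "eleven", "twelve", "thirteen", "fourteen", "fifteen",
        "sixteen", "seventeen", "eighteen", "nineteen"]
TENS = ["", "", "twenty", "thirty", "forty", "fifty", "sixty", "seventy",
        "eighty", "ninety"]
MONTHS = ["January", "February", "March", "April", "May", "June", "July",
          "August", "September", "October", "November", "December"]


def below_hundred(n: int) -> str:
    if n < 20:
        return ONES[n]
    tens, ones = divmod(n, 10)
    return TENS[tens] + ("-" + ONES[ones] if ones else "")


def below_thousand(n: int) -> str:
    hundreds, rest = divmod(n, 100)
    parts = []
    if hundreds:
        parts.append(ONES[hundreds] + " hundred")
    if rest:
        parts.append(below_hundred(rest))
    return " ".join(parts)


def indian_words(n: int) -> str:
    """Spell out a non-negative integer with crore / lakh / thousand grouping."""
    if n == 0:
        return "zero"
    parts = []
    for size, name in ((10_000_000, "crore"), (100_000, "lakh"), (1_000, "thousand")):
        count, n = divmod(n, size)
        if count:
            parts.append(f"{indian_words(count)} {name}")
    if n:
        parts.append(below_thousand(n))
    return " ".join(parts)


def speak_rupees(text: str) -> str:
    """'₹1,25,000' -> 'one lakh twenty-five thousand rupees' (whole rupees assumed)."""
    amount = int(text.replace("₹", "").replace(",", "").strip())
    return f"{indian_words(amount)} {'rupee' if amount == 1 else 'rupees'}"


def ordinal_suffix(day: int) -> str:
    if 11 <= day % 100 <= 13:
        return "th"
    return {1: "st", 2: "nd", 3: "rd"}.get(day % 10, "th")


def speak_date(text: str, day_first: bool = True) -> str:
    """Render an ambiguous slash date in the convention the audience expects."""
    a, b, year = (int(part) for part in text.split("/"))
    day, month = (a, b) if day_first else (b, a)
    if not 1 <= month <= 12:
        raise ValueError(f"month out of range in {text!r}")
    if day_first:
        return f"{day}{ordinal_suffix(day)} {MONTHS[month - 1]} {year}"
    return f"{MONTHS[month - 1]} {day}{ordinal_suffix(day)}, {year}"
```

The same string “12/05/2026” becomes “12th May 2026” or “December 5th, 2026” depending on one flag, which is exactly the kind of choice that looks trivial in a spec and causes hesitation when it’s wrong. Day and year are left as digits here on the assumption that a downstream number expander handles them.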

Sometimes it’s long-form fatigue. This is one of the most overlooked production killers. A voice can sound great for ten seconds and become tiring over two minutes because it lacks natural variation in rhythm and emphasis. People stop listening not because it’s bad, but because it’s effortful.

Sometimes it’s local awkwardness. A brand name, a person’s name, a street name, a code-mixed phrase, an acronym that should be spoken as a word instead of spelled out. These are small errors with high emotional weight because they signal whether the system “belongs” in the user’s world.

These are the problems that make real users reject voices. They’re also the problems most evaluation pipelines barely touch.

In regulated environments such as BFSI and Healthcare AI, tone and clarity directly affect trust and compliance workflows. When working with conversational automation, structured call center speech datasets help replicate real-world stress points during evaluation.

Why the usual evaluation setups lie, even when they are “industry standard”

A lot of teams run MOS, run a few objective checks, listen to a handful of samples, and call it evaluation. The issue isn’t that these steps are wrong. The issue is that they’re easy to do in a way that produces misleading confidence.

Mean Opinion Score itself is standardized under frameworks like ITU-T Recommendation P.800, which defines methodologies for subjective speech quality assessment. But even when using industry-recognized standards, execution matters.
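One execution detail that often gets skipped is reporting uncertainty alongside the score. On the Absolute Category Rating scale used in P.800 listening tests, MOS is the mean of 1 to 5 ratings, and a confidence interval shows whether two systems are actually distinguishable. A minimal sketch, assuming integer ratings and a normal approximation (for small panels, a t-based interval, for example one built from `scipy.stats.t.ppf`, is more appropriate); the function name is hypothetical:

```python
import math
import statistics


def mos_with_ci(ratings, z=1.96):
    """Mean Opinion Score with a normal-approximation confidence interval.

    ratings: ACR scores on the 1-5 scale (5 = Excellent, 1 = Bad).
    z=1.96 gives an approximate 95% interval.
    """
    if len(ratings) < 2:
        raise ValueError("need at least two ratings")
    if any(r not in (1, 2, 3, 4, 5) for r in ratings):
        raise ValueError("ACR ratings must be integers from 1 to 5")
    mean = statistics.fmean(ratings)
    half_width = z * statistics.stdev(ratings) / math.sqrt(len(ratings))
    return mean, (mean - half_width, mean + half_width)
```

If two candidates’ intervals overlap heavily, a small MOS gap is not good evidence that one voice is better, and the decision should rest on scenario-based listening rather than the headline number.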

One common trap is prompt cleanliness. Evaluation prompts are often too tidy: short, grammatical, neutral, with predictable vocabulary. That’s not how users speak, and it’s not even how product scripts are read in real flows. Real scripts are full of edge cases: addresses, names, SKUs, abbreviations, disclaimers, error messages, and awkward concatenations from templated systems.

Another trap is demo bias, even inside your own team. People unconsciously pick samples that sound good. A vendor demo is rarely “fake,” but it is curated. It avoids the places where voices break: long sentences, dense numbers, code-mixed segments, or emotionally delicate moments.

A third trap is listener mismatch. Teams evaluate with listeners who don’t represent the end user’s ear. Accent familiarity, cultural expectations, and domain context all matter. A voice that sounds “fine” to a generic listener can feel odd to the real audience because pacing, intonation patterns, and emphasis norms differ. For multilingual deployments, scalable contributor sourcing through Crowd-as-a-Service helps ensure listening panels reflect real user ears.

And then there’s score worship. MOS is useful, but it’s also easy to misuse. If MOS becomes the headline KPI, teams start optimizing for what increases MOS, which is often smoothness and artifact reduction. That can accidentally reduce expressiveness, flatten variation, or push voices into a safe but lifeless middle. You get a voice that is “high-quality audio” but low-impact communication.

This is how teams end up with a model that looks validated but fails with real users.

The deeper reason human listening still matters: speech is interpreted, not measured

When people listen, they infer intent and evaluate safety.

Research in speech perception and prosody demonstrates that intonation patterns alter perceived meaning even when lexical content is identical. Studies published in journals like the Journal of the International Phonetic Association show how stress placement shifts interpretation.


This is particularly relevant in sectors like Telecom AI and Retail & Ecommerce, where tone directly affects user trust and purchase behavior.

That’s why micro-details matter.

A 200-millisecond pause before a key word can sound like hesitation. Slightly rising intonation at the end of a declarative sentence can sound unsure. Perfectly uniform pacing can sound synthetic. Overly crisp consonants can sound harsh or “announcer-like.” A voice that is too emotionally neutral in a human moment can feel cold, even if the words are polite.

This is also why “perfect pronunciation” can still feel wrong. Humans don’t just want correctness. They want social naturalness. The way a real speaker compresses words, de-emphasizes predictable information, and emphasizes what matters in context is part of meaning. The model can be correct and still be socially clumsy.

Metrics don’t capture that interpretive layer. Human listeners do.
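Metrics can’t tell you what a pause means, but they can tell you where the pauses are, which helps point listeners at moments like a 200-millisecond gap before a key word. A minimal energy-based sketch, assuming mono float samples in [-1.0, 1.0]; the function name, the -40 dBFS silence threshold, and the 200 ms minimum are illustrative assumptions, not tuned values:

```python
import math


def find_pauses(samples, sample_rate, frame_ms=10, silence_db=-40.0, min_pause_ms=200):
    """Return (start_s, end_s) spans where frame RMS stays below silence_db (dBFS).

    Frames do not overlap, so span edges are only accurate to about frame_ms.
    """
    frame_len = max(1, sample_rate * frame_ms // 1000)
    n_frames = len(samples) // frame_len
    pauses, run_start = [], None

    def close_run(start, end):
        # Keep the silent run only if it lasts at least min_pause_ms
        # (integer comparison avoids float rounding at the threshold).
        if (end - start) * frame_len * 1000 >= min_pause_ms * sample_rate:
            pauses.append((start * frame_len / sample_rate, end * frame_len / sample_rate))

    for i in range(n_frames):
        frame = samples[i * frame_len:(i + 1) * frame_len]
        rms = math.sqrt(sum(x * x for x in frame) / frame_len)
        level_db = 20 * math.log10(rms) if rms > 0 else float("-inf")
        if level_db < silence_db:
            if run_start is None:
                run_start = i
        elif run_start is not None:
            close_run(run_start, i)
            run_start = None
    if run_start is not None:  # audio ended while still silent
        close_run(run_start, n_frames)
    return pauses
```

The output is a list of candidate moments to review, not a verdict: a pause at a phrase boundary can be natural, while the same length of silence before a key word can sound like hesitation. Judging which is which remains a listening task.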

A practical listening taxonomy: what expert evaluators listen for

If you want listening to be more than vibes, you need a clear mental model of what to listen for. Experienced evaluators tend to bucket issues into a few categories, and this taxonomy is useful because it turns “I don’t like it” into “we can fix it.”

Acoustic artifacts are the obvious ones: clicks, metallic timbre, clipping, noise bursts, robotic buzzing, breath artifacts, unnatural sibilance. These are often caught by both metrics and humans, but humans are better at judging severity because some artifacts are technically present but perceptually irrelevant, and others are subtle but deeply annoying.

Linguistic fidelity is about the words: mispronunciations, wrong word boundaries, messed-up acronyms, wrong handling of abbreviations, odd grapheme-to-phoneme outputs for names and brands. This is where your real-world vocabulary lives.

Prosodic integrity is where most production failures hide. Stress placement, rhythm, phrasing, pause placement, and the way the model groups meaning. It’s possible for every word to be correct and for prosody to still change meaning.

Emotional congruence is not about making the voice dramatic. It’s about aligning tone with intent. Does the voice sound reassuring when it should? Firm when it should? Neutral when it should? Does it accidentally sound sarcastic, overly cheerful, or detached?

Cognitive smoothness is about how easy it is to follow. Numbers, dates, addresses, legal disclaimers, and instructions are where cognitive load spikes. A voice can be correct but cognitively unfriendly.

Conversational dynamics matter for voice bots: turn-taking feel, how it handles short confirmations, whether it sounds impatient when users hesitate, whether it sounds unnatural in quick back-and-forth.

Cultural authenticity matters for localization and multilingual TTS: not just accent, but the “shape” of speech patterns, the placement of emphasis, and the naturalness of code-mixed segments.

Fatigue resistance matters for narration and education: does the voice remain engaging without becoming theatrical? Does it hold attention over minutes?

A good listening framework makes these buckets explicit, because your team needs a shared language for what “bad” means.

Structured validation often includes professional audio annotation workflows to systematically log perceptual issues rather than relying on subjective memory.
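A structured log can be as simple as one record per observed issue, tagged with a category from the taxonomy above and a severity. The class names and the three-level severity scale below are assumptions for illustration, not a standard:

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    # One member per bucket in the listening taxonomy.
    ACOUSTIC_ARTIFACT = "acoustic artifact"
    LINGUISTIC_FIDELITY = "linguistic fidelity"
    PROSODIC_INTEGRITY = "prosodic integrity"
    EMOTIONAL_CONGRUENCE = "emotional congruence"
    COGNITIVE_SMOOTHNESS = "cognitive smoothness"
    CONVERSATIONAL_DYNAMICS = "conversational dynamics"
    CULTURAL_AUTHENTICITY = "cultural authenticity"
    FATIGUE_RESISTANCE = "fatigue resistance"


class Severity(Enum):
    # Hypothetical scale; higher value means more serious.
    MINOR = 1
    NOTICEABLE = 2
    MEANING_CHANGING = 3


@dataclass(frozen=True)
class Issue:
    sample_id: str
    listener_id: str
    category: Category
    severity: Severity
    timestamp_s: float  # where in the sample the listener heard it
    note: str


def summarize(issues):
    """Count issues per category and keep the worst severity seen in each."""
    summary = {}
    for issue in issues:
        count, worst = summary.get(issue.category, (0, issue.severity))
        if issue.severity.value > worst.value:
            worst = issue.severity
        summary[issue.category] = (count + 1, worst)
    return summary
```

Because every note carries a category and a timestamp, “I don’t like it” turns into something an engineer can find and replay.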

The shift that makes evaluation real: scenario packs, not generic test sets

If you take one thing from this blog, take this: your evaluation should be built around scenario packs that mirror your product, not around generic test sets that mirror your dataset.

A scenario pack is not huge. It is intentionally sharp.

For voice assistants, realistic wake word and command datasets expose edge cases missed by lab prompts.

For automotive deployments, environmental variability requires evaluation against in-car speech datasets recorded across real noise conditions.

For a voice bot, you build packs around moments that define trust: onboarding, authentication, error handling, refunds, cancellations, escalation, and reassurance. You include realistic phrasing, not perfect sentences. You include interruptions and confirmations. You include the “annoyed user” flow, because that’s where tone mismatches hurt the most.

For narration and education, you build packs around paragraph-level content: a two-minute explanation, a list-heavy segment, a technical walkthrough, a story-like section with emotion shifts. You include long sentences and punctuation-heavy lines, because that’s where prosody and breath patterns reveal themselves.

For dubbing and localization, you build packs around expressive fidelity: conversational lines, emotional reactions, sarcasm risk, cultural idioms, and code-mix. You include names, titles, and brand-like terms because real content has them.

A scenario pack is essentially your product’s “truth serum.” It’s the fastest way to expose whether a voice works where it actually needs to work.
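A scenario pack can live as plain data next to your test suite. In this sketch, `Scenario`, `ScenarioPack`, and the tag names are hypothetical; the point is that each prompt records which product moment and risk areas it exercises, so gaps in the pack are visible before anyone listens:

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Scenario:
    name: str
    flow: str   # product moment, e.g. "refunds" or "escalation"
    text: str   # realistic script, not a tidy lab sentence
    tags: set[str] = field(default_factory=set)  # risk areas this prompt exercises


@dataclass
class ScenarioPack:
    product: str
    scenarios: list[Scenario]

    def missing_tags(self, required: set[str]) -> set[str]:
        """Risk areas in `required` that no scenario in the pack exercises yet."""
        covered = set().union(*(s.tags for s in self.scenarios))
        return set(required) - covered


voice_bot_pack = ScenarioPack(
    product="voice bot",
    scenarios=[
        Scenario("cancel_confirm", "cancellation",
                 "You can cancel your subscription today.", {"sensitive", "emphasis"}),
        Scenario("refund_amount", "refunds",
                 "Your refund of ₹1,25,000 will be credited by 12/05/2026.",
                 {"numbers", "currency", "dates"}),
        Scenario("annoyed_escalation", "escalation",
                 "I understand this is frustrating. Let me connect you to someone now.",
                 {"sensitive", "annoyed_user"}),
    ],
)

REQUIRED = {"sensitive", "numbers", "dates", "names", "interruptions"}
print(voice_bot_pack.missing_tags(REQUIRED))  # names and interruptions are not covered yet
```

Keeping the pack small and tagged makes it easy to see, at a glance, that this draft still has no names or interruptions in it.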

How to run human listening so it produces actionable signal, not debate

Human listening becomes messy when it’s informal. People argue. Feedback becomes personal. You get “I like it” versus “I don’t.” That’s useless.

The fix is to treat listening like an engineering discipline with a human instrument.

Start by defining the decision you’re making. Are you choosing between two model versions? Are you approving a release? Are you validating a fine-tune? Listening should have a purpose that ends in an action.

Then run blind comparisons whenever possible. Most teams underestimate how much bias enters when listeners know which sample is the “new model.” Blind A/B doesn’t need to be complicated. It just needs to be consistent.
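Keeping blind A/B consistent mostly means randomizing presentation order per prompt and keeping the unblinding key away from listeners. A minimal sketch with hypothetical function names; it adds an exact two-sided sign test as one simple way to check whether a preference count differs from chance:

```python
import math
import random


def build_blind_trials(pairs, seed=0):
    """pairs: (prompt_id, baseline_path, candidate_path) tuples.

    Returns (trials shown to listeners, key kept by the coordinator).
    Use neutral file names so the paths don't reveal which system made a sample.
    """
    rng = random.Random(seed)
    trials, key = [], {}
    for prompt_id, baseline, candidate in pairs:
        candidate_first = rng.random() < 0.5
        first, second = (candidate, baseline) if candidate_first else (baseline, candidate)
        trials.append({"prompt_id": prompt_id, "A": first, "B": second})
        key[prompt_id] = "A" if candidate_first else "B"
    return trials, key


def unblind(choices, key):
    """choices: prompt_id -> 'A' or 'B'. Returns (candidate wins, judgments)."""
    wins = sum(1 for pid, choice in choices.items() if choice == key[pid])
    return wins, len(choices)


def sign_test_p(wins, n):
    """Exact two-sided binomial (sign) test against a 50/50 null."""
    k = min(wins, n - wins)
    tail = sum(math.comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)
```

The sign test treats every judgment as independent, which overstates confidence when a few listeners each rate many prompts, so read the p-value as a rough guide alongside a per-listener breakdown. Ties or “no preference” answers are assumed to be dropped before counting.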

Use a small but stable panel instead of a huge crowd that changes every time. Stability matters because your goal is often regression detection and consistent taste, not a one-time academic measurement. For multilingual products, make sure the panel matches your real audience ear, especially for accent familiarity.

Most importantly, use a rubric that captures failure modes. Not “rate 1-5.” Ask for structured observations:

Was any word hard to process?

Did any sentence feel emotionally wrong for its intent?

Did any pause feel awkward or change meaning?

Did anything feel tiring over time?

Did anything feel culturally out of place?

When you collect feedback this way, you can aggregate and act. You can say, “We have a recurring issue with pause placement around named entities,” not “Some people didn’t like it.”

This is how listening becomes repeatable.
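Turning those rubric answers into a statement like “pause placement around named entities” is mostly a grouping step. A sketch, assuming each “yes” answer is logged with the listener, a rubric question key, and a context tag; the question keys, tags, and function name are hypothetical (tags could come from the scenario pack):

```python
from collections import defaultdict

# Hypothetical keys for the five rubric questions.
RUBRIC = {
    "hard_to_process": "Was any word hard to process?",
    "emotionally_wrong": "Did any sentence feel emotionally wrong for its intent?",
    "awkward_pause": "Did any pause feel awkward or change meaning?",
    "tiring": "Did anything feel tiring over time?",
    "culturally_off": "Did anything feel culturally out of place?",
}


def recurring_issues(observations, min_listeners=2):
    """observations: (listener_id, question_key, context_tag) for each 'yes' answer.

    Returns {(question_key, context_tag): distinct listener count} for patterns
    reported by at least min_listeners listeners, most widespread first.
    """
    listeners = defaultdict(set)
    for listener_id, question, context in observations:
        if question not in RUBRIC:
            raise KeyError(f"unknown rubric question: {question}")
        listeners[(question, context)].add(listener_id)
    recurring = {k: len(v) for k, v in listeners.items() if len(v) >= min_listeners}
    return dict(sorted(recurring.items(), key=lambda kv: kv[1], reverse=True))
```

Counting distinct listeners rather than raw mentions keeps one vocal panelist from turning a personal preference into a “recurring” issue.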

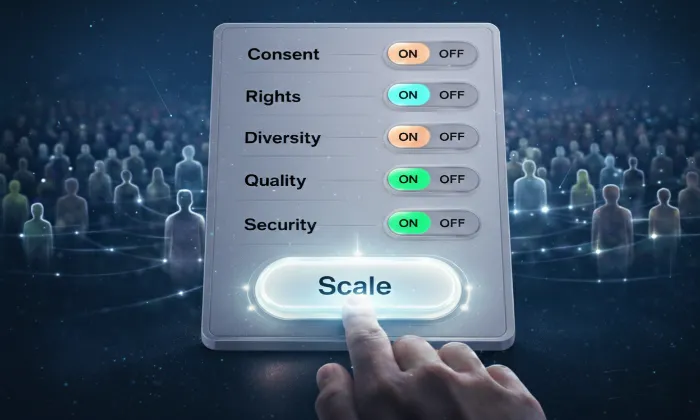

Operationalizing this at scale often requires workflow governance platforms like Yugo-AI Data Platform, which manage contributors, consent, metadata, and quality controls.

The hybrid evaluation stack: what metrics are for, and what humans are for

The smartest teams don’t treat this as humans versus metrics. They treat it as division of labor.

Metrics are excellent for scale and surveillance. They can run across thousands of prompts every build. They can detect that something shifted. They can flag regressions in loudness, clipping, timing distributions, or pronunciation checks for known terms. They are your early warning system.
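As an example of that surveillance role, a build-over-build check on level and clipping takes only a few lines. The thresholds and function names are assumptions, and plain RMS is not perceptual loudness; pipelines that need loudness matching typically use ITU-R BS.1770 integrated loudness instead:

```python
import math


def audio_stats(samples, clip_threshold=0.999):
    """Simple per-file stats on float samples in [-1.0, 1.0]."""
    if not samples:
        raise ValueError("empty audio")
    rms = math.sqrt(sum(x * x for x in samples) / len(samples))
    rms_dbfs = 20 * math.log10(rms) if rms > 0 else float("-inf")
    clipped = sum(1 for x in samples if abs(x) >= clip_threshold) / len(samples)
    return {"rms_dbfs": rms_dbfs, "clipped_fraction": clipped}


def regression_flags(baseline, candidate, max_db_shift=1.5, max_clipped=0.001):
    """Compare a candidate build's stats with the baseline for the same prompt.

    Returns a list of human-readable reasons to flag; empty means no flag.
    """
    if math.isinf(candidate["rms_dbfs"]):
        return ["silent output"]
    flags = []
    shift = candidate["rms_dbfs"] - baseline["rms_dbfs"]
    if abs(shift) > max_db_shift:
        flags.append(f"level shifted by {shift:+.1f} dB")
    if candidate["clipped_fraction"] > max_clipped:
        flags.append(f"clipping in {candidate['clipped_fraction']:.2%} of samples")
    return flags
```

Checks like this are cheap enough to run on every prompt in every build; they say that something changed, not whether the change matters to a listener.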

Humans are excellent for risk and truth. They tell you whether the voice is socially aligned, whether it feels right in context, whether it carries trust, whether it creates friction. They catch the failures that cause real user rejection even when metrics look stable.

Governance frameworks often align evaluation with documented compliance standards found under Policies & Compliance, especially in regulated industries.

A practical setup looks like this in real teams:

You run automated checks continuously on a broad set. You use those checks to avoid shipping obvious regressions and to track stability over time. Then, before any release candidate goes live, you run the scenario pack through structured human listening and treat it as a gate.

This doesn’t slow you down. It prevents you from paying the cost later in churn and rework.

What most teams don’t expect: the “too good” problem

Here’s an unexpected but important nuance: sometimes making TTS “more human” can make evaluation harder and failure more dangerous.

When a voice is clearly synthetic, users forgive it. When a voice is close to a human, users raise their standards. Small unnatural moments become more noticeable because they break the illusion. Worse, users interpret them socially. A tiny prosody glitch can sound like sarcasm, boredom, or annoyance. The model didn’t intend any of that, but the user’s brain doesn’t care. It interprets anyway.

In high-trust sectors like Healthcare and Financial Services, tone perception directly impacts confidence and adoption.

This is why human listening becomes more important as models improve. The failures become subtler, and the social stakes become higher.

If you’re shipping voices into high-trust contexts, this matters a lot. Your model doesn’t need to be maximally human. It needs to be predictably appropriate.

Release governance: evaluation isn’t just testing, it’s decision-making

In mature TTS deployments, evaluation is tied to governance. Not corporate bureaucracy, but a clear rule: what blocks a release?

Without release rules, teams ship because “it sounded okay to a few people.” That’s how production issues slip through.

For teams wanting to see structured deployment approaches in action, review real-world implementations in Case Studies.

Evaluation must tie to release governance, not informal preference.

A simple governance approach is to define “non-negotiables” for your product. For example:

If any scenario pack sample contains a meaning-changing prosody error, block the release.

If number reading creates repeated cognitive friction in key flows, block the release.

If long-form narration causes fatigue in the two-minute pack, block the release.

If tone mismatch appears in sensitive scenarios, block the release.

These don’t need to be complicated. They just need to exist. They force the team to treat user perception as a real acceptance criterion, not a soft preference.
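Rules like these can be encoded so the gate is mechanical once listeners have logged their findings. In this sketch the blocker keys and function name are hypothetical, and reading “repeated” as two or more findings is an assumption you should set for your own product:

```python
from collections import Counter

# Hypothetical encoding of the non-negotiables: blocker key -> findings needed to block.
NON_NEGOTIABLES = {
    "meaning_changing_prosody": 1,  # any scenario pack sample
    "number_friction_key_flow": 2,  # "repeated" friction, assumed to mean 2+
    "long_form_fatigue": 1,         # in the two-minute narration pack
    "sensitive_tone_mismatch": 1,   # in sensitive scenarios
}


def release_decision(findings):
    """findings: (sample_id, blocker_key) pairs from the human listening gate.

    Findings with keys outside NON_NEGOTIABLES are ignored here (logged elsewhere).
    Returns ("BLOCK", reasons) or ("SHIP", []).
    """
    counts = Counter(blocker for _, blocker in findings)
    reasons = [
        f"{blocker}: {counts[blocker]} finding(s)"
        for blocker, needed in NON_NEGOTIABLES.items()
        if counts[blocker] >= needed
    ]
    return ("BLOCK", reasons) if reasons else ("SHIP", [])
```

Writing the thresholds down, even this crudely, moves the argument from “does it sound okay?” to “did it trip a rule we agreed on in advance?”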

Where training data becomes the real lever, and evaluation tells you what data you actually need

A lot of TTS teams think of evaluation as a final step. In reality, evaluation is the most efficient way to discover what your training data is missing.

If your scenario pack repeatedly fails on named entities, you likely need better coverage and better normalization strategies. If your voice struggles with numbers, it’s often not just a model issue; it’s training distribution and formatting diversity. If long-form drift appears, you probably under-trained on paragraph-level recordings or you trained on content with limited expressive variation.
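A crude first check on training distribution is to measure how often the formats that fail in evaluation appear in your training transcripts at all. The regular expressions and function name below are hypothetical starting points, not a complete normalizer, and would need tuning for your locales and scripts:

```python
import re

# Hypothetical format detectors; tune them to your locales and scripts.
PATTERNS = {
    "currency": re.compile(r"[₹$€£]\s?\d"),
    "date": re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b"),
    "number": re.compile(r"\d"),
    "acronym": re.compile(r"\b[A-Z]{2,}\b"),
}


def coverage(transcripts):
    """Fraction of transcripts that contain each format category."""
    total = len(transcripts)
    if total == 0:
        return {name: 0.0 for name in PATTERNS}
    return {
        name: sum(1 for t in transcripts if pattern.search(t)) / total
        for name, pattern in PATTERNS.items()
    }
```

Comparing these fractions with the same categories in your scenario pack gives a first hint at whether a number or date failure is a coverage gap or something the model sees often but still handles poorly.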

Even emotional congruence issues often trace back to data. Not “add emotions,” but include realistic speaking styles that match the contexts you want to serve: calm explanation, quick confirmation, gentle correction, confident instruction, empathetic reassurance.

This is where experienced teams quietly outperform others. They don’t just tune models. They tune data. They use evaluation as the radar that guides what data to collect next, what to rebalance, and what to stress-test.

Specialized TTS speech data collection becomes the lever, not just model fine-tuning.

A short checklist that actually matters in practice

Most checklists are useless because they’re generic. This one is intentionally focused on what breaks in real deployments.

If you’re evaluating a TTS model for production, you want to be able to answer these:

Does the voice stay consistent and non-fatiguing beyond the first 30-60 seconds?

Does it handle names, brands, acronyms, and mixed-language fragments without awkwardness?

Do numbers, dates, currency, and addresses feel easy to follow, not merely correct?

Does tone match intent across your highest-risk scenarios, especially negative or sensitive moments?

Does emphasis land correctly in sentences where emphasis changes meaning?

Does the voice behave well in your product’s real rhythm: short confirmations, interruptions, and quick turns?

Do your evaluation listeners represent the ear of your users, not just your internal team?

Do you have release rules that treat perceptual failures as blockers?

If you can answer “yes” consistently, you’re not just evaluating TTS. You’re managing a voice product.

Why human listening still matters, even in a world of better models

Human listening matters because the most damaging TTS failures are not acoustic. They’re experiential. They affect trust, comfort, and comprehension in ways that numbers do not reliably predict.

It also matters because your users are not listening for correctness. They are listening for alignment. They want the voice to feel appropriate for the moment, for the product, for their language habits, for their culture, and for their tolerance levels.

A strong evaluation approach doesn’t worship subjectivity, and it doesn’t dismiss it either. It respects the reality that synthetic speech is a human-facing interface. Metrics keep you honest at scale. Listening keeps you honest about how the voice actually lands with people.

And the teams that treat listening as a core part of evaluation don’t just ship voices. They ship experiences people accept.

Explore more insights on evaluation frameworks and AI data strategy on the FutureBeeAI Blog.

A practical next step if you’re improving your TTS evaluation

If you’re serious about improving TTS evaluation, the fastest win is to build a scenario-based listening pack for your product and run it as a consistent release gate. Once you do that, you’ll immediately see what your current metrics were hiding.

If you want, we can share a ready-to-use template for a scenario-based evaluation pack that covers voice bot, narration, and localization flows, along with a structured listening rubric that turns feedback into engineering signals. We can also help you translate what you find into training data requirements, whether you’re fine-tuning an existing voice or building multilingual capabilities from scratch.

No hard sell. Just the tools and thinking that make TTS evaluation real.

If you are looking to benchmark or elevate your TTS evaluation process with experienced listening panels, scenario-driven assessment frameworks, and multilingual expertise grounded in real deployment contexts, it helps to work with specialists like FutureBeeAI, who treat evaluation as a discipline rather than a formality.