What happens when model evaluation is done only to satisfy benchmarks?

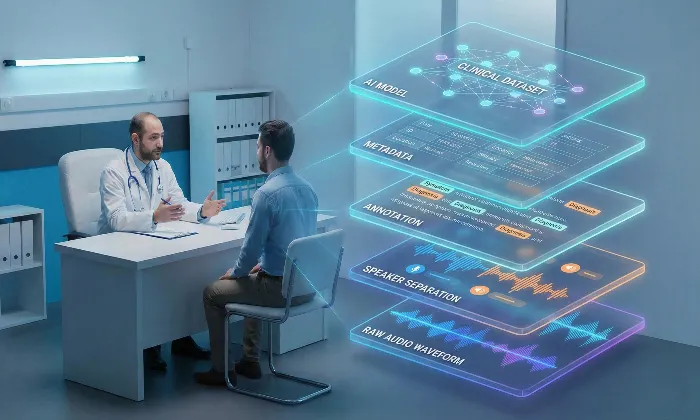

Focusing solely on benchmarks can look like a reliable way to measure an AI model's success. This narrow focus, however, can create an illusion of progress while deeper issues stay hidden: a model may post impressive scores in controlled evaluations yet fail when exposed to real-world conditions.

This situation is similar to a student who performs well on practice tests but struggles in an actual exam. Benchmark performance may look strong, but it does not necessarily reflect real-world readiness.

Unpacking the Risks

When evaluation is centered only on benchmark scores, teams can develop a false sense of confidence. Improved metrics may signal progress, yet they often fail to capture user-facing weaknesses.

For example, a text-to-speech (TTS) model might achieve strong Mean Opinion Scores (MOS) during controlled testing. The speech may sound clear and intelligible in a laboratory setting, yet the same model could struggle with unfamiliar accents, emotional tones, or domain-specific vocabulary outside the lab.
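To make the MOS example concrete, the sketch below averages listener ratings overall and then per test condition. The condition names and the ratings are hypothetical, chosen only to illustrate how one aggregate score can mask weak slices; a real study would use its own rating protocol.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical listener ratings: (test condition, score on a 1-5 scale).
ratings = [
    ("clean_studio", 5), ("clean_studio", 4), ("clean_studio", 5),
    ("clean_studio", 4), ("clean_studio", 5), ("clean_studio", 4),
    ("unfamiliar_accent", 3), ("unfamiliar_accent", 2),
    ("domain_vocabulary", 3), ("domain_vocabulary", 2),
]


def mos_by_condition(rows):
    """Return the mean opinion score for each test condition."""
    grouped = defaultdict(list)
    for condition, score in rows:
        grouped[condition].append(score)
    return {condition: mean(scores) for condition, scores in grouped.items()}


overall_mos = mean(score for _, score in ratings)
per_condition = mos_by_condition(ratings)

print(f"overall MOS: {overall_mos:.2f}")
for condition, score in per_condition.items():
    print(f"{condition}: {score:.2f}")
```

In this made-up sample the overall average is pulled up by the clean studio recordings, while the accent and vocabulary slices score much lower. Reporting the per-condition breakdown alongside the headline number is one way to keep those weaknesses visible.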

The Pitfalls of Benchmark-Driven Evaluation

Superficial success: Metrics such as MOS can highlight improvements under ideal conditions, but they may hide problems that appear in realistic scenarios. A model might perform well in clean audio environments but fail when background noise, diverse accents, or longer conversations are introduced.

Overfitting to evaluation datasets: When teams repeatedly optimize models against a fixed test set, scores on that set may keep improving while generalization declines. For example, a TTS model tuned extensively against a specific speech dataset might struggle when exposed to new speaking styles or vocabulary patterns.

Neglecting user experience: Benchmarks measure technical performance, but users evaluate systems based on experience. A model may meet benchmark thresholds while still sounding unnatural or emotionally flat. In speech systems, subtle issues such as rhythm, tone, or conversational flow strongly influence user perception.
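One simple way to check for the overfitting pitfall described above is to score the same model on the long-used benchmark and on a freshly collected set, then compare the two. The sketch below is a minimal illustration: the scores, the function names, and the 0.3 tolerance are all assumptions, not established thresholds.

```python
from statistics import mean


def generalization_gap(benchmark_scores, fresh_scores):
    """Mean benchmark score minus mean score on freshly collected data."""
    return mean(benchmark_scores) - mean(fresh_scores)


def is_suspicious(gap, tolerance=0.3):
    """Flag the model when the benchmark beats fresh data by more than `tolerance`.

    The default tolerance is an illustrative assumption; teams would pick
    a value that suits their own rating scale and noise level.
    """
    return gap > tolerance


# Hypothetical per-batch MOS values for one model.
benchmark = [4.4, 4.5, 4.6, 4.5]   # fixed test set the team has tuned against
fresh = [3.8, 3.6, 4.0, 3.8]       # newly collected speakers and vocabulary

gap = generalization_gap(benchmark, fresh)
flagged = is_suspicious(gap)
print(f"gap: {gap:.2f}, flagged: {flagged}")
```

A large positive gap does not prove overfitting on its own, since the fresh set may simply be harder, but it is a useful prompt to refresh the test set and inspect the failing samples.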

Strategies for Meaningful Model Assessment

Moving beyond benchmark-focused evaluation requires a broader approach that reflects how users actually experience AI systems.

Adopt user-centered evaluation metrics: Include attributes such as naturalness, emotional appropriateness, pronunciation accuracy, and perceived intelligibility. These factors directly influence how users judge speech systems.

Diversify evaluation methodologies: Combining multiple methods such as A/B testing, paired comparisons, and attribute-based rubrics provides deeper insights than relying on a single benchmark score.

Implement continuous evaluation: Model evaluation should not stop after deployment. Continuous monitoring and repeated human evaluation help detect silent regressions and performance drift that may develop over time.
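Continuous evaluation can start with something as small as comparing recent human ratings against a baseline recorded at release. The following sketch assumes periodic MOS samples are already being collected; the function name, the sample values, and the 0.2 allowed drop are hypothetical.

```python
from statistics import mean


def detect_regression(baseline_scores, recent_scores, max_drop=0.2):
    """Compare recent ratings with the release baseline.

    Returns (regressed, drop), where `drop` is the baseline mean minus the
    recent mean and `regressed` is True when the drop exceeds `max_drop`.
    The default `max_drop` is an illustrative assumption.
    """
    drop = mean(baseline_scores) - mean(recent_scores)
    return drop > max_drop, drop


# Hypothetical human ratings gathered at release and in the latest review cycle.
baseline = [4.5, 4.4, 4.6, 4.5]
recent = [4.1, 4.0, 4.2, 4.1]

regressed, drop = detect_regression(baseline, recent)
print(f"drop: {drop:.2f}, regressed: {regressed}")
```

A mean comparison like this is deliberately crude. It catches large silent regressions, while smaller shifts would need more ratings and a proper statistical test.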

Organizations such as FutureBeeAI incorporate these broader evaluation frameworks to ensure models are assessed not only through metrics but also through real user perception.
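As an illustration of the paired comparisons mentioned in this section, listener preferences between two systems can be checked with an exact sign test before a winner is declared. This is a minimal sketch using only the Python standard library; the vote counts are invented, and ties are assumed to have been dropped before counting.

```python
from math import comb


def sign_test_p_value(wins_a, wins_b):
    """Two-sided exact sign test p-value for paired preference votes.

    Under the null hypothesis each listener is equally likely to prefer
    either system. Ties are assumed to be excluded beforehand.
    """
    n = wins_a + wins_b
    if n == 0:
        return 1.0
    k = max(wins_a, wins_b)
    # Probability of a split at least this lopsided in one direction.
    tail = sum(comb(n, i) for i in range(k, n + 1)) / 2 ** n
    return min(1.0, 2 * tail)


# Hypothetical votes: each listener heard both systems and picked one.
votes = ["A"] * 16 + ["B"] * 4
wins_a = votes.count("A")
wins_b = votes.count("B")

p_value = sign_test_p_value(wins_a, wins_b)
print(f"A preferred {wins_a}/{wins_a + wins_b} times, p = {p_value:.4f}")
```

A small p-value suggests the preference is unlikely to be chance, but it says nothing about why listeners preferred one system. Attribute-based rubrics are still needed to explain the result.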

Practical Takeaway

High benchmark scores do not guarantee real-world success. Evaluation processes should focus on whether models perform reliably in practical situations and deliver meaningful user experiences.

When evaluation frameworks combine technical metrics with human-centered assessments, teams gain a clearer understanding of model performance and risk.

By prioritizing real-world outcomes over benchmark optimization, organizations can build AI systems that perform reliably beyond the laboratory environment.

If you want to explore how structured evaluation frameworks can strengthen your AI systems, you can learn more or reach out through the FutureBeeAI contact page.

FAQs

Q. What should teams focus on instead of benchmarks?

A. Teams should emphasize user-centered metrics such as naturalness, emotional appropriateness, pronunciation accuracy, and perceived intelligibility to ensure the system performs well in real-world interactions.

Q. How can organizations ensure their models remain effective over time?

A. Organizations should implement continuous evaluation processes that include periodic human assessments, updated test sets, and monitoring systems designed to detect regressions or performance drift.
