How do you decide which TTS outputs should be evaluated by humans?

A Text-to-Speech (TTS) system can perform well in testing yet fail in real-world environments, and this often happens when evaluation relies too heavily on automated metrics. Automated checks are useful for detecting obvious technical issues, but they cannot fully capture how real listeners perceive speech. Human evaluation plays a crucial role in identifying subtle issues such as unnatural rhythm, incorrect emphasis, or mismatched emotional tone.

Selecting the right outputs for human evaluation ensures that TTS systems are assessed where human perception matters most.

Why Human Evaluation Is Necessary in TTS

Automated metrics can measure pronunciation accuracy, timing, or error rates, but they often miss perceptual elements that influence user experience. Speech that is technically correct may still feel robotic, emotionally flat, or unnatural.

Human evaluators can identify these subtle problems because they naturally interpret tone, pacing, and contextual meaning. Their feedback helps ensure that speech systems meet user expectations beyond technical performance.

Criteria for Selecting TTS Outputs for Human Evaluation

Contextually important scenarios: Prioritize outputs that reflect how the system will actually be used. For example, if a TTS system supports customer service interactions, evaluation should include conversational responses and typical dialogue scenarios.

Outputs with variable perceived quality: Speech samples that show inconsistent tone, pacing, or prosody should be prioritized for human review. These samples often reveal issues that automated metrics cannot detect.

High-risk or sensitive applications: Systems used in domains such as healthcare, legal services, or emergency communication require additional scrutiny. Even small pronunciation or tone errors can lead to misunderstandings.

User-reported problem areas: Real-world user feedback provides valuable signals about where models struggle. Outputs associated with repeated user complaints should be reviewed through human evaluation.

Performance anomalies or regressions: Sudden changes in evaluation metrics or user engagement may signal hidden issues. Human evaluators can detect whether these changes reflect genuine quality problems.
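The five criteria above can be combined into a simple priority score that decides which samples go to human listeners first. The sketch below is only an illustration: the `TTSSample` fields, the weights, and the function names are all hypothetical and are not taken from any particular evaluation platform. Teams would need to tune the weights to their own risk profile.

```python
from dataclasses import dataclass, field


@dataclass
class TTSSample:
    """One synthesized output plus the signals used for triage (all hypothetical)."""
    sample_id: str
    core_use_case: bool = False      # mirrors a real deployment scenario
    high_risk_domain: bool = False   # e.g. healthcare, legal, emergency
    user_complaints: int = 0         # complaints linked to this output
    quality_scores: list = field(default_factory=list)  # automated scores in [0, 1]
    metric_drop: float = 0.0         # drop in automated score vs. previous release


def review_priority(s: TTSSample) -> float:
    """Higher score means the sample should reach human listeners sooner."""
    # Spread between automated scores stands in for "variable perceived quality".
    spread = max(s.quality_scores) - min(s.quality_scores) if s.quality_scores else 0.0
    score = 0.0
    score += 1.0 if s.core_use_case else 0.0
    score += 2.0 if s.high_risk_domain else 0.0
    score += 0.5 * min(s.user_complaints, 4)  # cap so one noisy sample cannot dominate
    score += 2.0 * spread
    score += 2.0 * max(s.metric_drop, 0.0)    # only drops count, not improvements
    return score


def select_for_review(samples, budget):
    """Return the `budget` highest-priority samples for human evaluation."""
    return sorted(samples, key=review_priority, reverse=True)[:budget]
```

With a fixed listening budget, `select_for_review(samples, budget=50)` would return the fifty samples where the criteria overlap most, so that high-risk, frequently reported outputs are heard before low-stakes ones.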

Practical Methods for Implementing Human Evaluation

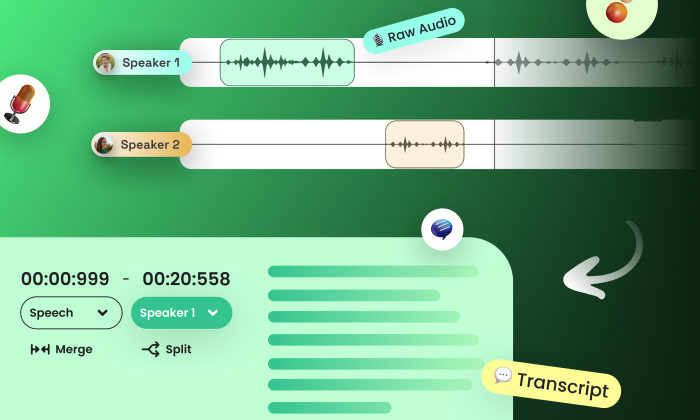

Structured evaluation rubrics: Provide clear evaluation guidelines focusing on attributes such as naturalness, prosody, pronunciation accuracy, and emotional appropriateness.

Evaluation platforms with traceability: Platforms like FutureBeeAI provide structured evaluation workflows with logging and traceability, ensuring that results remain transparent and auditable.

Continuous evaluation cycles: Human evaluation should be repeated regularly rather than conducted only once before deployment. Continuous monitoring helps detect performance drift and silent regressions.
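As a rough sketch of how rubric scores might be aggregated and compared across evaluation cycles, the example below averages listener ratings per attribute, flags weak attributes, and lists attributes whose mean fell between two cycles. The 1 to 5 rating scale, the `threshold` and `tolerance` values, and the record layout are assumptions made for illustration; they do not describe any specific platform's data format.

```python
from collections import defaultdict
from statistics import mean

# Rubric attributes named in the text; the 1-5 scale is an assumption.
RUBRIC = ("naturalness", "prosody", "pronunciation", "emotional_appropriateness")


def summarize(ratings, threshold=3.5):
    """Average each rubric attribute per sample and flag means below `threshold`.

    `ratings` is an iterable of dicts holding `sample_id`, `rater_id`,
    and one 1-5 score per rubric attribute.
    """
    by_sample = defaultdict(list)
    for row in ratings:
        by_sample[row["sample_id"]].append(row)

    summary = {}
    for sample_id, rows in by_sample.items():
        means = {attr: mean(row[attr] for row in rows) for attr in RUBRIC}
        summary[sample_id] = {
            "means": means,
            "flagged": [attr for attr in RUBRIC if means[attr] < threshold],
            "n_raters": len(rows),  # kept so reviewers can judge reliability
        }
    return summary


def regressions(previous, current, tolerance=0.3):
    """List (sample_id, attribute) pairs whose mean fell by more than `tolerance`."""
    dropped = []
    for sample_id, cur in current.items():
        prev = previous.get(sample_id)
        if prev is None:
            continue  # new sample, nothing to compare against
        for attr in RUBRIC:
            if prev["means"][attr] - cur["means"][attr] > tolerance:
                dropped.append((sample_id, attr))
    return dropped
```

Keeping `rater_id` in each record and storing every cycle's summary is one simple way to keep results traceable: a flagged attribute can be followed back to the individual ratings that produced it.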

Practical Takeaway

Choosing which outputs to evaluate with human listeners requires a strategic approach. By focusing on contextually important samples, outputs with inconsistent quality, high-risk applications, user-reported problem areas, and unexpected performance changes, teams can allocate limited human evaluation resources effectively.

Combining automated metrics with targeted human listening evaluations helps ensure that Text-to-Speech systems perform reliably in real-world scenarios.

Organizations seeking structured evaluation frameworks can explore how FutureBeeAI supports scalable human evaluation workflows for speech systems.

FAQs

Q. Why can’t automated metrics fully evaluate TTS quality?

A. Automated metrics measure technical properties such as pronunciation accuracy but cannot fully assess perceptual attributes like naturalness, emotional tone, and conversational flow.

Q. When should human evaluation be prioritized?

A. Human evaluation should focus on outputs that are contextually important, show inconsistent quality, relate to sensitive applications, reflect user complaints, or show signs of performance regression.
