How do you document human evaluation for audits?

Careful documentation of human evaluations is essential for auditability, transparency, and long-term reliability in AI systems. This is especially critical for systems such as text-to-speech (TTS) models, where human judgment directly determines evaluation outcomes. Without structured documentation, it becomes difficult to trace decisions, validate results, or improve evaluation quality over time.

Why Documenting Human Evaluations Matters

Documenting human evaluations goes beyond recording scores. It captures the full context behind decisions, enabling teams to understand not just what was evaluated, but how and why conclusions were reached.

Traceability: Provides a clear record of who evaluated what, when, and under which conditions.

Regulatory Compliance: Ensures readiness for audits by maintaining structured and verifiable records.

Continuous Improvement: Enables analysis of patterns, inconsistencies, and evaluator behavior to refine processes over time.

Key Components of Effective Evaluation Documentation

Evaluator Identification: Maintain records of evaluator profiles, including qualifications, training status, and performance history. This adds context and credibility to evaluation outputs.

Evaluation Context: Document the conditions under which evaluation occurs, including model version, dataset used, task type, and environmental conditions such as the listening setup. This makes results reproducible and easier to interpret.

Evaluation Methodology: Clearly define the methods used, such as Mean Opinion Score (MOS) ratings, A/B testing, or structured evaluation frameworks. Include the rationale for choosing each method so that it can be checked against the evaluation objectives.

Criteria and Attributes: Specify the attributes being evaluated, such as naturalness, intelligibility, prosody, or emotional tone. Consistent criteria ensure comparability across evaluations.

Feedback and Results: Capture both quantitative scores and qualitative feedback. Written insights often reveal issues that numerical scores cannot fully explain.

Disagreement Analysis: Document cases where evaluators disagree. These instances can highlight ambiguity in criteria, evaluator bias, or complex perceptual issues that require deeper investigation.
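The components above can be sketched as a single record type plus a small summary step that flags disagreement. This is a minimal illustration, not a prescribed schema: the `EvaluationRecord` fields, the `summarize` helper, and the spread threshold of 1.0 are all assumptions chosen for the example, and a real workflow would adapt them to its own criteria and rating scale.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass(frozen=True)
class EvaluationRecord:
    """One rating by one evaluator (hypothetical schema for illustration)."""
    evaluator_id: str    # links to the evaluator's profile and training status
    item_id: str         # the sample being rated
    model_version: str   # evaluation context
    dataset: str         # evaluation context
    method: str          # e.g. "MOS" or "A/B"
    attribute: str       # e.g. "naturalness", "intelligibility"
    score: int           # rating on the method's scale (1-5 here)
    comment: str = ""    # qualitative feedback


def summarize(records, spread_threshold=1.0):
    """Group scores per (item, attribute) and flag high-spread groups.

    Spread is the population standard deviation of the scores; the
    threshold is an assumed value, not a standard.
    """
    groups = defaultdict(list)
    for record in records:
        groups[(record.item_id, record.attribute)].append(record.score)

    summary = {}
    for key, scores in groups.items():
        spread = pstdev(scores)
        summary[key] = {
            "n": len(scores),
            "mean": mean(scores),
            "spread": spread,
            "disagreement": spread > spread_threshold,
        }
    return summary


# Example usage with made-up ratings.
ratings = [
    EvaluationRecord("eval-01", "utt-001", "tts-v2", "set-a", "MOS", "naturalness", 5),
    EvaluationRecord("eval-02", "utt-001", "tts-v2", "set-a", "MOS", "naturalness", 1,
                     comment="Robotic pitch on the final word."),
    EvaluationRecord("eval-03", "utt-001", "tts-v2", "set-a", "MOS", "naturalness", 5),
]
for key, stats in summarize(ratings).items():
    print(key, stats)
```

Flagged items are candidates for review rather than errors. The written comments attached to the outlying scores are often the quickest way to tell whether the cause is an ambiguous criterion or a genuine perceptual difference.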

Practical Implementation Approach

Effective documentation requires systems that capture and organize evaluation data automatically. Metadata logging, version tracking, and structured storage help ensure that every evaluation activity is recorded without the gaps that manual record-keeping tends to leave.
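As a rough sketch of what automatic metadata logging might look like, the function below appends each evaluation event as one JSON line with a UTC timestamp and the model version attached. The function name `log_evaluation_event`, the field names, and the JSON Lines layout are assumptions made for illustration; they do not describe any particular platform's logging format.

```python
import io
import json
from datetime import datetime, timezone


def log_evaluation_event(stream, *, evaluator_id, item_id, action,
                         model_version, payload=None):
    """Append one event to `stream` as a JSON line and return the event.

    The timestamp is taken at logging time, so the evaluator never has
    to enter it by hand.
    """
    event = {
        "logged_at": datetime.now(timezone.utc).isoformat(),
        "evaluator_id": evaluator_id,
        "item_id": item_id,
        "action": action,
        "model_version": model_version,
        "payload": payload or {},
    }
    stream.write(json.dumps(event, sort_keys=True) + "\n")
    return event


# Example usage: an in-memory buffer stands in for a log file.
log = io.StringIO()
log_evaluation_event(
    log,
    evaluator_id="eval-01",
    item_id="utt-001",
    action="score_submitted",
    model_version="tts-v2",
    payload={"attribute": "naturalness", "score": 4},
)
print(log.getvalue(), end="")
```

Writing one self-describing line per event keeps the log append-only and easy to filter later by evaluator, item, or model version.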

At FutureBeeAI, evaluation workflows are designed to log evaluator actions, decisions, and contextual data in real time. This ensures that documentation is complete, accessible, and audit-ready while also supporting continuous refinement of evaluation processes.

Practical Takeaway

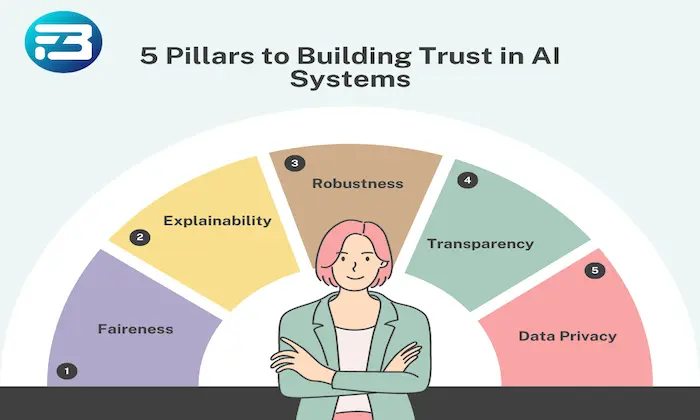

Human evaluation documentation is a foundational requirement for building trustworthy AI systems. It enables traceability, supports compliance, and provides the insights needed to improve evaluation quality over time.

By standardizing documentation practices and integrating them into evaluation workflows, teams can ensure that their systems remain transparent, auditable, and aligned with real-world expectations. If you are looking to strengthen your evaluation documentation strategy, you can explore tailored solutions through the contact page.

FAQs

Q. What should be done when evaluators disagree on results?

A. Disagreements should be documented and analyzed, as they often reveal gaps in evaluation criteria, ambiguity in instructions, or perceptual differences. Addressing these helps improve evaluation consistency and clarity.

Q. How can data integrity be ensured in evaluation documentation?

A. Data integrity can be maintained through controlled access, audit trails, version tracking, and regular reviews of documentation processes. These measures ensure that records remain accurate, secure, and compliant.
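One possible way to make an audit trail tamper-evident is to chain entries by hash, so that each entry's hash covers the previous entry's hash together with its own record. The sketch below illustrates that idea with Python's standard `hashlib`; the helper names `append_entry` and `verify_chain` and the entry layout are hypothetical, and hash chaining is offered here as an example technique rather than as the method any specific system uses.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed placeholder hash for the first entry


def _digest(prev_hash, record):
    """SHA-256 over the previous hash plus a canonical JSON form of the record."""
    body = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(chain, record):
    """Append `record` to `chain`, linking it to the previous entry's hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry = {
        "record": record,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, record),
    }
    chain.append(entry)
    return entry


def verify_chain(chain):
    """Return True only if every link and every stored hash still matches."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


# Example usage with made-up records.
trail = []
append_entry(trail, {"evaluator_id": "eval-01", "item_id": "utt-001", "score": 4})
append_entry(trail, {"evaluator_id": "eval-02", "item_id": "utt-001", "score": 2})
print(verify_chain(trail))
```

A check like this detects later edits to stored records but does not prevent them, so it complements access control and regular reviews rather than replacing them.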
