Have you ever stopped to think about how your voice magically turns into written words or how your smartphone recognizes your unique vocal identity? It's mind-boggling, right?

Imagine this: you're sitting in a room, jotting down notes for an important presentation. Instead of tediously typing every word, wouldn't it be incredible if you could simply speak your thoughts and watch as they appear on the screen before your eyes? That's the power of speech recognition! It's like having your own personal stenographer, effortlessly transcribing your spoken words into written text.

But hold on, that's not all! Have you ever seen those spy movies where a secret agent's voice unlocks a high-tech vault? Well, that's voice recognition in action! It's like having a superpower that allows you to open doors, access your digital devices, and even perform secure transactions, all with the sound of your voice.

Now, you might be wondering, what's the difference between speech recognition and voice recognition? Aren't they the same thing? Ah, my curious friend, not quite! While these terms are often used interchangeably, they actually refer to distinct technologies with their own unique abilities.

In this captivating article, we'll unravel the secrets behind speech recognition and voice recognition, exploring their real-life applications, benefits, and most importantly, the intriguing differences between them.

Understanding Speech Recognition

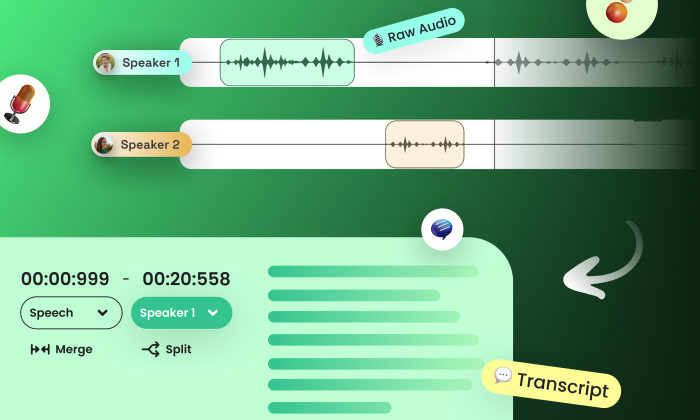

Speech recognition, also known as automatic speech recognition (ASR), is a technological marvel that enables computers to convert spoken language into written text. It analyzes audio input, extracts the spoken words, and transforms them into written form. Speech recognition systems rely on sophisticated algorithms and language models to achieve accurate transcription.

How does Speech Recognition Work?

The workings of speech recognition are quite fascinating. Let's take a closer look at the underlying process:

Audio Input: The speech recognition system receives audio input, typically through a microphone or other audio devices.

Pre-processing: The audio input undergoes pre-processing to eliminate background noise, enhance clarity, and normalize the audio signal.

Acoustic Modeling: The system employs acoustic modeling techniques to analyze and interpret the audio input. This involves breaking down the speech into smaller units known as phonemes and mapping them to corresponding linguistic representations.

Language Modeling: Language models use statistical patterns and grammar rules to predict likely word sequences and correct potential transcription errors. This improves the accuracy of the converted text and how well it fits the context.

Decoding: In the decoding step, the system combines the scores from its acoustic and language models and searches for the most likely transcription of the audio input.

Text Output: Finally, the speech recognition system generates the written output, providing an accurate representation of the spoken words.
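
The decoding step described above can be illustrated with a toy example. This is a minimal sketch, not how any particular speech engine works: the candidate transcriptions, their probabilities, and the `lm_weight` parameter are all invented for illustration. It shows the general idea of adding an acoustic score to a language-model score and keeping the highest-scoring hypothesis.

```python
import math

# Hypothetical candidate transcriptions for one utterance.
# "acoustic" is how well the candidate matches the audio,
# "language" is how plausible the word sequence is.
# All numbers are invented for illustration.
CANDIDATES = {
    "recognize speech": {"acoustic": 0.40, "language": 0.30},
    "wreck a nice beach": {"acoustic": 0.45, "language": 0.01},
    "recognise peach": {"acoustic": 0.15, "language": 0.02},
}


def decode(candidates, lm_weight=1.0):
    """Return the candidate text with the highest combined log score."""
    def score(item):
        _, probs = item
        acoustic = math.log(probs["acoustic"])
        language = math.log(probs["language"])
        return acoustic + lm_weight * language

    best_text, _ = max(candidates.items(), key=score)
    return best_text


print(decode(CANDIDATES))                 # language model included
print(decode(CANDIDATES, lm_weight=0.0))  # acoustic score only
```

With the language model included, the plausible phrase "recognize speech" wins. With `lm_weight=0.0`, the acoustically closest but unlikely phrase "wreck a nice beach" wins, which is the kind of error the language model is there to prevent.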

How is the Speech Recognition Model Trained?

Speech recognition models are trained on speech datasets that typically consist of paired audio and text samples: for each audio segment, there is a corresponding transcription of the spoken words. The dataset needs to be diverse and representative of real-world speech patterns, encompassing different speakers, accents, languages, and recording conditions. Here's an overview of the training process for a speech recognition model using such a dataset:

Data Collection: Large amounts of audio data are collected from various sources, such as recorded speeches, interviews, lectures, custom collections, or publicly available datasets. The dataset should cover a wide range of topics and speakers to ensure generalization.

Data Preprocessing: The collected audio data undergoes preprocessing steps to enhance its quality and normalize the audio signals. This may involve removing background noise, equalizing volume levels, and applying filters to improve clarity.

Transcription: Trained transcribers listen to the audio samples and manually transcribe the spoken words into written text. The transcriptions are carefully aligned with the corresponding audio segments to create the paired audio-text dataset.

Speech recognition models typically require large amounts of training data. For instance, OpenAI's Whisper ASR system was trained on 680,000 hours of multilingual and multitask supervised data collected from the web, one of the largest supervised speech training sets reported at the time of its release.

Dataset Split: The dataset is typically divided into three subsets: training, validation, and testing. The training subset, which is the largest, is used to train the model. The validation subset is used during training to monitor the model's performance and adjust hyperparameters. The testing subset is used to evaluate the final model's performance.

Feature Extraction: From the audio samples, various acoustic features are extracted. These features capture important characteristics of the audio, such as frequency content, duration, and intensity. Common features include Mel-frequency cepstral coefficients (MFCCs), spectrograms, and pitch information.

Language Modeling: Language models are trained on large textual datasets to learn statistical patterns, grammar rules, and linguistic contexts. These models provide additional contextual information during the training of the speech recognition model, improving its accuracy and its handling of context.

Training the Model: The speech recognition model is trained using the paired audio-text dataset and the extracted acoustic features. The model learns to associate the acoustic patterns with the corresponding textual representations. This involves using algorithms such as deep neural networks, recurrent neural networks (RNNs), or transformer-based models, which are trained using gradient-based optimization techniques.

Iterative Training: The model is trained iteratively, where batches of data are fed to the model, and the model's parameters are adjusted based on the prediction errors. The training process aims to minimize the difference between the predicted transcriptions and the ground truth transcriptions in the dataset.

Hyperparameter Tuning: During training, hyperparameters (parameters that control the learning process) are adjusted to optimize the model's performance. This includes parameters related to network architecture, learning rate, regularization techniques, and optimization algorithms.

Validation and Testing: Throughout the training process, the model's performance is evaluated on the validation subset to monitor its progress and prevent overfitting. Once training is complete, the final model is evaluated on the testing subset to assess its accuracy, word error rate, and other relevant metrics.

Fine-tuning and Optimization: After the initial training, the model can undergo further fine-tuning and optimization to improve its performance. This may involve incorporating additional training data, adjusting model architecture, or using advanced optimization techniques.
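
The dataset split from the steps above can be sketched in a few lines. This is a minimal illustration under assumed values: the 80/10/10 proportions, the `split_dataset` helper, and the file names are hypothetical and not taken from any specific toolkit.

```python
import random


def split_dataset(samples, train_frac=0.8, val_frac=0.1, seed=0):
    """Shuffle samples and split them into train, validation, and test lists."""
    shuffled = list(samples)
    # A fixed seed keeps the split reproducible between runs.
    random.Random(seed).shuffle(shuffled)
    n_train = int(len(shuffled) * train_frac)
    n_val = int(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    return train, val, test


# Hypothetical paired samples: (audio file name, transcript).
pairs = [(f"clip_{i:03d}.wav", f"transcript {i}") for i in range(100)]
train, val, test = split_dataset(pairs)
print(len(train), len(val), len(test))
```

In practice, teams often split by speaker rather than by clip, so that the same voice does not appear in both the training and testing subsets. That refinement is left out here to keep the sketch short.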

By training on a diverse and extensive dataset of paired audio and text samples, speech recognition models can learn to accurately transcribe spoken words, enabling applications such as transcription services, virtual assistants, and more. The training process involves leveraging the power of machine learning algorithms and optimizing model parameters to achieve high accuracy and robustness in recognizing and transcribing speech.
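
Word error rate, one of the evaluation metrics mentioned above, is commonly computed as the word-level edit distance between the reference transcript and the model's output, divided by the number of reference words. The following is a small sketch of that calculation. The function name and the example sentences are illustrative, and real evaluations usually normalize casing and punctuation first.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] is the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


print(word_error_rate("the cat sat on the mat", "the cat sat on mat"))
```

In this example the hypothesis drops one of the six reference words, so the word error rate is 1/6.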

Applications of Speech Recognition

Speech recognition technology has revolutionized numerous industries, transforming the way we interact with devices and systems. Here are some prominent applications:

Transcription Services

Speech recognition has streamlined the transcription process, making it faster and more efficient. It has become an invaluable tool for medical, legal, and business professionals, saving hours of manual effort.

Voice Assistants

Virtual assistants like Apple's Siri, Amazon's Alexa, and Google Assistant employ speech recognition to understand and respond to user commands. They can perform tasks, answer queries, and control various devices using voice commands.

Accessibility

Speech recognition has significantly improved accessibility for individuals with disabilities. It allows people with motor impairments or visual impairments to interact with computers, smartphones, and other devices using their voices.

Call Centers

Many call centers leverage speech recognition technology to enhance customer service. It enables automated call routing, voice authentication, and real-time speech-to-text conversion for call transcripts.

Dictation Software

Speech recognition has made dictation effortless and accurate. Professionals in various fields, such as writers, journalists, and students, benefit from dictation software that converts spoken words into written text.

Benefits of Speech Recognition

Speech recognition offers several advantages that make it a powerful technology:

Increased Productivity

Speech recognition enables faster and more efficient data entry, transcription, and command execution, enhancing productivity in various domains.

Accessibility and Inclusivity

By allowing individuals with disabilities to interact with devices using their voices, speech recognition promotes inclusivity and equal access to technology.

Hands-Free Operation

With speech recognition, users can perform tasks without the need for manual input, making it ideal for situations where hands-free operation is necessary or convenient.

Multilingual Support

Advanced speech recognition systems can recognize and transcribe multiple languages, facilitating communication in diverse linguistic contexts.

Understanding Voice Recognition

Voice recognition, also known as speaker recognition or voice authentication, is a technology that focuses on identifying and verifying the unique characteristics of an individual's voice. It aims to determine the identity of the speaker, rather than convert speech into text.

How does Voice Recognition Work?

Voice recognition systems employ sophisticated algorithms and machine learning techniques to analyze various vocal features, such as pitch, tone, rhythm, and pronunciation. Let's explore the process:

Enrollment: In the enrollment phase, the system records a sample of the user's voice, capturing their unique vocal characteristics.

Feature Extraction: The system extracts specific features from the recorded voice sample, analyzing factors like pitch, speech rate, and spectral patterns.

Voiceprint Creation: Using the extracted features, the system creates a unique voiceprint, which serves as a reference for future authentication.

Authentication: When a user attempts to authenticate, their voice is compared to the stored voiceprint. The system assesses the similarity and determines whether the speaker's identity matches the enrolled voiceprint.

Decision: Based on the comparison results, the voice recognition system makes a decision, either granting or denying access.
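
The enrollment, comparison, and decision steps above can be sketched with plain feature vectors. This is a simplified illustration, not a production method: the four-dimensional vectors, the averaging used to build the voiceprint, the cosine similarity measure, and the 0.9 threshold are all assumptions chosen to keep the example small.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length feature vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def enroll(sample_vectors):
    """Build a voiceprint by averaging the enrollment feature vectors."""
    count = len(sample_vectors)
    return [sum(values) / count for values in zip(*sample_vectors)]


def verify(voiceprint, attempt_vector, threshold=0.9):
    """Accept the attempt if it is similar enough to the stored voiceprint."""
    return cosine_similarity(voiceprint, attempt_vector) >= threshold


# Hypothetical feature vectors; real systems use far richer features.
enrollment_samples = [
    [0.9, 0.1, 0.4, 0.2],
    [1.0, 0.2, 0.5, 0.1],
    [0.8, 0.0, 0.3, 0.3],
]
voiceprint = enroll(enrollment_samples)

same_speaker = [0.95, 0.1, 0.45, 0.2]
other_speaker = [0.1, 0.9, 0.2, 0.8]

print(verify(voiceprint, same_speaker))   # attempt close to the voiceprint
print(verify(voiceprint, other_speaker))  # attempt far from the voiceprint
```

The first attempt points in nearly the same direction as the stored voiceprint and is accepted, while the second differs strongly and is rejected.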

How is the Voice Recognition Model Trained?

Training a voice recognition model requires a dataset that encompasses audio samples from different individuals, capturing the unique vocal characteristics that differentiate one person from another. The dataset used for training a voice recognition model typically consists of the following components:

Enrolled Voice Samples: The dataset includes voice samples from individuals who voluntarily enroll in the system. These samples serve as the reference or template for each individual's voiceprint. The enrollment process involves recording a set of voice samples from each person.

Test Voice Samples: Along with enrolled voice samples, the dataset also includes separate voice samples for testing and evaluation purposes. These samples are used to assess the model's accuracy and performance in recognizing and verifying the identity of speakers.

The training process for a voice recognition model involves the following steps:

Feature Extraction: From the enrolled voice samples, specific features are extracted to capture the unique vocal characteristics of each individual. These features may include pitch, speech rate, formant frequencies, spectral patterns, and other relevant acoustic properties.

Voiceprint Creation: Using the extracted features, a voiceprint or voice template is created for each individual. The voiceprint is a compact representation of that individual's distinctive voice characteristics.

Training the Model: The model is trained using the enrolled voiceprints as the training data. The model learns to analyze and identify the distinctive features that differentiate one voiceprint from another. Various machine learning techniques, such as neural networks or Gaussian mixture models, are commonly employed to train the model.

Evaluation and Optimization: After the initial training, the model is evaluated using the test voice samples to assess its accuracy and performance. If the model does not meet the desired performance criteria, it undergoes iterative refinement and optimization. This process may involve adjusting model parameters, incorporating additional training data, or implementing advanced algorithms for better feature extraction and matching.

Decision Threshold Setting: In voice recognition, a decision threshold is set to determine whether a given voice sample matches an enrolled voiceprint. This threshold controls the trade-off between false acceptances (when an impostor is incorrectly accepted) and false rejections (when a genuine user is incorrectly rejected). The threshold is typically adjusted to balance security and usability based on the specific application requirements.
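
The trade-off controlled by the decision threshold can be made concrete with a short sketch. The similarity scores below are invented for illustration, and `error_rates` is a hypothetical helper. It counts how many impostor attempts would be accepted and how many genuine attempts would be rejected at a given threshold.

```python
def error_rates(genuine_scores, impostor_scores, threshold):
    """Return (false acceptance rate, false rejection rate) at a threshold."""
    # An impostor scoring at or above the threshold is wrongly accepted.
    false_accepts = sum(score >= threshold for score in impostor_scores)
    # A genuine user scoring below the threshold is wrongly rejected.
    false_rejects = sum(score < threshold for score in genuine_scores)
    far = false_accepts / len(impostor_scores)
    frr = false_rejects / len(genuine_scores)
    return far, frr


# Hypothetical similarity scores, invented for illustration.
genuine = [0.95, 0.91, 0.88, 0.97, 0.79]
impostor = [0.40, 0.62, 0.81, 0.55, 0.30]

for threshold in (0.6, 0.8, 0.9):
    far, frr = error_rates(genuine, impostor, threshold)
    print(f"threshold={threshold:.1f}  FAR={far:.2f}  FRR={frr:.2f}")
```

On this toy data, raising the threshold from 0.6 to 0.9 lowers the false acceptance rate from 0.40 to 0.00 while raising the false rejection rate from 0.00 to 0.40, which is the security-versus-usability balance described above.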

By training the voice recognition model on a dataset that encompasses diverse voice samples and using sophisticated algorithms, the model learns to accurately identify and verify individuals based on their unique vocal characteristics. The iterative refinement and optimization process ensures that the model achieves higher accuracy and robustness in real-world scenarios.

Applications of Voice Recognition

Voice recognition technology finds numerous applications in our daily lives. Here are some notable examples:

Security Systems

Voice recognition enhances security by providing an additional layer of authentication. It is employed in biometric systems, access control, and voice-based password systems.

Personalized Services

Voice recognition enables personalized services in various domains. For instance, smart homes can recognize residents' voices and customize settings accordingly.

Automotive Industry

Voice recognition is increasingly integrated into cars, allowing drivers to control various functions hands-free. It enhances safety and convenience on the road.

Voice Banking

Some financial institutions utilize voice recognition for secure and convenient banking transactions. Customers can access their accounts and make transactions using their voices.

Forensic Investigations

Voice recognition assists forensic investigators in analyzing recorded voices, identifying suspects, and providing evidence in criminal cases.

Benefits of Voice Recognition

Voice recognition offers several advantages that make it a valuable technology:

Strong Authentication

Voice recognition provides a robust authentication mechanism because each person's voiceprint is distinctive, which makes it difficult to forge or replicate.

Convenience and Speed

With voice recognition, users can authenticate themselves or perform tasks quickly and conveniently using their voices, eliminating the need for manual input.

Natural Interaction

Voice-based interfaces facilitate natural and intuitive interaction with devices, creating a more user-friendly experience.

Versatility

Voice recognition can be integrated into various devices and systems, offering flexibility and adaptability across different applications.

Speech Recognition vs. Voice Recognition: The Key Differences

While speech recognition and voice recognition are closely related, there are significant differences between the two technologies. Let's explore the key distinctions:

Purpose

Speech recognition focuses on converting spoken language into written text, enabling transcription and text-based analysis. In contrast, voice recognition aims to identify and authenticate individuals based on their unique vocal characteristics.

Output

Speech recognition generates written text as its output, facilitating transcription, data entry, and text-based analysis. Voice recognition, on the other hand, produces an authentication decision or performs actions based on the recognized voice.

Application

Speech recognition finds applications in transcription services, virtual assistants, accessibility tools, and dictation software. Voice recognition is utilized for security systems, personalized services, automotive applications, and voice banking.

Technology

Speech recognition heavily relies on natural language processing, acoustic modeling, and language modeling techniques. Voice recognition relies on signal processing, feature extraction, and speaker verification algorithms to identify and authenticate individuals based on their unique vocal characteristics.

Accuracy Requirements

Speech recognition systems strive for high accuracy in transcribing spoken language. However, they can tolerate some errors as long as the overall meaning is preserved. In contrast, voice recognition systems require high accuracy in identifying the speaker's identity to ensure robust authentication.

Training and Enrollment

Speech recognition systems typically do not require specific training or enrollment for users. They can adapt to different voices and accents. Voice recognition systems, on the other hand, require users to enroll their voices initially to create a unique voiceprint for authentication.

FutureBeeAI is Here to Help You With Both!

Both speech recognition and voice recognition are advanced technologies with the ability to transform numerous industries across various applications. If you are involved in the development of either of these technologies, FutureBeeAI can assist you in obtaining a wide range of diverse and unbiased speech datasets in any language of your choice!

Feel free to explore our datastore, where you can find pre-built speech datasets for general conversations, call center interactions, or scripted monologues in over 40 languages. Let's further discuss your specific requirements for a training data pipeline.
