Did you know that the average person spends six minutes talking with an agent during a call center conversation? And the average call center takes 4,400 calls a month, about 1,000 a week, and misses 48.

Call centers handle a massive volume of interactions, but the real struggle is resolving each one to the customer’s satisfaction. Call center agents work around the clock to address customers’ queries before they hang up because of a long waiting queue.

Customers have become extremely demanding: many won’t accept a wait of more than 46 to 75 seconds for a business representative to answer.

This is where the major challenge for a call center lies. Organizations are constantly trying to answer as many calls as possible and capture as much data as they can.

Now, imagine what a call center could do if it stored and analyzed this customer interaction data and planned its strategy around the results. The possibilities are endless!

Call centers would be able to capture valuable insights into customer preferences, activity, and behavior. Further, based on the evaluation, they can improve the customer experience.

But do you know which technologies are really changing the way we can address this problem? They are conversational AI and automatic speech recognition (ASR)! Before we explore the impact of ASR in contact centers in detail, let us take a brief look at conversational AI.

The Obvious Inclination Towards Conversational AI

As of 2023, many commercial sectors have started using artificial intelligence and machine learning to elevate customer service and call center operations. The main draw is the consistent customer experience and satisfaction these technologies offer, which in turn helps organizations improve their operations.

Amidst this adoption of AI, one aspect stands out—Conversational AI. It's cutting-edge technology that goes beyond traditional customer interactions, enabling businesses to engage with their customers in a remarkably intuitive and human-like manner. By harnessing the power of automatic speech recognition (ASR), natural language processing (NLP), and machine learning, conversational AI offers personalized and efficient support, paving the way for extraordinary customer experiences.

Conversational AI has found numerous use cases within call centers, revolutionizing the way customer interactions are handled. Over the past few years, we’ve all seen “Let’s chat!” buttons on almost every website. These chatbots and virtual assistants powered by conversational AI are deployed to handle a wide range of customer inquiries, from simple queries to complex troubleshooting. These AI-powered agents can swiftly provide information, guide customers through processes, and even facilitate transactions, all with a human-like touch. They operate 24/7, ensuring round-the-clock support and reducing customer wait times.

But when it comes to assisting users with voice interactions, a key player enters the picture: automatic speech recognition (ASR). ASR technology bridges the gap between customers' spoken language and the capabilities of conversational AI systems. Let's now examine how conversational AI responds to any customer inquiry and how ASR plays a key role in this process.

AI-enabled Customer Service Flow and the Importance of ASR in It

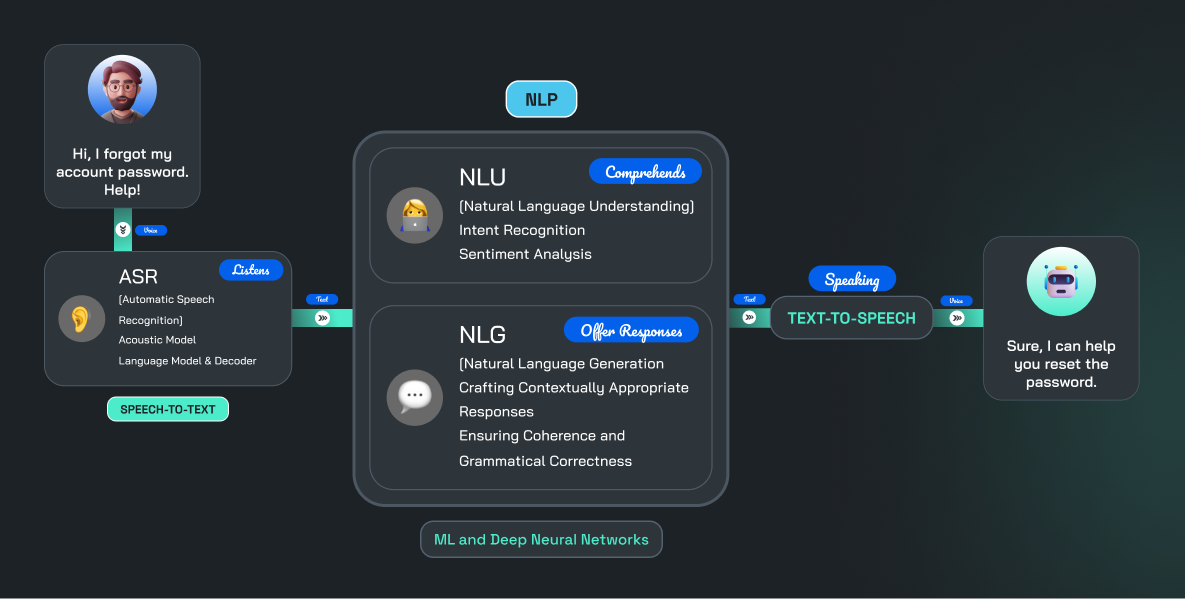

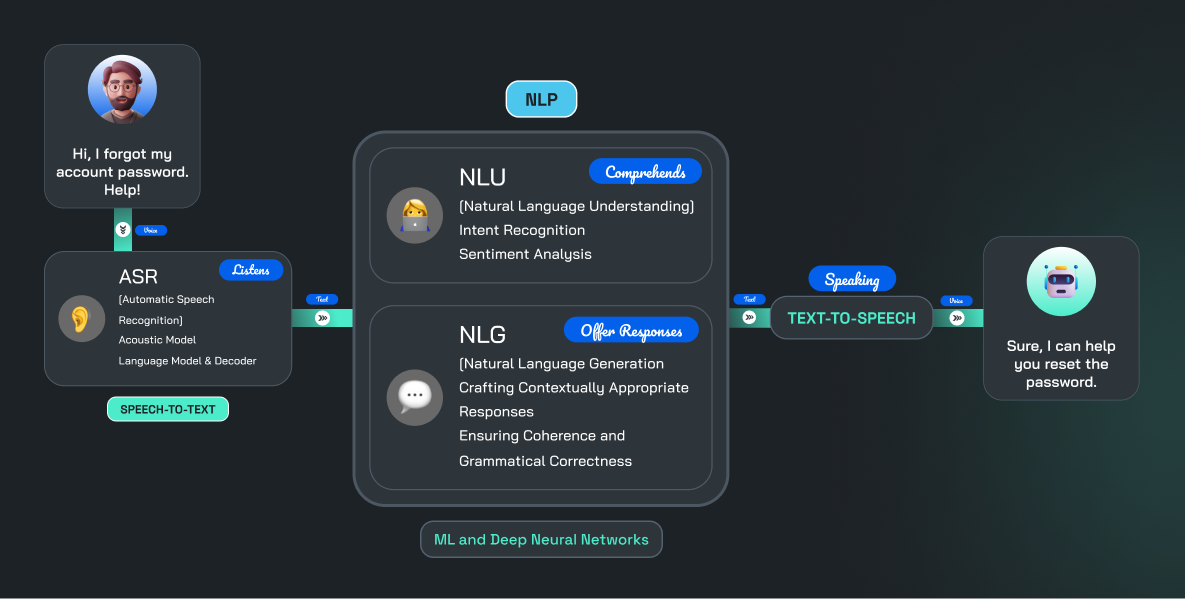

The flow of AI-enabled customer service begins when a customer initiates a voice interaction, whether through a phone call or a voice-enabled device. In this initial stage, automatic speech recognition (ASR) plays a vital role by accurately transcribing the customer's spoken words into written text. This transcription step enables subsequent AI systems to effectively process and understand the customer's intent.

What is Automatic Speech Recognition (ASR)?💡

ASR, or Automatic Speech Recognition, is an AI technology that converts spoken language into written text.

Once the customer's voice input is transformed into text format, the next stage involves natural language processing (NLP), which encompasses natural language understanding and generation algorithms. These components analyze the transcribed text, extracting the customer's intent, context, and specific requests. They comprehend the customer's query and gather the necessary information to generate relevant and accurate responses. Subsequently, a text-to-speech model is employed to convert the generated response back into voice format for delivery to the customer.

ASR is a crucial element within the customer assistance model, alongside other essential components. It plays a pivotal role in transforming audio data, often referred to as 'dark data,' into meaningful information by converting speech into text. This conversion opens up a world of possibilities, as the transcribed text can be further processed and analyzed in various ways, enabling meaningful operations and insights. ASR acts as the gateway, allowing businesses to extract valuable insights from customer interactions that were previously inaccessible.

By converting spoken language into written text, ASR enables the customer service system to leverage the power of NLP, understanding customer queries, and generating appropriate responses. It empowers conversational AI models to effectively engage with customers, provide accurate assistance, and deliver seamless voice interactions. In this way, ASR serves as a crucial foundation for the entire conversational AI model, unlocking the potential of voice-based customer service and enhancing the overall customer experience.
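
The flow described above (speech to text, text to intent, reply back to speech) can be sketched as three chained stages. This is only an illustration under stated assumptions: `transcribe`, `understand`, and `synthesize` are hypothetical stand-ins for an ASR model, an NLP component, and a text-to-speech model, and the "audio" is faked as UTF-8 bytes so the example runs without any speech library.

```python
from dataclasses import dataclass


@dataclass
class Intent:
    name: str
    reply: str


def transcribe(audio: bytes) -> str:
    """Hypothetical ASR stage: a real system would run a speech model here."""
    # For illustration only, the "audio" is plain UTF-8 text in disguise.
    return audio.decode("utf-8").lower().strip()


def understand(text: str) -> Intent:
    """Hypothetical NLP stage: map the transcript to an intent and a reply."""
    if "bill" in text or "statement" in text:
        return Intent("billing", "I can help with billing. Please say your card number.")
    return Intent("unknown", "Sorry, could you rephrase that?")


def synthesize(reply: str) -> bytes:
    """Hypothetical TTS stage: a real system would return audio samples."""
    return reply.encode("utf-8")


def handle_turn(audio: bytes) -> bytes:
    """One conversational turn: speech -> text -> intent -> reply -> speech."""
    text = transcribe(audio)
    intent = understand(text)
    return synthesize(intent.reply)


response = handle_turn(b"I have a question about my bill")
print(response.decode("utf-8"))
```

Because every later stage consumes the transcript, a transcription error in the first stage propagates through the whole turn, which is why ASR accuracy matters so much in this flow.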

Modes of AI-enabled Call Center Conversation:

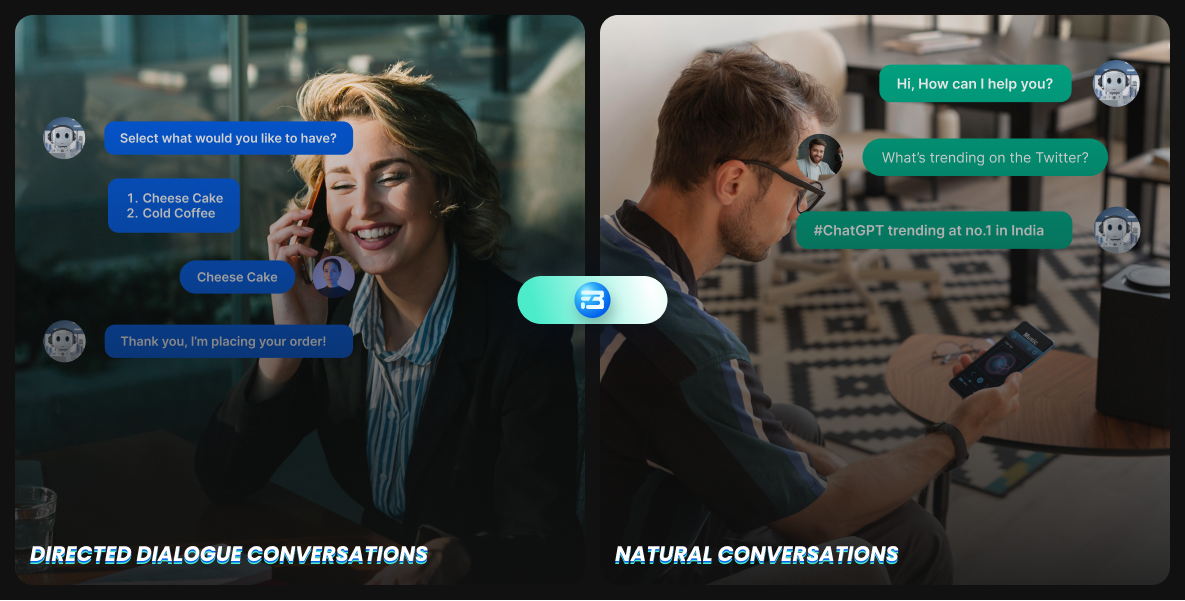

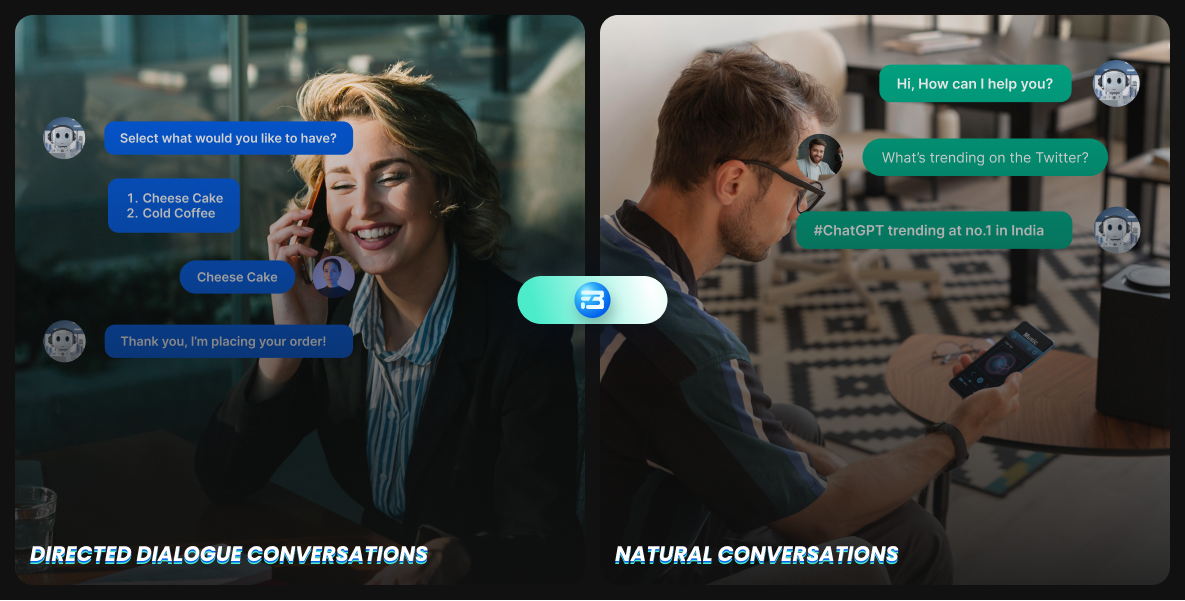

In general, there are two types of conversations that happen after implementing conversational AI in the call center. Let’s explore how ASR is an integral part of each.

1. Directed dialogue conversations: In this case, users connect with the system, and the machine asks them to respond using a specific word or words based on the request made.

Here is what a directed dialogue call center conversation will look like:

VoiceBot: How can I assist you with your card? For billing inquiries, say "billing."

Customer: Billing.

VoiceBot: Please provide your 16-digit card number.

Customer: 1234 5678 9012 3456.

VoiceBot: Your latest statement shows a balance of $250.32, due on June 15th. The minimum payment amount is $25.50. Is there anything else I can help with?

Customer: No, thank you.

VoiceBot: You're welcome! Have a great day!

Along with answering customers’ basic queries, directed dialogue conversations can be really helpful for the smart routing of calls. For this, the ASR model transcribes the customer’s voice input, and that text is fed to the NLP model. The NLP model can then identify keywords or specific phrases that help determine the nature of the inquiry. This allows for automated and intelligent call routing, ensuring that customers are quickly connected to the appropriate department or agent with the relevant expertise, minimizing transfer times, and improving overall call center efficiency.
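
Keyword-based routing of a transcript can be sketched as follows. This is a minimal illustration, not a production router: the `ROUTES` table, the department names, and the `general` fallback queue are all assumptions made for the example, and a real NLP model would do far more than count keywords.

```python
import re

# Hypothetical keyword-to-department table; a real deployment would define its own.
ROUTES = {
    "billing": {"bill", "billing", "statement", "payment", "refund"},
    "technical_support": {"error", "broken", "outage", "password", "login"},
    "sales": {"upgrade", "plan", "pricing", "buy"},
}
DEFAULT_QUEUE = "general"


def route_call(transcript: str) -> str:
    """Pick the department whose keywords appear most often in the transcript."""
    words = re.findall(r"[a-z']+", transcript.lower())
    scores = {
        department: sum(word in keywords for word in words)
        for department, keywords in ROUTES.items()
    }
    best = max(scores, key=scores.get)
    # Fall back to a general queue when no keyword matched at all.
    return best if scores[best] > 0 else DEFAULT_QUEUE
```

For example, `route_call("I was charged twice on my last statement and want a refund")` returns `"billing"`, while a transcript with no known keywords goes to the general queue.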

2. Natural Conversations: Natural conversations refer to interactions between customers and automated systems that mimic human-like conversations, allowing for more free-flowing and intuitive communication.

In natural conversation, people often exhibit speech phenomena such as hesitations, repetitions, filled pauses (like "um" or "uh"), and false starts.

Natural Conversations enable customers to engage with the automated system using their own words and conversational style, rather than being constrained by pre-determined menu options or specific keywords.

Here is what a natural conversation may look like:

VoiceBot: Welcome to XYZ Travel Agency. How can I assist you today?

Customer: I'm planning a tropical vacation. Any recommendations?

VoiceBot: Sure! Hawaii, the Maldives, and Costa Rica are popular choices. Any preference?

Customer: Hawaii. Best time to visit and must-see attractions?

VoiceBot: April to September offers the best weather. Must-see attractions include Waikiki Beach, Na Pali Coast, and the Big Island's volcanoes.

Customer: Thank you!

In natural conversations, ASR is involved in every turn, continuously transcribing the customer’s voice input and passing the transcribed text to the NLP model so it can generate an appropriate response.
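
The hesitations, repetitions, and filled pauses mentioned earlier usually end up in the raw transcript. One simple way to tidy them before the text reaches the NLP model is sketched below. The list of filled pauses is an assumption for illustration, and collapsing repeats this naively would also merge legitimate doubles such as "had had".

```python
import re

# Assumed list of filled pauses; real systems tune this per language and domain.
FILLED_PAUSES = {"um", "uh", "er", "hmm"}


def clean_transcript(text: str) -> str:
    """Drop filled pauses and collapse immediate word repetitions."""
    tokens = re.findall(r"[\w']+", text.lower())
    cleaned = []
    for token in tokens:
        if token in FILLED_PAUSES:
            continue
        if cleaned and cleaned[-1] == token:
            continue  # skip a stuttered repeat such as "to to"
        cleaned.append(token)
    return " ".join(cleaned)


print(clean_transcript("Um, I I want to, uh, book a a flight to to Hawaii"))
```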

Now let’s explore various use cases of ASR in call centers and expand our understanding of how ASR is helping call centers.

How Is ASR Helping Call Centers?

Conflict Resolution

In busy call centers, conflicts and customer concerns are bound to happen. Imagine this situation: an upset customer contacts a call center about a recent product issue. Emotions are running high, and finding a successful resolution becomes crucial. This is where automatic speech recognition (ASR) technology comes in, providing powerful tools to help call centers navigate and resolve conflicts effectively.

With ASR, the customer's heated conversation is transcribed accurately in real time. The transcript captures the words spoken and, when paired with sentiment analysis, can also surface the tone and emotions conveyed during the interaction. This comprehensive record of the conversation allows call center agents to understand the underlying issues more clearly. Armed with this valuable transcription, agents can respond empathetically and address the customer's concerns more effectively, taking proactive steps toward conflict resolution.

But the impact of ASR doesn't stop there. The transcriptions generated by ASR serve as concrete documentation during dispute resolution processes. They provide an objective account of the customer's complaints and the actions taken to resolve the conflict. This invaluable resource helps establish clarity, ensure everyone involved is on the same page, and foster a sense of accountability.

Moreover, ASR supports call center supervisors and quality assurance teams in monitoring and evaluating conflict resolution interactions. By reviewing the transcriptions, supervisors can offer targeted feedback and guidance to agents, improving their conflict resolution skills and promoting ongoing improvement across the call center.

Call Transcription

ASR ensures accurate and efficient transcriptions of customer conversations, eliminating manual transcription. This saves time, reduces errors, and allows call centers to process and analyze more call data. Transcribed text can be stored, organized, and analyzed for valuable insights. Call centers can identify patterns, trends, and customer preferences, enabling data-driven decisions, operational optimization, and improved customer service strategies.
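
Once calls are stored as text, spotting patterns can start with something as simple as counting which terms show up across calls. The sketch below is a rough illustration only: the stop-word list is a tiny assumed sample, and a real analysis would use proper text processing.

```python
from collections import Counter
import re

# Assumed stop-word list, kept tiny for illustration.
STOP_WORDS = {"the", "a", "an", "my", "i", "is", "to", "and", "of", "on", "it", "was", "for"}


def top_terms(transcripts: list[str], n: int = 3) -> list[tuple[str, int]]:
    """Count in how many calls each term appears and return the n most common."""
    counts = Counter()
    for transcript in transcripts:
        # A set is used so that each call counts a term at most once.
        words = set(re.findall(r"[a-z']+", transcript.lower())) - STOP_WORDS
        counts.update(words)
    return counts.most_common(n)


calls = [
    "My refund is late",
    "I want a refund for the late delivery",
    "The delivery was fine",
]
print(top_terms(calls))
```

Counting per call rather than per word keeps one long, repetitive call from dominating the results.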

Compliance with the Regulations

ASR technology is valuable for maintaining compliance in call center operations. By using ASR, call centers can automatically transcribe real-time conversations between agents and customers. These transcriptions create detailed logs of interactions, enabling easy search, review, and analysis of customer calls to ensure compliance with regulations and internal policies.

These transcriptions also serve as a comprehensive audit trail for compliance purposes. The high accuracy of capturing conversation details helps call centers to monitor protocol violations or mishandling of customer information. It provides an efficient way to monitor calls and identify potential compliance issues.
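
A basic compliance check over a transcript might look like the sketch below. The phrase lists are hypothetical examples, not actual regulatory requirements, and exact substring matching is a deliberate simplification.

```python
from dataclasses import dataclass

# Hypothetical rules: phrases an agent must say, and phrases that must not appear.
REQUIRED_PHRASES = ["this call may be recorded"]
PROHIBITED_PHRASES = ["guaranteed returns", "read me your full password"]


@dataclass
class ComplianceReport:
    missing_required: list[str]
    prohibited_found: list[str]

    @property
    def compliant(self) -> bool:
        return not self.missing_required and not self.prohibited_found


def check_transcript(transcript: str) -> ComplianceReport:
    """Report required phrases that are absent and prohibited phrases that are present."""
    text = " ".join(transcript.lower().split())  # normalize case and whitespace
    return ComplianceReport(
        missing_required=[p for p in REQUIRED_PHRASES if p not in text],
        prohibited_found=[p for p in PROHIBITED_PHRASES if p in text],
    )


report = check_transcript("Hello, this call may be recorded. How can I help?")
print(report.compliant)
```

Because ASR output can contain recognition errors, a flagged call in a scheme like this is best treated as a candidate for human review rather than a confirmed violation.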

Self-service

With ASR, customers no longer need to navigate endless menus or wait on hold. They can just speak their query, and ASR converts it into written text, making self-service effortless.

ASR-powered self-service systems provide prompt and accurate responses, eliminating long pauses and frustrating delays. This reduces the need for human intervention, allowing call center agents to focus on complex customer issues.

ASR transforms routine queries into autonomous self-service interactions. Customers can easily find solutions and access information, while agents handle specialized concerns.

ASR adds convenience and excitement to self-service, offering voice interactions, rapid responses, and customer independence. It creates a delightful journey that keeps customers engaged and satisfied.

Ensures Call Etiquette

Repeated distress calls from customers can strain the relationship between agents and customers. Implementing automatic speech recognition (ASR) allows for the monitoring and recording of every interaction, helping identify areas that need improvement.

ASR technology helps identify instances where agents deviate from scripts or use inappropriate language. This enables call centers to improve customer service quality and maintain a professional image, promoting proper call etiquette among staff members.
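
Script deviation and inappropriate language can both be checked on the agent's side of the transcript. The sketch below uses Python's standard `difflib` module to compare what was said against a scripted line. The greeting, the word list, and any threshold applied to the score are assumptions for illustration.

```python
from difflib import SequenceMatcher

# Hypothetical script line and word list, for illustration only.
SCRIPTED_GREETING = "thank you for calling how may i help you today"
INAPPROPRIATE_WORDS = {"stupid", "shut"}


def script_similarity(spoken: str, scripted: str = SCRIPTED_GREETING) -> float:
    """Return a 0..1 word-level similarity between the spoken and scripted line."""
    return SequenceMatcher(None, spoken.lower().split(), scripted.split()).ratio()


def flag_language(spoken: str) -> list[str]:
    """Return inappropriate words found in the agent's transcript, sorted."""
    words = {w.strip(".,!?") for w in spoken.lower().split()}
    return sorted(words & INAPPROPRIATE_WORDS)


print(script_similarity("Thank you for calling how may I help you today"))
print(flag_language("That is a stupid question!"))
```

A low similarity score only indicates that the wording differs from the script; whether that is a problem is a judgment for the quality assurance team.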

ASR has many other use cases in speech analytics and customer service. But it’s time to discuss some of the common challenges in building a robust ASR model for call centers.

Challenges in Building an Effective ASR Model for Call Centers

Building an effective automatic speech recognition model for call centers comes with its fair share of challenges. The ability to accurately transcribe and understand customer interactions is crucial for delivering exceptional customer service. However, call centers must navigate several obstacles to develop a robust ASR model tailored to their specific needs.

Diverse Accents and Speech Patterns

Building an effective Automatic Speech Recognition (ASR) model for call centers is a major challenge due to the diverse accents and speech patterns of customers. Call centers serve people from various regions, each with their own unique accents, dialects, and speech nuances. These differences in pronunciation, intonation, and rhythm make it difficult for ASR systems to accurately transcribe and understand customer interactions.

Accents can vary widely, not only between different countries but also within regions of the same country. They can be influenced by factors such as cultural background, native language, regional dialects, or individual speech habits. ASR models trained on a specific accent or dialect may struggle to accurately recognize and transcribe speech from customers with different accents.

Moreover, speech patterns can vary significantly among individuals. Some people speak quickly, while others have a slower pace. Some individuals may mumble or speak softly, making it more challenging for ASR systems to accurately capture their speech.
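
One common way to quantify how well a model copes with different accents and speaking styles is word error rate (WER): the word-level edit distance between a human reference transcript and the ASR output, divided by the number of reference words. The article does not name a metric, so treat this as an assumed choice. The sketch below is a plain dynamic-programming implementation.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.lower().split(), hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # previous[j] = edit distance between the ref words seen so far and hyp[:j]
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = previous[j - 1] + (ref_word != hyp_word)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1] / len(ref)


print(word_error_rate("please check my balance", "please check the balance"))
```

Computing this separately for each accent group or speaking style in a test set shows where a model trained on one kind of speech falls short on another.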

Industry-Specific Vocabulary and Terminology

Call centers operate in various sectors like finance, healthcare, retail, telecom, and more. Each industry has its own specific language with unique vocabulary, terms, acronyms, and jargon. These industry-specific terms might be unfamiliar to a regular speech recognition system, resulting in lower accuracy when transcribing and understanding customer interactions.

To train an accurate speech recognition model for industry-specific vocabulary, extensive and representative speech datasets are needed. These datasets should include the specialized language used in the target industry while covering a wide range of accents, speech patterns, and variations. However, acquiring such datasets can be challenging.

Noisy Environments

Call centers can be noisy due to various sources like agent conversations, office equipment, and external factors. Background noise can also come from the customer's side, since customers may be calling from anywhere. Therefore, there's a high chance of encountering background noise during customer service interactions.

This noise can greatly affect the accuracy of the ASR system, leading to transcription errors and overall performance issues. Generic ASR models may not be trained to handle speech data with background noise, making it difficult for them to understand speech in noisy environments.

Call Center-Specific Acoustics

Call centers have unique acoustics that can affect the accuracy of ASR systems. Factors like different headsets, varying microphone quality, and room setups contribute to the specific acoustics found in call center environments. To ensure optimal performance, the ASR model should be trained and optimized to handle the specific acoustics commonly encountered in call centers.

Limited Training Data Availability

Limited training data availability can be a very complex issue that hinders the process of building call center-specific ASR. Obtaining a diverse, representative, and high-quality dataset that captures the wide range of customer interactions in call centers requires significant time and resources.

Along with that, manually transcribing and labeling a large volume of speech data is a labor-intensive task. It involves skilled transcriptionists or domain experts to accurately annotate the data, which can be costly and time-consuming.

Additionally, call center interactions span different domains like banking, insurance, or healthcare, each with its own unique vocabulary and terminology. To build an ASR model that accurately recognizes and transcribes these specific interactions, a dataset containing an ample number of call center-specific conversations is necessary. However, limited access to such domain-specific data can make it challenging to train an ASR model that performs well in call center scenarios.

FutureBeeAI can be a One-stop Solution for you!

Overall, ASR is a powerful technology that can be used to improve the efficiency and productivity of call centers. ASR can help reduce costs by automating tasks that would otherwise be done by human agents, such as call routing, transcription, and keyword identification. It can also help improve customer service by providing customers with a more efficient and personalized experience. Few would disagree with that!

However, acquiring a representative and high-quality dataset for building a robust ASR model for call centers can be complex. FutureBeeAI is here to help. We offer ready-to-deploy call center speech datasets for major industries such as healthcare, retail, delivery and logistics, real estate, and more, in over 30 languages.

If your speech dataset requirements are different, we can assist you with a custom speech dataset collection in over 50 languages. Our global community of expert individuals can provide you with representative, unbiased, and close-to-real-life call center speech datasets.

With our time-proven SOPs and state-of-the-art tools, we can streamline your speech data collection process. Whether you're in the early stages of building your call center ASR model or further along, our speech data experts are ready to help you overcome any training data-related challenges. Get in touch with us and say goodbye to your training data problems.
