How much disagreement is acceptable in TTS evaluation?

Disagreement among evaluators in Text-to-Speech (TTS) evaluation is more than a minor inconvenience: it is a signal of how well your model performs and how closely it matches user expectations. Ignoring it can produce models that look perfect on paper but fail in real-world applications. To use what disagreement tells you, you need to understand its types and what each one implies.

Why Disagreement Matters

Disagreement serves as a lens for uncovering hidden issues in your TTS model. It can reveal whether your evaluation tasks are ambiguous, whether listener backgrounds differ (such as native versus non-native speakers), or whether the evaluation dimensions are inadequate. For instance, a model might sound natural to one group but robotic to another. Recognizing these differences lets you refine the evaluation process and reach more robust conclusions.

Types of Disagreement in TTS Evaluation

Expected Disagreement: Expected disagreement occurs when evaluators show some variance in their scores but agree on the overall outcome. Picture a comparison of two TTS voices that average 7.5 and 8.0 across listeners: individual scores differ, but listeners broadly agree on which voice is better. This level of variance typically indicates subjective preference rather than a fundamental model flaw. It reflects the inherent subjectivity of human perception and is generally acceptable.

Concerning Disagreement: This type arises when evaluators' opinions split significantly by subgroup, such as native versus non-native speakers. Imagine a model that native speakers rate highly but non-native speakers rate poorly. Such a split suggests that the model may not generalize across user demographics or meet the needs of diverse user segments. This kind of disagreement is a red flag that the model needs adjustment before it can serve a broader audience.
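One simple way to look for a subgroup split is to compare mean scores per listener group. The sketch below is only an illustration: the group labels, the 1 to 5 scale, and the function name `subgroup_gap` are assumptions, not part of any particular evaluation toolkit, and a real analysis would also check sample sizes and statistical significance.

```python
from statistics import mean


def subgroup_gap(ratings, group_a="native", group_b="non_native"):
    """Return (mean_a, mean_b, gap) for two listener subgroups.

    `ratings` is a list of (group_label, score) pairs. The labels and
    the rating scale are illustrative assumptions.
    """
    a = [score for group, score in ratings if group == group_a]
    b = [score for group, score in ratings if group == group_b]
    if not a or not b:
        raise ValueError("both subgroups need at least one rating")
    mean_a, mean_b = mean(a), mean(b)
    return mean_a, mean_b, mean_a - mean_b


# Hypothetical ratings for a single TTS voice.
ratings = [
    ("native", 4.5), ("native", 4.0), ("native", 4.5),
    ("non_native", 2.5), ("non_native", 3.0), ("non_native", 2.5),
]
mean_a, mean_b, gap = subgroup_gap(ratings)
print(f"native={mean_a:.2f} non_native={mean_b:.2f} gap={gap:.2f}")
```

A large gap between the two means is a prompt to investigate further, not proof of a problem on its own.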

Invalid Disagreement: Invalid disagreement is characterized by random patterns or low evaluator engagement. If scores vary with no discernible reason and evaluators cannot give a clear rationale for their ratings, the cause may be evaluator fatigue or a poorly designed task. Treat it as a sign to revisit task design and evaluator selection.
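A common heuristic for spotting random or disengaged raters is to correlate each rater's scores with the average of everyone else's. The following is a minimal sketch under stated assumptions: the rater IDs, the example scores, the helper names, and the 0.3 threshold are all hypothetical, and every rater is assumed to have scored the same items in the same order.

```python
from math import sqrt
from statistics import mean


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either input has no variance."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def flag_inconsistent_raters(scores, threshold=0.3):
    """Flag raters whose scores correlate weakly with the rest of the panel.

    `scores` maps rater ID -> list of scores, one per item, in the same
    item order for every rater. The threshold is an arbitrary assumption.
    """
    flagged = []
    for rater, own in scores.items():
        others = [s for r, s in scores.items() if r != rater]
        # Per-item mean of all other raters (leave-one-out panel mean).
        panel_mean = [mean(column) for column in zip(*others)]
        if pearson(own, panel_mean) < threshold:
            flagged.append(rater)
    return flagged


# Hypothetical panel: r4 rates in a pattern unrelated to the others.
scores = {
    "r1": [1, 2, 3, 4, 5],
    "r2": [2, 2, 3, 5, 5],
    "r3": [1, 3, 3, 4, 4],
    "r4": [4, 1, 5, 2, 3],
}
flagged = flag_inconsistent_raters(scores)
print(flagged)
```

A flagged rater is a candidate for review rather than automatic exclusion, since a consistent minority view can look like noise under this check.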

How to Handle Disagreement Effectively

Ensure that evaluation tasks are well-defined and evaluators know what constitutes quality. For example, when assessing naturalness, provide concrete examples and clear criteria for what "natural" should sound like.

Engage a mix of native and non-native speakers to uncover potential biases or weaknesses in your TTS model. This diversity can help create a more universally appealing product by highlighting areas that need improvement.

Regularly review evaluation data to pinpoint persistent disagreement patterns. Are there recurring issues with prosody, pronunciation, or emotional tone? Identifying these patterns enables targeted improvements in specific model aspects.
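To make that review concrete, you could rank evaluation dimensions by how much raters disagree within each item. The sketch below assumes a hypothetical record layout of (dimension, item ID, score) and uses the per-item standard deviation as the disagreement measure; both are illustrative choices rather than a standard.

```python
from collections import defaultdict
from statistics import mean, pstdev


def disagreement_by_dimension(records):
    """Rank dimensions by average per-item score spread.

    `records` is an iterable of (dimension, item_id, score) tuples.
    Returns a dict of dimension -> mean per-item standard deviation,
    ordered from most to least disagreement.
    """
    by_item = defaultdict(list)
    for dimension, item_id, score in records:
        by_item[(dimension, item_id)].append(score)

    spreads = defaultdict(list)
    for (dimension, _item_id), item_scores in by_item.items():
        spreads[dimension].append(pstdev(item_scores))

    ranked = sorted(
        ((dimension, mean(values)) for dimension, values in spreads.items()),
        key=lambda pair: pair[1],
        reverse=True,
    )
    return dict(ranked)


# Hypothetical ratings: two raters per utterance per dimension.
records = [
    ("prosody", "utt1", 2), ("prosody", "utt1", 5),
    ("prosody", "utt2", 1), ("prosody", "utt2", 4),
    ("pronunciation", "utt1", 4), ("pronunciation", "utt1", 4),
    ("pronunciation", "utt2", 5), ("pronunciation", "utt2", 4),
]
result = disagreement_by_dimension(records)
print(result)
```

Dimensions that stay at the top of this ranking across evaluation rounds are the ones worth examining first.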

Practical Takeaway

Treat disagreement as a source of insight rather than a problem to be eliminated. By understanding the types of disagreement and applying strategies to manage each one, you can tune your TTS models to meet the diverse needs of users. The goal is continuous improvement, not zero disagreement, so that your models both perform well technically and resonate with users in real-world scenarios.

What Else Do People Ask?

Related AI Articles

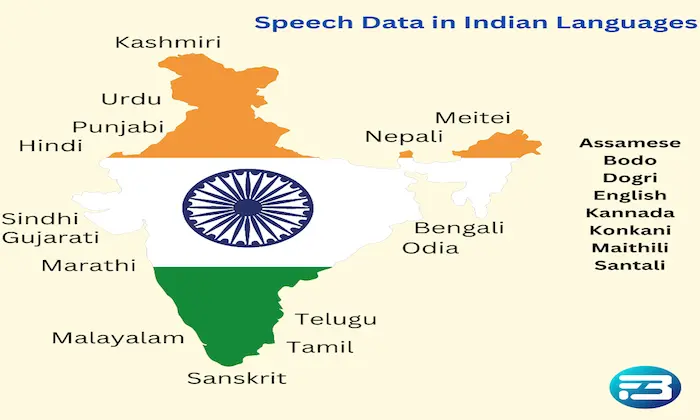

Browse Matching Datasets

Acquiring high-quality AI datasets has never been easier!!!

Get in touch with our AI data expert now!