What red flags indicate a weak evaluation provider?

In AI development, particularly for Text-to-Speech (TTS) systems, the quality of your evaluation partner can significantly influence whether a model succeeds in real-world deployment. A weak provider can produce misleading results, creating a false sense of readiness for systems that still contain hidden flaws.

A reliable evaluation provider acts as a guide throughout the model lifecycle. Identifying weaknesses in a provider early helps teams avoid costly mistakes later.

Why Identifying Weak Evaluation Providers Matters

An ineffective evaluation partner may rely on limited testing methods or incomplete analysis. This can cause teams to deploy models that perform well in controlled testing environments but fail in real user interactions.

For example, a TTS model might receive high scores in simplified evaluation tasks but still sound unnatural when used in real conversations. Recognizing warning signs in evaluation providers helps ensure that model assessments reflect actual user experience.

Common Signs of Weak Evaluation Providers

1. Limited Evaluator Diversity: A reliable evaluation process requires feedback from diverse listeners. If a provider uses a narrow or homogeneous evaluator group, critical insights may be missed. For instance, a globally deployed TTS system evaluated primarily by a single language group may fail to capture regional pronunciation nuances or cultural expectations.

2. Inconsistent Evaluation Methodologies: Evaluation providers should apply clear and consistent methodologies across projects. Providers that frequently change evaluation criteria make results hard to compare from one round to the next, and those that rely heavily on a single metric such as Mean Opinion Score (MOS) reduce speech quality to one averaged number. Comprehensive evaluation should include multiple assessment methods that capture different aspects of speech quality.

3. Ignoring Evaluator Disagreement: Differences in evaluator opinions often reveal important signals about model performance. Providers who treat disagreement as noise rather than insight risk overlooking issues such as inconsistent prosody or varying listener expectations. An effective evaluation process analyzes these disagreements to uncover deeper insights.

4. Lack of Continuous Monitoring: Evaluation should not end once a model is deployed. Reliable providers offer post-deployment monitoring to detect silent regressions and performance drift. Without continuous evaluation, small model updates or changes in input data may degrade speech quality over time.

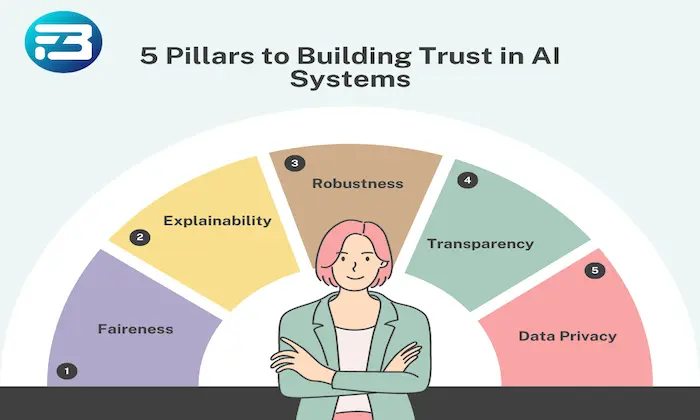

5. Limited Transparency: Transparency is essential in evaluation workflows. If a provider cannot clearly explain their evaluation methods, evaluator selection process, or quality control mechanisms, it may indicate weak internal practices. Transparent reporting builds confidence in evaluation results.
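To make the points about single-metric MOS reporting and evaluator disagreement concrete, here is a minimal sketch, assuming ratings are collected on a 1 to 5 scale and grouped per utterance. The function name `summarize_ratings`, the `disagreement_threshold` value, and the sample data are all hypothetical and chosen only for illustration; they do not describe any particular provider's method.

```python
import math
from statistics import mean, stdev


def summarize_ratings(ratings_by_utterance, disagreement_threshold=1.0):
    """Summarize listener ratings per utterance.

    Returns, for each utterance, the mean opinion score, the sample
    standard deviation, an approximate 95% confidence half-width, and a
    flag marking utterances where listeners disagree strongly.
    The threshold is an illustrative assumption, not a standard value.
    """
    summary = {}
    for utt_id, ratings in ratings_by_utterance.items():
        n = len(ratings)
        mos = mean(ratings)
        # Sample standard deviation needs at least two ratings.
        spread = stdev(ratings) if n > 1 else 0.0
        # Normal-approximation interval; only a rough guide for small panels.
        ci_half_width = 1.96 * spread / math.sqrt(n)
        summary[utt_id] = {
            "mos": mos,
            "stdev": spread,
            "ci95": ci_half_width,
            "high_disagreement": spread > disagreement_threshold,
        }
    return summary


# Hypothetical ratings: both utterances average the same score,
# but listeners agree on the first and split sharply on the second.
ratings = {
    "utt_001": [3, 4, 3, 3, 2],
    "utt_002": [1, 5, 2, 5, 2],
}
report = summarize_ratings(ratings)
for utt_id, stats in report.items():
    print(utt_id, stats)
```

In this made-up data both utterances have the same mean score, yet only the second is flagged for high disagreement. A report that shows the average alone would treat them as equivalent, which is the kind of signal a careful provider would investigate rather than discard.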

How to Choose a Reliable Evaluation Partner

When evaluating potential providers, teams should prioritize partners that demonstrate methodological clarity, evaluator diversity, and structured evaluation frameworks. A strong evaluation partner will also offer ongoing monitoring to ensure models remain reliable after deployment.
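As a rough illustration of what ongoing monitoring can involve, the sketch below compares listener scores for a fixed probe set before and after a model update and flags a drop beyond a tolerance. The function `detect_regression`, the `max_drop` tolerance, and the scores are hypothetical; a real pipeline would likely add significance testing and per-segment breakdowns.

```python
from statistics import mean


def detect_regression(baseline_scores, current_scores, max_drop=0.2):
    """Flag a regression when the mean score falls by more than max_drop.

    Both inputs are assumed to be scores for the same fixed probe set,
    collected under the same evaluation protocol.
    """
    drop = mean(baseline_scores) - mean(current_scores)
    return {"drop": drop, "regressed": drop > max_drop}


# Hypothetical scores for the same probe utterances across two releases.
baseline = [4.2, 4.0, 4.3, 4.1]
current = [3.8, 3.7, 4.0, 3.7]
result = detect_regression(baseline, current)
print(result)
```

Keeping the probe set and protocol fixed between runs is the design choice that matters here: it lets a change in score be attributed to the model rather than to a change in how it was measured.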

Organizations such as FutureBeeAI emphasize these principles by combining structured methodologies, diverse evaluator panels, and continuous evaluation pipelines. This approach helps ensure that TTS systems perform reliably across real-world scenarios.

Practical Takeaway

Choosing the right evaluation provider is a strategic decision that directly affects the success of AI systems. By identifying warning signs such as limited evaluator diversity, inconsistent methodologies, dismissal of evaluator disagreement, lack of transparency, and absence of continuous monitoring, teams can avoid unreliable evaluations and build more dependable models.

A strong evaluation partner helps teams move beyond surface-level testing and develop speech systems that perform consistently and naturally for real users.
