Why does paired comparison scale poorly without crowds?

Imagine evaluating a painting by looking through a keyhole. You might notice a striking color or a beautiful brushstroke, but the overall composition remains hidden. This metaphor captures the limitation of paired comparison methods in AI model evaluation. While comparing two options directly can highlight clear preferences, it rarely provides the broader perspective needed for reliable large-scale evaluation.

This challenge is especially visible in text-to-speech (TTS) systems, where subtle perceptual differences shape the user experience.

The Bias Problem in Small Evaluator Groups

Paired comparison tasks depend heavily on evaluator perception. When evaluations rely on a small group of participants, personal bias can easily influence outcomes.

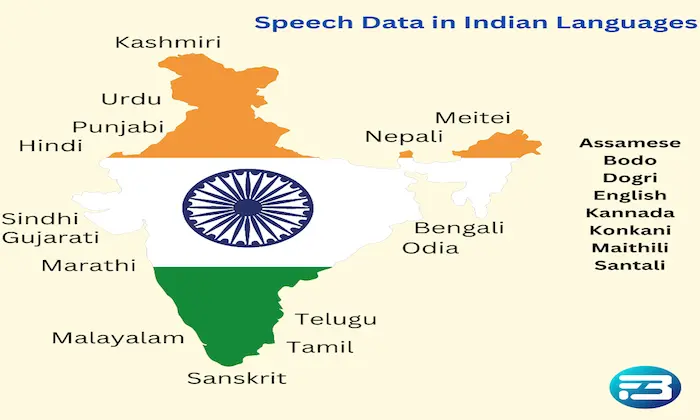

Each evaluator brings their own linguistic background, expectations, and listening habits. For example, someone unfamiliar with certain accents may judge a TTS model as unnatural even if it correctly represents a regional dialect.

This is where crowdsourcing becomes essential. A diverse evaluator pool balances individual biases and produces a more representative assessment of how users will perceive the system.
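One way to picture this balancing effect is to reweight votes by listener group. The sketch below is only illustrative: the group names, vote counts, and population shares are made up, and `weighted_preference` is a hypothetical helper, not part of any particular evaluation toolkit. It compares the raw preference rate from a skewed panel with a rate reweighted to an assumed user population.

```python
def weighted_preference(votes_by_group, population_share):
    """Preference rate for system A, reweighted to assumed population shares.

    votes_by_group:   group -> (votes_for_A, total_votes)
    population_share: group -> share of the target user population (assumed)
    """
    missing = set(population_share) - set(votes_by_group)
    if missing:
        raise ValueError(f"no votes collected for groups: {sorted(missing)}")
    total_share = sum(population_share.values())
    return sum(
        (share / total_share) * (votes_by_group[g][0] / votes_by_group[g][1])
        for g, share in population_share.items()
    )


# Made-up numbers: the panel over-represents listeners familiar with the accent.
votes = {"familiar_with_accent": (36, 40), "unfamiliar_with_accent": (2, 10)}
shares = {"familiar_with_accent": 0.3, "unfamiliar_with_accent": 0.7}

raw_rate = sum(w for w, _ in votes.values()) / sum(n for _, n in votes.values())
adjusted_rate = weighted_preference(votes, shares)

print(f"raw panel preference for A:  {raw_rate:.2f}")
print(f"population-weighted for A:   {adjusted_rate:.2f}")
```

With these invented figures the raw panel favors system A, while the reweighted estimate does not. Reweighting only helps if every relevant group is represented with enough votes, which is the practical argument for recruiting a broad pool in the first place.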

Why Small Panels Fail at Scale

A common misconception in model evaluation is that a small expert panel can capture all meaningful insights. In reality, limited evaluator groups often miss contextual nuances that appear in broader user populations.

Consider evaluating a TTS system designed for healthcare environments. A single evaluator might overlook pronunciation issues related to medical terminology. However, a diverse panel that includes healthcare professionals and general users can identify both technical inaccuracies and usability concerns.

Scaling evaluations with broader participation helps test models against realistic usage expectations rather than a few narrow viewpoints.
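Panel size also limits how much a preference result can be trusted statistically. As a rough sketch, assuming each evaluator casts one independent vote and that 60% of votes favor system A (an invented figure), the Wilson score interval below shows how wide the uncertainty remains for small panels. The function name and the panel sizes are illustrative choices.

```python
import math


def wilson_interval(wins, n, z=1.96):
    """Wilson score interval for a win proportion (z=1.96 is roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = wins / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half


# Same observed 60% preference for A, different panel sizes (made-up data).
for n in (10, 50, 500):
    wins = round(0.6 * n)
    low, high = wilson_interval(wins, n)
    verdict = "A clearly preferred" if low > 0.5 else "inconclusive"
    print(f"n={n:4d}  A wins {wins}/{n}  interval=({low:.2f}, {high:.2f})  {verdict}")
```

Under these assumptions, the interval for 10 or 50 evaluators still includes 0.5, so the panel cannot rule out a tie, while 500 votes can. This covers sampling noise only; a large but homogeneous panel can still be biased.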

What Paired Comparisons Miss

While paired comparisons are useful for determining preference between two options, they often fail to explain why one option is preferred.

Without structured input from diverse evaluators, important perceptual issues may remain hidden. These can include:

Unnatural pause placement

Slightly incorrect stress patterns

Subtle pronunciation inconsistencies

Emotionally flat delivery in conversational contexts

These details may seem minor during evaluation but can significantly affect user satisfaction once the system is deployed.

Strategies for Scaling Paired Comparison Evaluations

Diverse Evaluator Pools: Include participants from varied linguistic, cultural, and professional backgrounds to capture a broader range of user perceptions.

Structured Feedback Rubrics: Pair preference judgments with attribute-level feedback such as naturalness, prosody, and clarity to uncover the reasons behind evaluator choices.

Iterative Evaluation Rounds: Run multiple evaluation cycles with different evaluator groups to detect hidden biases, model regressions, or contextual weaknesses.
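The three strategies above can be combined in a single record format. The following is a minimal sketch, assuming each response stores the evaluator's group, the evaluation round, the preferred system, and the rubric attributes behind the choice. All field names, group labels, and data are hypothetical.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass, field


@dataclass
class Judgment:
    """One paired-comparison response (field names are hypothetical)."""
    evaluator_group: str
    round_id: int
    preferred: str  # "A" or "B"
    reasons: list[str] = field(default_factory=list)  # rubric attributes cited


def win_rate_by(judgments, attr, system="A"):
    """Share of judgments preferring `system`, split by the given attribute."""
    wins, totals = defaultdict(int), defaultdict(int)
    for j in judgments:
        key = getattr(j, attr)
        totals[key] += 1
        wins[key] += j.preferred == system
    return {k: wins[k] / totals[k] for k in totals}


def reasons_against(judgments, system="A"):
    """Count rubric attributes cited when `system` was not preferred."""
    counts = Counter()
    for j in judgments:
        if j.preferred != system:
            counts.update(j.reasons)
    return counts


# Made-up illustrative data, not real evaluation results.
judgments = [
    Judgment("clinician", 1, "B", ["clarity"]),
    Judgment("clinician", 1, "B", ["clarity", "prosody"]),
    Judgment("general", 1, "A", ["naturalness"]),
    Judgment("general", 2, "A", ["naturalness"]),
    Judgment("general", 2, "B", ["prosody"]),
]

print(win_rate_by(judgments, "evaluator_group"))
print(win_rate_by(judgments, "round_id"))
print(reasons_against(judgments).most_common())
```

Splitting win rates by group or by round makes disagreement between evaluator populations visible, and the attribute counts point to why a system lost rather than only that it lost.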

Practical Takeaway

The purpose of model evaluation is not simply to declare a winning model version. The real goal is to extract insights that guide meaningful improvements.

By combining paired comparisons with large-scale crowd participation, organizations reduce the risk of false confidence, where models appear successful in limited testing but fail in real-world deployment.

At FutureBeeAI, evaluation frameworks integrate crowd intelligence with structured methodologies to ensure AI systems are tested under diverse, realistic conditions. If you are looking to strengthen your evaluation pipeline or integrate large-scale perceptual testing, you can contact us to explore tailored solutions.
