When should MUSHRA be used instead of MOS for TTS models?

In Text-to-Speech (TTS) evaluation, the choice of assessment method determines how accurately a model’s performance is measured. Two of the most commonly used methods are Mean Opinion Score (MOS) and MUSHRA (Multiple Stimuli with Hidden Reference and Anchor). Both rely on human listeners, but they serve different purposes and provide different levels of insight.

Understanding when to use each method helps teams make better decisions about model quality and readiness for deployment.

Understanding MOS: A Broad Quality Snapshot

Mean Opinion Score (MOS) is one of the simplest and most widely used evaluation methods in speech technology. In MOS evaluations, listeners rate the quality of individual audio samples on a numerical scale, typically ranging from 1 (bad) to 5 (excellent), and the ratings are averaged into a single score.

Because of its simplicity, MOS provides a quick overview of how natural or intelligible a TTS output sounds. This makes it useful in early-stage development when teams need a general understanding of model performance.

However, MOS has limitations. Because listeners rate each sample on its own rather than against alternatives, subtle differences between models or voice styles may not show up in the scores. As a result, MOS is better suited to high-level assessment than to detailed diagnostic analysis.
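As an illustration of how a MOS figure is usually reported, the sketch below averages a set of 1 to 5 ratings and attaches an approximate 95% confidence interval. The function name `mos_with_ci` and the sample ratings are hypothetical, and the interval uses a normal approximation (z = 1.96), which is an assumption that becomes rough for small listener panels.

```python
import math
import statistics


def mos_with_ci(ratings, z=1.96):
    """Return (mean, half-width of an approximate 95% CI) for 1-5 ratings.

    Assumption: a normal approximation is acceptable; with few ratings a
    t-based interval would be wider.
    """
    if len(ratings) < 2:
        raise ValueError("need at least two ratings")
    if any(r < 1 or r > 5 for r in ratings):
        raise ValueError("ratings must lie between 1 and 5")
    mean = statistics.fmean(ratings)
    # Standard error of the mean from the sample standard deviation.
    sem = statistics.stdev(ratings) / math.sqrt(len(ratings))
    return mean, z * sem


# Hypothetical ratings for one TTS system.
ratings = [4, 5, 3, 4, 4, 5, 3, 4]
mos, half_width = mos_with_ci(ratings)
print(f"MOS = {mos:.2f} +/- {half_width:.2f}")
```

Reporting the interval alongside the mean makes it easier to see when two systems' MOS values are too close to separate, which is the situation where a comparative method becomes useful.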

Understanding MUSHRA: A Detailed Comparative Method

MUSHRA is designed for more precise audio quality evaluation. In this method, listeners rate several versions of the same utterance on one screen, typically on a continuous 0 to 100 scale. The set includes a hidden reference (the highest-quality version, which for TTS is usually a natural human recording) and an anchor (a deliberately degraded sample).
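The trial structure described above can be sketched as follows. This is a minimal illustration, not a full test harness: the function `build_mushra_trial`, the file names, and the system names are all hypothetical. It mixes the hidden reference and the anchor in with the system outputs, shuffles them, and exposes only neutral labels to the listener. In ITU-R BS.1534 the listener can also play a labelled reference, which is why the reference path is returned separately.

```python
import random


def build_mushra_trial(reference, anchor, systems, seed=None):
    """Return (labelled reference, blinded stimuli, answer key) for one trial.

    reference: path to the reference recording
    anchor:    path to the deliberately degraded sample
    systems:   dict mapping system name -> path to that system's output
    """
    rng = random.Random(seed)
    conditions = {"hidden_reference": reference, "anchor": anchor, **systems}
    names = list(conditions)
    rng.shuffle(names)  # presentation order is randomised per trial
    # Listeners see only neutral labels (A, B, C, ...), never condition names.
    labels = [chr(ord("A") + i) for i in range(len(names))]
    stimuli = {label: conditions[name] for label, name in zip(labels, names)}
    answer_key = dict(zip(labels, names))
    return reference, stimuli, answer_key


# Hypothetical file names for one utterance.
ref, stimuli, key = build_mushra_trial(
    "ref.wav", "anchor.wav", {"model_a": "a.wav", "model_b": "b.wav"}, seed=0
)
print(sorted(stimuli))  # the labels shown to the listener
```

The answer key stays on the analysis side so that scores can be mapped back to conditions after the session.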

By comparing samples side-by-side, evaluators can identify subtle differences in speech quality, expressiveness, and naturalness. This structure allows for more granular feedback than MOS.

Because listeners directly compare outputs, MUSHRA helps highlight which model performs better and by how much, and the hidden reference and anchor give those scores fixed points to be read against. This makes it especially valuable for fine-tuning models or comparing multiple voice variants.
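The hidden reference also gives a way to check listener reliability before scores are aggregated. The sketch below drops listeners who rate the hidden reference below 90 on more than 15% of items, then averages the remaining scores per condition. Those thresholds follow the post-screening guidance in ITU-R BS.1534-3 as commonly applied, but they are treated here as adjustable assumptions, and the data layout and function names are hypothetical.

```python
from statistics import fmean


def screen_listeners(scores, min_ref_score=90, max_fail_rate=0.15):
    """Keep listeners who reliably identify the hidden reference.

    scores: {listener: {item: {condition: score in 0..100}}}
    """
    kept = {}
    for listener, items in scores.items():
        fails = sum(
            1 for conds in items.values()
            if conds["hidden_reference"] < min_ref_score
        )
        if fails / len(items) <= max_fail_rate:
            kept[listener] = items
    return kept


def mean_per_condition(scores):
    """Average the 0-100 scores of each condition over listeners and items."""
    by_condition = {}
    for items in scores.values():
        for conds in items.values():
            for condition, score in conds.items():
                by_condition.setdefault(condition, []).append(score)
    return {c: fmean(v) for c, v in by_condition.items()}


# Hypothetical scores: listener "l2" fails to recognise the reference.
scores = {
    "l1": {
        "utt1": {"hidden_reference": 100, "anchor": 20, "model_a": 80, "model_b": 70},
        "utt2": {"hidden_reference": 95, "anchor": 25, "model_a": 85, "model_b": 75},
    },
    "l2": {
        "utt1": {"hidden_reference": 60, "anchor": 70, "model_a": 50, "model_b": 90},
        "utt2": {"hidden_reference": 55, "anchor": 65, "model_a": 40, "model_b": 95},
    },
}
kept = screen_listeners(scores)
means = mean_per_condition(kept)
print(means)
```

A sanity check worth making on the result is that the hidden reference scores near the top of the scale and the anchor near the bottom; if not, the test setup itself may need review.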

Key Differences Between MOS and MUSHRA

Evaluation Structure: MOS evaluates samples individually, while MUSHRA presents several samples together for comparison.

Level of Detail: MOS offers a general quality score, whereas MUSHRA provides deeper insight into subtle quality differences.

Use Case: MOS is useful for quick assessments during early development stages. MUSHRA is better suited for detailed analysis when refining models or selecting between similar voice options.

When to Use MUSHRA in TTS Evaluation

1. High-Quality Voice Systems: Applications such as audiobooks, virtual assistants, and voice branding require natural and expressive speech. MUSHRA helps detect subtle issues in prosody and emotional tone that MOS might overlook.

2. Comparing Multiple Voices or Model Versions: When teams need to choose between several TTS voices or model iterations, MUSHRA’s side-by-side comparison framework makes differences easier to identify.

3. Detecting Quality Regressions: MUSHRA can also be used during ongoing model monitoring to detect subtle declines in voice quality that might not appear in general metrics.
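For the model-comparison and regression cases above, one simple analysis is a paired difference: each listener rates both versions of the same utterance in the same trial, so the scores can be subtracted pairwise. The sketch below is one possible approach under stated assumptions, not a prescribed procedure. The function name and scores are hypothetical, and the interval again uses a normal approximation that is optimistic for small samples.

```python
import math
import statistics


def paired_difference(old_scores, new_scores, z=1.96):
    """Return (mean difference, CI low, CI high) for new minus old.

    Both lists hold MUSHRA scores aligned by (listener, item) pair.
    """
    if len(old_scores) != len(new_scores) or len(old_scores) < 2:
        raise ValueError("need two aligned lists with at least two pairs")
    diffs = [new - old for old, new in zip(old_scores, new_scores)]
    mean_diff = statistics.fmean(diffs)
    half = z * statistics.stdev(diffs) / math.sqrt(len(diffs))
    return mean_diff, mean_diff - half, mean_diff + half


# Hypothetical scores for the current model and a candidate update.
old = [82, 78, 85, 80, 79, 84]
new = [75, 74, 80, 73, 76, 78]
mean_diff, low, high = paired_difference(old, new)

# Flag a regression only when the whole interval lies below zero.
regression = high < 0
print(f"mean difference = {mean_diff:.2f}, regression flagged: {regression}")
```

Pairing removes much of the listener-to-listener variation in how the scale is used, which is part of why side-by-side ratings can resolve differences that independent MOS ratings miss.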

Practical Takeaway

Both MOS and MUSHRA play important roles in TTS evaluation. MOS provides a quick snapshot of perceived speech quality, making it useful during early development phases. MUSHRA, however, offers the deeper analysis required to refine models and evaluate subtle differences in speech synthesis.

Many AI teams combine both approaches. MOS helps filter early candidates, while MUSHRA supports final decision-making when selecting production-ready voices.

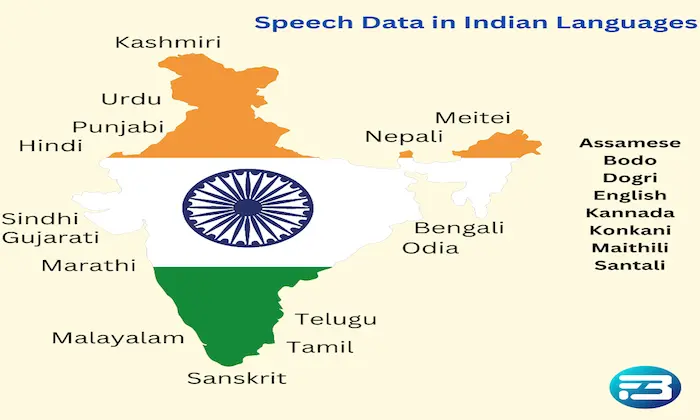

Organizations working on advanced speech systems often rely on structured evaluation frameworks and high-quality datasets to support these processes. Companies such as FutureBeeAI provide evaluation methodologies and curated speech data that help teams implement both MOS and MUSHRA effectively.

Using the right evaluation method helps ensure that TTS systems not only meet technical benchmarks but also deliver speech that feels natural and engaging to real users.
