How does the platform handle long-form TTS evaluation?

Evaluating long-form Text-to-Speech (TTS) systems requires more than checking technical metrics. It is a layered process that focuses on how speech performs over time, how it feels to listeners, and whether it sustains engagement across extended interactions.

In use cases like audiobooks, virtual assistants, and educational content, performance is not judged in seconds but in minutes or hours. A voice that sounds acceptable in short clips can quickly become monotonous, unnatural, or fatiguing in long-form scenarios.

Why Long-Form Evaluation Matters

Long-form TTS introduces challenges that short-form testing cannot capture.

Listener Fatigue: Voices that lack variation become tiring over time, reducing engagement.

Emotional Consistency: Maintaining the right tone across long passages is critical for user trust.

Pacing and Flow: Small issues in pauses or rhythm become amplified in extended content.

Without long-form evaluation, teams risk deploying models that pass short-clip tests but fail once real users listen for minutes or hours.
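One way to make listener fatigue visible is to collect ratings at several points in a long session and check whether they trend downward. The sketch below is illustrative only: the function name `rating_slope`, the (minute, rating) data layout, and the sample values are assumptions, not part of any specific platform.

```python
from statistics import mean

def rating_slope(ratings_by_minute):
    """Least-squares slope of listener rating against elapsed minutes.

    `ratings_by_minute` is a list of (minute, rating) pairs. A clearly
    negative slope suggests quality perception degrades as listening continues.
    """
    xs = [minute for minute, _ in ratings_by_minute]
    ys = [rating for _, rating in ratings_by_minute]
    x_bar, y_bar = mean(xs), mean(ys)
    denom = sum((x - x_bar) ** 2 for x in xs)
    if denom == 0:
        raise ValueError("need ratings from at least two distinct time points")
    return sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / denom

# Hypothetical ratings (1-5 scale) gathered every 10 minutes of an audiobook chapter.
samples = [(0, 4.4), (10, 4.3), (20, 4.0), (30, 3.8), (40, 3.5)]
slope = rating_slope(samples)
print(f"rating change per minute: {slope:.3f}")
```

A short-clip test would only ever see the first data point here, which is why the decline goes unnoticed without long-form sessions.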

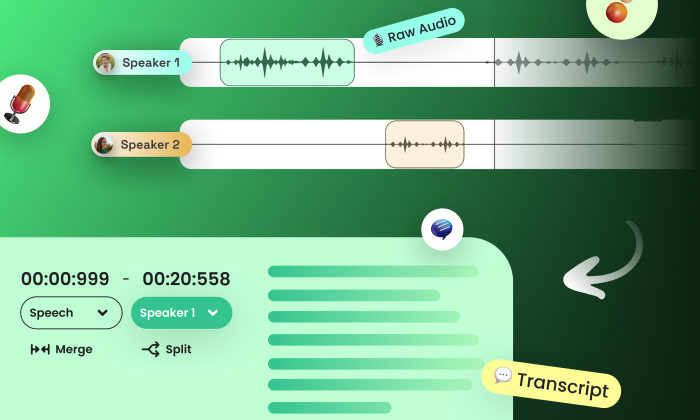

The Structured Stages of Long-Form TTS Evaluation

Prototype Stage: Quickly identify and eliminate weak voice options using coarse evaluation methods like Mean Opinion Score (MOS), while documenting gaps that require deeper validation later.

Pre-Production Stage: Refine performance using native-speaker evaluators and structured rubrics to assess prosody, emotional tone, and contextual delivery in greater detail.

Production Readiness: Validate stability through regression testing against the previous release and through confidence-based analysis, which looks at the spread and uncertainty of ratings rather than average scores alone, to confirm consistent performance before deployment.

Post-Deployment Stage: Continuously monitor performance through human feedback to detect silent regressions and behavioral drift as the model interacts with real-world data.
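To illustrate why the production-readiness stage looks beyond averages, the following sketch compares two candidate voices with the same mean score. It assumes a normal-approximation 95% interval and a hypothetical pass rule (the candidate's lower bound must stay within a margin of the baseline mean). The function names, the margin, and the ratings are all assumptions made for illustration.

```python
from math import sqrt
from statistics import mean, stdev

def mos_interval(scores, z=1.96):
    """Mean opinion score with a normal-approximation confidence interval."""
    if len(scores) < 2:
        raise ValueError("need at least two ratings")
    m = mean(scores)
    half_width = z * stdev(scores) / sqrt(len(scores))
    return m, m - half_width, m + half_width

def passes_regression_gate(baseline, candidate, margin=0.1):
    """Pass only if the candidate's lower bound is within `margin` of the baseline mean."""
    base_mean, _, _ = mos_interval(baseline)
    _, cand_low, _ = mos_interval(candidate)
    return cand_low >= base_mean - margin

# Hypothetical 1-5 ratings. Both candidates average 4.5.
baseline = [4, 4, 5, 4, 4, 5, 4, 4]
steady = [4, 5, 4, 5, 4, 5, 4, 5, 4, 5, 4, 5]
erratic = [5, 5, 5, 5, 2, 5, 5, 2, 5, 5, 5, 5]

for name, scores in (("steady", steady), ("erratic", erratic)):
    m, low, high = mos_interval(scores)
    verdict = "pass" if passes_regression_gate(baseline, scores) else "fail"
    print(f"{name}: mean={m:.2f} interval=({low:.2f}, {high:.2f}) -> {verdict}")
```

The erratic voice fails despite matching the steady voice's average, because occasional very low ratings widen its interval. With small rating panels, a t-based interval or a bootstrap would be a more careful choice than the normal approximation used here.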

Key Evaluation Dimensions

Naturalness: Measures whether the voice maintains a human-like quality throughout extended listening.

Prosody: Evaluates rhythm, stress, and intonation consistency across long passages.

Pronunciation Accuracy: Ensures correct articulation of complex or domain-specific terms.

Engagement: Assesses whether the voice sustains listener interest without causing fatigue.
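The four dimensions above can be scored separately so that a weak dimension is not hidden by a strong overall average. The sketch below assumes a hypothetical rubric in which each evaluator gives a 1-5 score per dimension; the dimension keys, the `floor` threshold, and the sample ratings are illustrative assumptions.

```python
from collections import defaultdict
from statistics import mean

DIMENSIONS = ("naturalness", "prosody", "pronunciation", "engagement")

def summarize_rubric(ratings, floor=3.5):
    """Return per-dimension means and the dimensions whose mean falls below `floor`."""
    per_dimension = defaultdict(list)
    for rating in ratings:
        for name in DIMENSIONS:
            per_dimension[name].append(rating[name])
    means = {name: mean(per_dimension[name]) for name in DIMENSIONS}
    flagged = [name for name in DIMENSIONS if means[name] < floor]
    return means, flagged

# Hypothetical rubric sheets from three evaluators for one long-form sample.
ratings = [
    {"naturalness": 4, "prosody": 4, "pronunciation": 5, "engagement": 3},
    {"naturalness": 5, "prosody": 4, "pronunciation": 4, "engagement": 3},
    {"naturalness": 4, "prosody": 3, "pronunciation": 5, "engagement": 2},
]
means, flagged = summarize_rubric(ratings)
overall = mean(list(means.values()))
print(f"overall={overall:.2f} flagged={flagged}")
```

In this made-up example the overall average clears the threshold while engagement does not, which is the kind of gap a single aggregate score would conceal.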

Common Pitfalls in Long-Form Evaluation

Over-Reliance on Metrics: Automated scores often fail to capture perceptual issues like monotony or emotional flatness.

Short-Form Bias: Testing only short clips leads to missed issues that appear over longer durations.

Ignoring Human Feedback: Without listener insights, subtle quality issues remain undetected.

These gaps can result in models that seem effective in testing but struggle in real-world usage.

Practical Takeaway

Effective long-form TTS evaluation requires a multi-stage, human-centered approach. By combining structured methodologies with continuous feedback, teams can ensure their models deliver not just clarity, but sustained engagement and emotional relevance over time.

Conclusion

Long-form TTS evaluation is not a one-time checkpoint but an ongoing process. By aligning evaluation stages with real-world usage and prioritizing human perception, teams can build systems that remain consistent, engaging, and reliable. This approach ensures that TTS models go beyond technical success and truly connect with users.
