Why does evaluator training matter in TTS assessment?

In Text-to-Speech (TTS) evaluation, automated metrics provide useful indicators of system performance, but they cannot fully capture how real listeners perceive speech. Human evaluators bridge this gap by assessing qualities such as naturalness, conversational rhythm, and emotional delivery. Proper evaluator training helps keep these assessments consistent, reliable, and aligned with real user expectations, so that evaluators can identify issues that numerical metrics often miss.

Why Evaluator Training Matters

Human perception of speech is nuanced. Two models may produce similar Mean Opinion Scores (MOS), yet listeners may perceive one as more engaging or more natural in conversation. Trained evaluators can detect these subtle differences and translate them into actionable feedback for development teams.
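As a rough illustration of how similar averages can hide different listener experiences, the sketch below computes a MOS with an approximate 95% confidence interval for two hypothetical models. The ratings, the function name `mos_summary`, and the normal-approximation interval are assumptions made for illustration, not part of any specific evaluation toolkit.

```python
from math import sqrt
from statistics import mean, stdev


def mos_summary(scores):
    """Return (MOS, approximate 95% CI half-width) for a list of 1-5 ratings."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two ratings to estimate spread")
    # Normal approximation: an assumption that is only rough for small panels.
    half_width = 1.96 * stdev(scores) / sqrt(n)
    return mean(scores), half_width


# Hypothetical ratings from six listeners per model.
model_a = [4, 4, 4, 4, 4, 4]  # listeners agree
model_b = [5, 5, 5, 3, 3, 3]  # listeners are split

mos_a, ci_a = mos_summary(model_a)
mos_b, ci_b = mos_summary(model_b)
print(f"Model A: MOS={mos_a:.2f} +/- {ci_a:.2f}")
print(f"Model B: MOS={mos_b:.2f} +/- {ci_b:.2f}")
```

Both hypothetical models report the same MOS, but the wider interval for Model B points to split opinions that an average alone does not explain. Qualitative notes from trained evaluators are what make that split interpretable.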

Without training, evaluators may apply inconsistent standards when assessing attributes such as clarity, pronunciation, or emotional tone. This inconsistency reduces the reliability of evaluation results and makes it difficult for teams to compare models accurately.

Key Benefits of Evaluator Training

Nuanced Listening and Judgment: Trained evaluators learn to recognize subtle aspects of synthesized speech such as prosodic balance, conversational pacing, and emotional expressiveness. These perceptual insights often reveal issues that automated evaluation cannot detect.

Consistency Across Evaluation Sessions: Training aligns evaluators around clear criteria and scoring guidelines. When evaluators apply the same standards, model comparisons become more reliable and reproducible.

Actionable Feedback for Model Improvement: Evaluators do more than assign scores. Their qualitative observations help engineers identify specific weaknesses in model architecture, speech datasets, or synthesis strategies.

Detection of Subtle Performance Issues: Trained evaluators are better equipped to identify problems such as unnatural pauses, robotic speech patterns, or inconsistent pronunciation that may degrade user experience over time.
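One way to check the consistency benefit described above is to measure how closely evaluators agree on the same set of clips. The following is a minimal sketch that averages the pairwise Pearson correlation between evaluators. The evaluator names, the scores, and the choice of Pearson correlation are assumptions for illustration; teams may prefer other agreement statistics.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length score lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0  # correlation is undefined for constant scores; treated as 0 here
    return cov / sqrt(var_x * var_y)


def mean_pairwise_agreement(ratings):
    """Average Pearson correlation over all evaluator pairs.

    `ratings` maps an evaluator ID to scores for the same clips, in the same order.
    """
    pairs = list(combinations(ratings, 2))
    if not pairs:
        raise ValueError("need at least two evaluators")
    return sum(pearson(ratings[a], ratings[b]) for a, b in pairs) / len(pairs)


# Hypothetical scores from three evaluators on four clips.
ratings = {
    "eval_1": [4, 3, 5, 2],
    "eval_2": [4, 3, 5, 2],
    "eval_3": [5, 4, 5, 3],
}
agreement = mean_pairwise_agreement(ratings)
print(f"Mean pairwise agreement: {agreement:.3f}")
```

Correlation captures whether evaluators rank clips similarly, not whether they use the same absolute scale, so an evaluator who scores consistently higher than the rest can still correlate well with the panel.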

Common Pitfalls Without Evaluator Training

Overreliance on Automated Metrics: Automated metrics, and single aggregate scores such as MOS, provide general indicators of quality but often overlook perceptual nuances in speech delivery.

Inconsistent Evaluation Standards: Without shared guidelines, evaluators may interpret evaluation criteria differently, producing unreliable results.

Missed Signals in Evaluator Disagreement: Differences in evaluator opinions can highlight deeper issues in speech synthesis. Without training, evaluators and the teams reviewing their scores may dismiss these differences as noise rather than investigating them.
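To make disagreement visible rather than averaging it away, a team could flag clips whose ratings are widely spread and send them for review. The sketch below is one simple way to do this. The clip IDs, the ratings, the function name `flag_disputed_clips`, and the threshold of 1.0 are all hypothetical.

```python
from statistics import stdev


def flag_disputed_clips(ratings_by_clip, threshold=1.0):
    """Return clip IDs whose ratings have a sample standard deviation >= threshold."""
    flagged = []
    for clip_id, scores in ratings_by_clip.items():
        # stdev needs at least two ratings; clips with fewer are skipped.
        if len(scores) >= 2 and stdev(scores) >= threshold:
            flagged.append(clip_id)
    return flagged


# Hypothetical ratings from four evaluators per clip.
ratings_by_clip = {
    "clip_a": [4, 4, 5, 4],  # broad agreement
    "clip_b": [1, 5, 2, 5],  # strong disagreement worth investigating
}
disputed = flag_disputed_clips(ratings_by_clip)
print(disputed)
```

A flagged clip is a prompt for discussion, not proof of a defect. The disagreement may come from the audio itself or from evaluators reading the criteria differently.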

Best Practices for Effective Evaluator Training

Structured Evaluation Rubrics: Provide clear guidelines defining attributes such as naturalness, intelligibility, pronunciation accuracy, prosody, and emotional tone.

Calibration Sessions: Conduct regular sessions where evaluators review example outputs together and align on scoring standards.

Ongoing Skill Development: Periodically retrain evaluators to maintain consistency and ensure they remain aligned with evolving evaluation frameworks.
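The rubric and calibration practices above can be sketched in code. The example below validates a rating against the rubric attributes and then measures how far each evaluator drifts from reference scores agreed on in a calibration session. The 1-5 scale, the identifier names, and the reference scores are assumptions for illustration.

```python
from statistics import mean

# Attribute names follow the rubric described above; the 1-5 scale is an assumption.
RUBRIC_ATTRIBUTES = (
    "naturalness",
    "intelligibility",
    "pronunciation_accuracy",
    "prosody",
    "emotional_tone",
)
SCALE_MIN, SCALE_MAX = 1, 5


def validate_rating(rating):
    """Raise ValueError if a rating omits an attribute or falls outside the scale."""
    missing = [attr for attr in RUBRIC_ATTRIBUTES if attr not in rating]
    if missing:
        raise ValueError(f"missing attributes: {missing}")
    for attr in RUBRIC_ATTRIBUTES:
        if not SCALE_MIN <= rating[attr] <= SCALE_MAX:
            raise ValueError(f"{attr} out of range: {rating[attr]}")


def calibration_bias(evaluator_scores, reference_scores):
    """Mean signed difference between an evaluator's scores and the reference scores."""
    if len(evaluator_scores) != len(reference_scores):
        raise ValueError("score lists must cover the same clips")
    return mean([e - r for e, r in zip(evaluator_scores, reference_scores)])


# Hypothetical complete rating for one clip.
validate_rating({
    "naturalness": 4,
    "intelligibility": 5,
    "pronunciation_accuracy": 4,
    "prosody": 3,
    "emotional_tone": 3,
})

# Hypothetical reference scores agreed on during a calibration session.
reference = [4, 3, 5, 2]
evaluators = {
    "eval_1": [4, 3, 5, 2],
    "eval_2": [5, 4, 5, 3],
}
biases = {name: calibration_bias(scores, reference) for name, scores in evaluators.items()}
print(biases)
```

A positive bias means the evaluator scores higher than the reference on average. Tracking this value across sessions is one way to decide when retraining is due.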

Practical Takeaway

Evaluator training is a fundamental component of reliable TTS evaluation. By equipping evaluators with structured guidelines, listening examples, and ongoing calibration processes, teams can produce evaluation results that accurately reflect real user experience.

This approach helps ensure that models are not only optimized for benchmark metrics but also deliver natural and engaging speech in real-world applications.

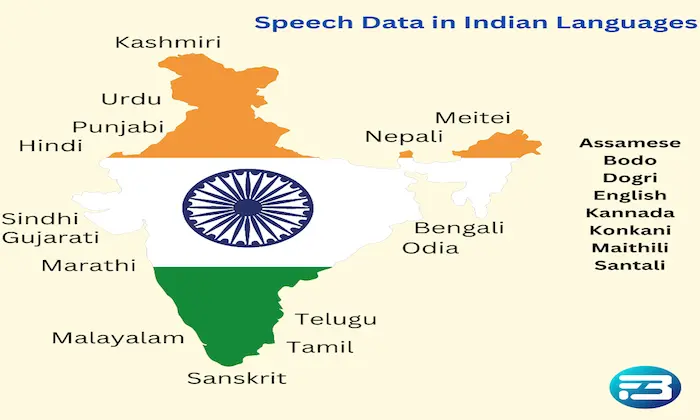

Organizations such as FutureBeeAI support structured evaluation pipelines that combine trained evaluators with scalable data collection workflows. Teams building speech systems can also leverage resources like the FutureBeeAI TTS speech dataset to support robust training and evaluation strategies.

FAQs

Q. What skills should TTS evaluators be trained in?

A. Evaluators should be trained to assess pronunciation accuracy, prosody, naturalness, emotional tone, and contextual appropriateness in synthesized speech outputs.

Q. How can teams maintain evaluator consistency over time?

A. Teams can conduct periodic calibration sessions, provide structured evaluation rubrics, and implement retraining programs to ensure evaluators apply consistent standards during assessments.
