How do we ensure long-term trust?

Trust in AI systems forms the backbone of their adoption and success. Consider the scenario where a well-regarded AI solution suddenly starts delivering inconsistent outcomes. Users are likely to lose confidence, leading to underutilization and skepticism. To prevent such trust failures, AI practitioners must adopt a strategic, multifaceted approach to ensure their solutions remain reliable and user-centric over time.

Why Trust Matters for AI Success

For AI engineers, product managers, researchers, and innovation leaders, building trust goes beyond engineering excellence. It directly impacts user engagement, business outcomes, and the long-term viability of AI initiatives. Trust is not a one-time achievement. It is an ongoing process that requires consistency, transparency, and responsiveness.

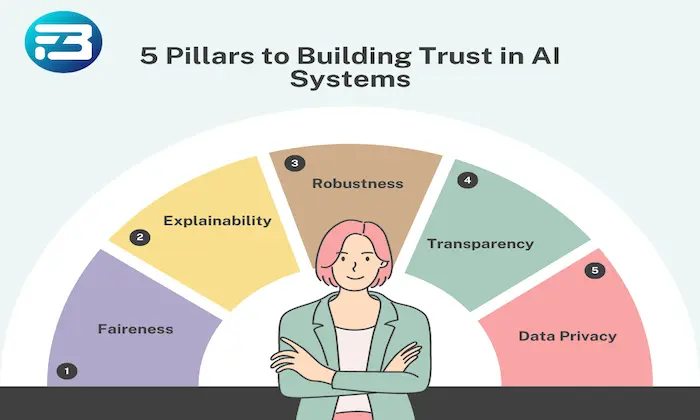

Key Strategies for Building Trust

Ensure Consistency with Rigorous Evaluation: Users judge reliability by whether a system performs consistently. Use structured evaluation methods, such as A/B testing and human evaluation, to verify that models meet user expectations. For example, at FutureBeeAI, multi-layered quality checks help detect performance drift and keep outcomes stable over time.
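
A minimal Python sketch of two checks of this kind, a drift check and an A/B comparison. It assumes two things:

- Each evaluation round yields per-sample quality scores between 0 and 1.
- The A/B test records binary outcomes, such as whether a user accepted a generated output.

The function names, the 0.05 tolerance, and the two-proportion z-test are illustrative assumptions. They are not FutureBeeAI's actual pipeline.

```python
import math
from statistics import mean


def detect_drift(baseline_scores, recent_scores, max_drop=0.05):
    """Flag drift when the recent mean score falls more than max_drop below the baseline mean.

    This is a simple threshold check, not a statistical test; max_drop is a hypothetical tolerance.
    """
    if not baseline_scores or not recent_scores:
        raise ValueError("both score lists must be non-empty")
    return mean(baseline_scores) - mean(recent_scores) > max_drop


def ab_test(successes_a, trials_a, successes_b, trials_b):
    """Two-proportion z-test comparing the success rates of variant A and variant B.

    Returns (z, two_sided_p_value); a positive z means B outperformed A.
    """
    p_a = successes_a / trials_a
    p_b = successes_b / trials_b
    pooled = (successes_a + successes_b) / (trials_a + trials_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / trials_a + 1 / trials_b))
    if se == 0:
        # All outcomes identical (all successes or all failures): no evidence of a difference.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value under the normal approximation.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Usage with made-up numbers.
print(detect_drift([0.91, 0.92, 0.90], [0.84, 0.83, 0.85]))  # True: mean dropped by about 0.07
z, p = ab_test(40, 100, 60, 100)
print(f"z={z:.2f}, p={p:.4f}")
```

In practice, a drift alert like this would trigger a closer human review rather than an automatic rollback. The threshold is a product decision, not a statistical one.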

Foster Transparent Communication: Transparency strengthens user confidence, especially when issues arise. Clearly communicate model limitations, evaluation results, and failure cases. Providing detailed evaluation insights helps users understand how decisions are made and how improvements are being implemented.
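
One way to make this routine is to publish a short, structured summary with every model release, in the spirit of a model card. The sketch below uses an `EvaluationReport` class invented for illustration. Its field names and example values are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class EvaluationReport:
    """Hypothetical structure for publishing evaluation results alongside a model release."""

    model_name: str
    metrics: dict[str, float]
    limitations: list[str] = field(default_factory=list)
    failure_cases: list[str] = field(default_factory=list)

    def to_text(self) -> str:
        """Render a plain-text summary that users can read without access to internal tooling."""
        lines = [f"Model: {self.model_name}", "Evaluation results:"]
        for name, value in sorted(self.metrics.items()):
            lines.append(f"  - {name}: {value:.2f}")
        lines.append("Known limitations:")
        # State explicitly when nothing is documented instead of silently omitting the section.
        lines.extend(f"  - {item}" for item in self.limitations or ["none documented"])
        lines.append("Known failure cases:")
        lines.extend(f"  - {item}" for item in self.failure_cases or ["none documented"])
        return "\n".join(lines)


report = EvaluationReport(
    model_name="tts-demo-v2",
    metrics={"MOS (naturalness)": 4.1, "word error rate": 0.06},
    limitations=["Limited emotional range in long-form narration"],
)
print(report.to_text())
```

Keeping limitations and failure cases as first-class fields means an empty section is visible to readers rather than hidden.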

Actively Engage with User Feedback: User feedback provides direct insight into real-world performance. Collect feedback through structured channels and integrate it into model improvement cycles. For instance, if users highlight a lack of emotional nuance in a text-to-speech model, addressing this feedback improves both system quality and user trust.
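
A simple way to turn raw feedback into input for the next improvement cycle is to count recurring issues among low-rated responses. The sketch below assumes feedback arrives as records with a 1-5 rating and a set of issue tags. The `Feedback` class, the tag names, and the rating cutoff are hypothetical.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass
class Feedback:
    user_id: str
    rating: int            # assumed 1-5 satisfaction scale
    tags: tuple[str, ...]  # hypothetical issue labels chosen by the user or a reviewer


def prioritize_issues(feedback, max_rating=2, top_n=3):
    """Count issue tags on low-rated feedback and return the most frequent (tag, count) pairs."""
    counts = Counter()
    for item in feedback:
        if item.rating <= max_rating:
            counts.update(item.tags)
    return counts.most_common(top_n)


feedback = [
    Feedback("u1", 1, ("emotional_nuance",)),
    Feedback("u2", 2, ("emotional_nuance", "pronunciation")),
    Feedback("u3", 5, ("pronunciation",)),
    Feedback("u4", 1, ("latency",)),
]
print(prioritize_issues(feedback))
```

In this made-up data, "emotional_nuance" comes out on top, which matches the text-to-speech example above. Restricting the count to low ratings is one design choice. Weighting tags by how low the rating was would be another.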

Implement Comprehensive Evaluation Strategies: Relying only on automated metrics can lead to incomplete assessments. Combine automated evaluation with human evaluation to capture perceptual attributes such as naturalness and emotional appropriateness. This ensures a more accurate understanding of model performance.
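
A minimal sketch of combining the two kinds of evidence. It assumes two inputs:

- An automated error-rate metric, where lower is better.
- Human ratings on a 1-5 mean opinion score (MOS) scale.

The thresholds and function names are hypothetical. The confidence interval uses a normal approximation, which is rough for small rating pools.

```python
import math
from statistics import mean, stdev


def mos_with_ci(ratings, z=1.96):
    """Return the mean opinion score and an approximate 95% confidence interval."""
    if len(ratings) < 2:
        raise ValueError("need at least two ratings to estimate spread")
    mos = mean(ratings)
    half_width = z * stdev(ratings) / math.sqrt(len(ratings))
    return mos, (mos - half_width, mos + half_width)


def passes_release_gate(auto_error_rate, ratings, max_error=0.10, min_mos=3.5):
    """Require both the automated metric and the human evaluation to clear their thresholds.

    Checking the lower CI bound makes it harder for a small or noisy rating pool to pass by chance.
    """
    _, (lower, _) = mos_with_ci(ratings)
    return auto_error_rate <= max_error and lower >= min_mos


ratings = [4, 4, 5, 4, 5, 4, 4, 5]
print(mos_with_ci(ratings))
print(passes_release_gate(0.05, ratings))
print(passes_release_gate(0.05, [2, 3, 2, 3]))
```

A model with a low automated error rate can still fail this gate if listeners find it unnatural. That is the gap that automated metrics alone would miss.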

Proactively Address Bias and Fairness: Bias can significantly erode trust. Regularly audit models for issues such as culturally insensitive outputs, or mispronunciations that affect some languages or accents more than others. Addressing these issues proactively supports fairness and inclusivity and reinforces user confidence in the system.
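
Bias audits often start by breaking an error metric down by user group, because an acceptable overall rate can hide a group that is served much worse. The sketch below assumes each evaluated output is labeled with two things:

- A group, such as an accent or locale.
- A flag showing whether a reviewer marked it as an error, such as a mispronunciation.

The group labels and the 0.05 gap threshold are hypothetical.

```python
from collections import defaultdict


def audit_by_group(results, max_gap=0.05):
    """Compute per-group error rates and flag groups well above the overall rate.

    results: iterable of (group, is_error) pairs, e.g. ("en-IN", True).
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, is_error in results:
        totals[group] += 1
        errors[group] += int(is_error)
    if not totals:
        raise ValueError("no results to audit")
    overall = sum(errors.values()) / sum(totals.values())
    rates = {group: errors[group] / totals[group] for group in totals}
    # Flag any group whose error rate exceeds the overall rate by more than max_gap.
    flagged = sorted(group for group, rate in rates.items() if rate - overall > max_gap)
    return rates, flagged


results = (
    [("en-US", False)] * 9 + [("en-US", True)]
    + [("en-IN", False)] * 7 + [("en-IN", True)] * 3
)
rates, flagged = audit_by_group(results)
print(rates)    # {'en-US': 0.1, 'en-IN': 0.3}
print(flagged)  # ['en-IN']
```

Comparing each group against the overall rate is only one simple choice. With small groups, a statistical test or larger samples would give more reliable flags.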

Practical Takeaway

Building long-term trust in AI systems requires more than technical accuracy. It requires a structured approach that prioritizes consistency, transparency, user feedback, comprehensive evaluation, and fairness. By embedding these practices into the development lifecycle, teams can create systems that remain reliable and aligned with user expectations over time.

At FutureBeeAI, evaluation frameworks and feedback-driven processes are designed to support this goal, helping teams build AI systems that are both dependable and user-centric.

FAQs

Q. How can transparent communication improve trust in AI systems?

A. Transparent communication helps users understand how AI systems function, including their limitations and performance characteristics. By clearly explaining issues and improvements, organizations can build confidence and demonstrate accountability.

Q. What are effective ways to gather user feedback for AI systems?

A. Effective methods include structured surveys, direct user interviews, feedback forms, and usage analytics. These approaches provide insights into real-world performance and help guide continuous improvement of AI systems.
