How does domain expertise change model evaluation outcomes?

In AI model evaluation, especially for systems like Text-to-Speech (TTS), domain expertise is not an optional enhancement; it multiplies evaluation quality. Without it, evaluations risk being technically correct but practically irrelevant.

Why Domain Expertise Changes Evaluation Outcomes

Generic evaluation frameworks often miss what truly matters in real-world use. Domain experts bring contextual understanding that aligns evaluation with actual user expectations, use-case requirements, and risk profiles.

They bridge the gap between “model performance” and “real-world effectiveness.”

Where Domain Experts Add Critical Value

Attribute Prioritization: Domain experts know which attributes matter most in a given context. For example, a healthcare TTS system prioritizes pronunciation accuracy and clarity, while a storytelling system emphasizes expressiveness and engagement.

Interpretation of Human Feedback: Human evaluation is inherently subjective. Domain experts can interpret feedback in context, separating noise from meaningful signals.

Detection of Subtle Failures: Many real-world failures are not obvious. Issues like unnatural stress, incorrect emphasis, or tone mismatch are often detected only by those familiar with domain expectations.

Cultural and Contextual Sensitivity: Domain experts understand how communication norms vary across industries and audiences. This helps ensure that outputs match user expectations and avoid misinterpretation.

Structured Feedback Loops: Experts design feedback systems that go beyond identifying issues. They connect problems to root causes and guide improvements that are aligned with domain requirements.
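Attribute prioritization can be made concrete as a weighting step applied to human ratings. The sketch below is a minimal illustration, assuming per-attribute mean opinion scores on a 1 to 5 scale. The attribute names, the `DOMAIN_WEIGHTS` profiles, and the weight values are hypothetical placeholders that a domain expert would set. They are not taken from any real evaluation framework.

```python
# Hypothetical domain weight profiles (assumed values); in practice,
# domain experts would decide which attributes matter most and by how much.
DOMAIN_WEIGHTS = {
    "healthcare": {"pronunciation": 0.4, "clarity": 0.4, "expressiveness": 0.1, "engagement": 0.1},
    "storytelling": {"pronunciation": 0.1, "clarity": 0.2, "expressiveness": 0.4, "engagement": 0.3},
}


def weighted_score(attribute_scores: dict[str, float], domain: str) -> float:
    """Combine per-attribute scores (assumed 1-5 scale) using one domain's weights."""
    weights = DOMAIN_WEIGHTS[domain]
    total_weight = sum(weights.values())
    # Weighted average: attributes the domain cares about dominate the result.
    return sum(weights[attr] * attribute_scores[attr] for attr in weights) / total_weight


# Hypothetical ratings for a single voice, scored under both domain profiles.
ratings = {"pronunciation": 3.0, "clarity": 3.5, "expressiveness": 4.8, "engagement": 4.6}

for domain in DOMAIN_WEIGHTS:
    print(domain, round(weighted_score(ratings, domain), 2))
```

Under these assumed weights, the same ratings score about 3.54 for healthcare and 4.3 for storytelling. The voice's strengths, expressiveness and engagement, count for more in storytelling. This is the kind of reordering of priorities that domain experts supply.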

Real-World Impact

Without domain expertise, models may pass evaluation but fail in deployment.

A legal TTS system may sound clear but lack the authority expected in professional communication.

A medical TTS system may be intelligible but mispronounce critical terminology.

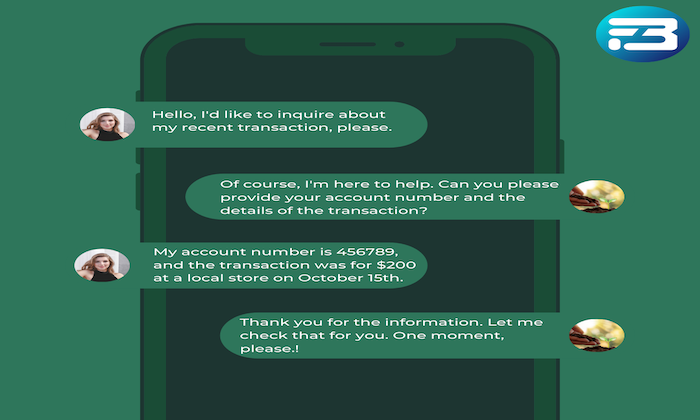

A customer support voice may be accurate but fail to convey empathy.

These are not technical failures. They are contextual failures, and they directly impact user trust and adoption.
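One way to keep contextual failures from slipping through is to pair an aggregate threshold with per-attribute floors that domain experts define. The sketch below assumes a hypothetical `passes_gate` check, hypothetical attribute names, and illustrative thresholds. It shows how a medical TTS model can clear an overall bar and still fail on terminology.

```python
from dataclasses import dataclass, field


@dataclass
class GateResult:
    passed: bool
    failures: list[str] = field(default_factory=list)


def passes_gate(scores: dict[str, float], overall_min: float,
                critical_floors: dict[str, float]) -> GateResult:
    """Hypothetical release gate: an overall average threshold plus expert-defined per-attribute floors."""
    failures = []
    overall = sum(scores.values()) / len(scores)
    if overall < overall_min:
        failures.append(f"overall {overall:.2f} < {overall_min}")
    for attr, floor in critical_floors.items():
        # A missing critical attribute is treated as a score of 0.0, so it always fails.
        value = scores.get(attr, 0.0)
        if value < floor:
            failures.append(f"{attr} {value:.2f} < {floor}")
    return GateResult(passed=not failures, failures=failures)


# Hypothetical medical TTS scores (1-5 scale): strong on average, weak on terminology.
medical_scores = {"clarity": 4.6, "naturalness": 4.4, "term_pronunciation": 3.1}

generic = passes_gate(medical_scores, overall_min=4.0, critical_floors={})
domain_aware = passes_gate(medical_scores, overall_min=4.0,
                           critical_floors={"term_pronunciation": 4.5})

print(generic.passed, domain_aware.passed, domain_aware.failures)
```

With these assumed numbers, the average is about 4.03, so the generic gate passes. The domain-aware gate fails because `term_pronunciation` falls below the expert-set floor.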

Practical Takeaway

Domain expertise ensures that evaluation is aligned with real-world expectations, not just technical benchmarks. It transforms evaluation from a generic scoring process into a context-aware decision system.

By integrating domain experts into evaluation workflows, teams can identify meaningful issues, prioritize the right improvements, and build systems that perform effectively in their intended environments.

At FutureBeeAI, evaluation frameworks incorporate domain-specific expertise to ensure that TTS models are not only technically sound but also contextually accurate and user-aligned. If you are looking to strengthen your evaluation strategy, you can explore tailored solutions through the contact page.

FAQs

Q. Why is domain expertise important in AI model evaluation?

A. Domain expertise ensures that evaluation aligns with real-world use cases, helping identify context-specific issues that generic evaluation methods may miss.

Q. Can domain expertise replace automated evaluation methods?

A. No. Domain expertise complements automated methods. While metrics provide scalable measurement, experts provide contextual interpretation, ensuring a balanced and effective evaluation process.
