How do evaluation partners handle data privacy?

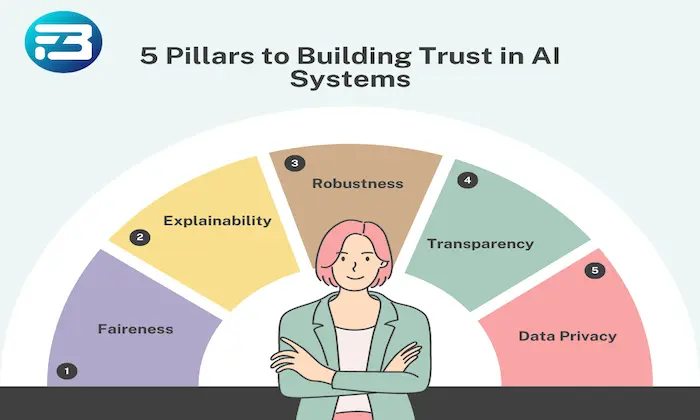

Handling data privacy in AI evaluations, especially in sensitive areas like text-to-speech (TTS), requires a careful balance between extracting meaningful insights and protecting user data. Strong privacy practices are not just about compliance; they maintain trust while still enabling high-quality evaluations.

Why Data Privacy Matters in Evaluation

Data privacy is foundational to sustainable AI systems. Regulations like GDPR and CCPA set the baseline, but real success comes from building user confidence. If users feel their data is unsafe, participation drops, and evaluation quality suffers.

For TTS systems, privacy becomes even more critical: a voice recording can identify the speaker and may reveal traits such as accent, age, or emotional state.

Five Essential Techniques for Safeguarding User Data

Anonymization Techniques: Removing personally identifiable information is the first step. Beyond that, voice masking can obscure speaker identity in the audio itself, and differential privacy can add calibrated noise to aggregate results so that no single participant's contribution can be inferred. These methods let evaluators assess quality without exposing sensitive details.
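As a rough illustration of the first step, the sketch below replaces speaker IDs with a keyed hash and redacts emails and phone numbers from transcripts. The function names, the `spk_` prefix, and both regular expressions are assumptions made for this example; real PII detection needs much broader coverage, and keyed hashing is pseudonymization rather than full anonymization, so the output should still be treated as personal data.

```python
import hashlib
import hmac
import re

# Hypothetical patterns for illustration only; production PII detection
# needs far broader coverage (names, addresses, IDs, and so on).
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def pseudonymize_id(speaker_id: str, secret_key: bytes) -> str:
    """Replace a speaker ID with a keyed hash so evaluators never see the original.

    The same ID and key always give the same pseudonym, so ratings can still
    be grouped per speaker. Whoever holds the key can re-link the data.
    """
    digest = hmac.new(secret_key, speaker_id.encode("utf-8"), hashlib.sha256)
    return "spk_" + digest.hexdigest()[:16]


def redact_transcript(text: str) -> str:
    """Mask emails first, then phone numbers, with fixed placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)
```

For example, `redact_transcript("Call me at +1 415-555-0100 or mail jane.doe@example.com")` returns `"Call me at [PHONE] or mail [EMAIL]"`.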

Controlled Access: Access should follow the principle of least privilege. Evaluators only see what is necessary for their task. This reduces exposure risk and limits potential misuse of data.
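One simple way to apply least privilege is to filter each record down to the fields a role actually needs before it reaches the evaluator. The role names and field names below are hypothetical, and a real deployment would enforce this in the storage or API layer rather than in application code alone.

```python
# Hypothetical roles and fields, chosen only to illustrate the idea.
ROLE_FIELDS = {
    "listener": {"clip_id", "audio_path"},
    "linguist": {"clip_id", "audio_path", "transcript"},
    "auditor": {"clip_id", "consent_status"},
}


def view_for_role(record: dict, role: str) -> dict:
    """Return only the fields the given role is allowed to see.

    Unknown roles get an empty view (deny by default).
    """
    allowed = ROLE_FIELDS.get(role, set())
    return {key: value for key, value in record.items() if key in allowed}


record = {
    "clip_id": "c1",
    "audio_path": "clips/c1.wav",
    "transcript": "hello world",
    "speaker_name": "Jane Doe",
    "consent_status": "granted",
}
listener_view = view_for_role(record, "listener")
# listener_view contains clip_id and audio_path only
```

Denying by default means a misconfigured or misspelled role sees nothing, which is usually the safer failure mode.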

Audit Trails and Metadata Tracking: Every interaction with data should be logged. Tracking who accessed what and when creates accountability and enables fast investigation of anomalies. It also strengthens compliance and internal governance.
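A minimal sketch of such a log is shown below. It records who did what to which resource and when, and chains each entry to the previous one with a hash so that later edits become detectable. The `AuditLog` class and its field names are assumptions for this example; an in-memory list stands in for what would normally be append-only storage.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """In-memory, hash-chained access log (illustrative only)."""

    GENESIS = "0" * 64  # placeholder hash that precedes the first entry

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself.
        body = {k: v for k, v in entry.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def record(self, actor: str, action: str, resource: str) -> dict:
        """Append an entry that links to the hash of the previous entry."""
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "resource": resource,
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return False if any entry was altered or the chain was broken."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True
```

Calling `log.record("evaluator_17", "play", "clip/c1")` after each access and running `log.verify()` periodically gives a basic tamper check; the actor and resource strings here are made up.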

Regular Training and Awareness: Evaluators must be trained in privacy practices and ethical handling of data. Ongoing education ensures that privacy is consistently maintained, not just enforced through systems.

Data Minimization: Collect and retain only the data required for evaluation. Reducing unnecessary data exposure lowers risk and simplifies compliance while keeping evaluation focused and efficient.
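Data minimization can be sketched as two filters: keep only the fields the evaluation needs, and drop records older than a retention window. The field names and the 90-day window below are assumptions for illustration; the right values depend on the evaluation task and the applicable policy.

```python
from datetime import datetime, timedelta, timezone

# Assumed values for this sketch, not recommendations.
REQUIRED_FIELDS = ("clip_id", "audio_path", "rating")
RETENTION = timedelta(days=90)


def minimize(records: list, now: datetime) -> list:
    """Drop expired records and strip fields the evaluation does not need.

    Each record is expected to carry a timezone-aware `collected_at` datetime.
    """
    cutoff = now - RETENTION
    kept = []
    for record in records:
        if record["collected_at"] < cutoff:
            continue  # past the retention window
        kept.append({f: record[f] for f in REQUIRED_FIELDS if f in record})
    return kept
```

Passing `now` explicitly keeps the function deterministic, which makes the retention rule easy to test.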

Practical Takeaway

Effective data privacy in AI evaluation is built on layered safeguards. Combining anonymization, least-privilege access, audit logging, evaluator training, and data minimization protects sensitive data without compromising evaluation quality.

Conclusion

Data privacy is not a constraint on evaluation. It is an enabler of trust and long-term scalability. By embedding strong privacy practices into evaluation workflows, teams can deliver reliable insights while protecting user data. For more details or tailored solutions, feel free to contact us.
