How do cultural and contextual differences affect evaluation?

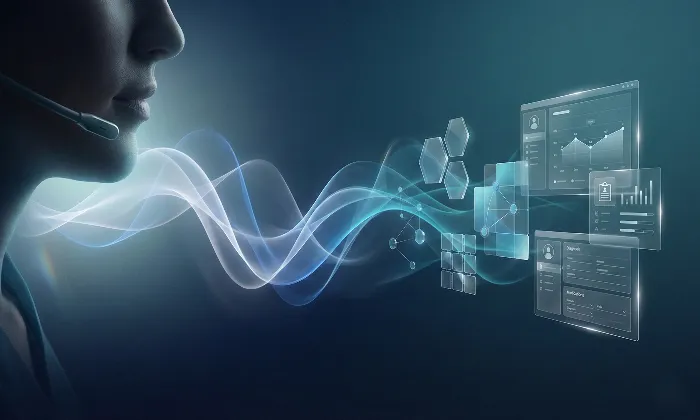

In Text-to-Speech (TTS) systems, cultural and contextual nuances are not peripheral variables. They directly influence perception, trust, and adoption. A model can be technically precise yet perceptually misaligned if cultural expectations are ignored.

TTS evaluation is not simply about fluency or pronunciation accuracy. It is about whether the generated voice feels appropriate, authentic, and contextually aligned for its intended audience.

Why Cultural Context Shapes Perception

Speech carries cultural expectations about rhythm, emotional intensity, directness, and pacing. A delivery style that feels confident in one region may sound aggressive in another. A neutral tone in one culture may feel disengaged elsewhere.

Perceived naturalness is culturally conditioned. If evaluation panels do not reflect the target audience, high ratings can create false confidence in the model.

For example, an American English voice with restrained intonation may perform well in US-based panels but appear flat to audiences accustomed to more expressive speech patterns. Without region-specific validation, deployment risk increases.
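One way to catch this kind of panel mismatch early is to compare the panel's composition against the intended deployment regions before collecting any ratings. The following is a minimal sketch, not a prescribed method: the function name `panel_coverage`, the `min_raters` threshold, and the region labels are all assumptions made for illustration.

```python
from collections import Counter

def panel_coverage(rater_regions, target_regions, min_raters=5):
    """Return target regions whose rater count falls below min_raters.

    min_raters is an arbitrary illustrative threshold, not a recommended value.
    """
    counts = Counter(rater_regions)
    return {
        region: counts.get(region, 0)
        for region in target_regions
        if counts.get(region, 0) < min_raters
    }

# Hypothetical panel: twelve raters from one region, two from another.
panel = ["en-US"] * 12 + ["en-IN"] * 2

# Hypothetical deployment targets, including one region with no raters at all.
gaps = panel_coverage(panel, target_regions=["en-US", "en-IN", "en-NG"])
print(gaps)  # {'en-IN': 2, 'en-NG': 0}
```

Any region that appears in the result is under-represented, so scores gathered from this panel would say little about how the voice is perceived there.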

Where Cultural Differences Influence TTS Evaluation

Accent and Dialect Alignment: Regional variation is not cosmetic. Accent mismatch can reduce trust, especially in high-engagement contexts like education, customer service, or public announcements.

Emotional Calibration: Emotional expressiveness varies across cultures. Some contexts prioritize warmth and enthusiasm. Others value restraint and subtlety. Evaluation frameworks must reflect these expectations rather than applying universal scoring standards.

Contextual Tone Fit: Application matters. A children’s learning app requires animation and engagement. A financial reporting system requires stability and authority. Cultural context shapes what “appropriate tone” means in each use case.

Conversational Norms: Pause duration, turn-taking rhythm, and emphasis patterns differ across languages and cultures. These subtle traits influence whether speech feels authentic or artificial.

Practical Implications for Evaluation Design

Cultural alignment must be built into the evaluation design itself rather than checked after the fact:

Use native evaluators from target deployment regions

Segment panels by geography and dialect where relevant

Evaluate emotional appropriateness as a distinct attribute

Align prompts with real-world contextual usage

Avoid generalizing results from one region to another

Without these safeguards, evaluation may validate a technically strong model that fails perceptually at scale.
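The segmentation and attribute-level points above can be sketched in a few lines of Python. This is an illustrative example under stated assumptions: the rating records, the region labels, the attribute names (`pronunciation`, `emotional_fit`), and the 1 to 5 scale are hypothetical and do not come from any real evaluation.

```python
from collections import defaultdict
from statistics import mean

def segment_scores(ratings):
    """Group ratings by (region, attribute) and return the mean of each group."""
    groups = defaultdict(list)
    for r in ratings:
        groups[(r["region"], r["attribute"])].append(r["score"])
    return {key: mean(scores) for key, scores in groups.items()}

def regional_gap(segmented, attribute):
    """Spread between the highest and lowest regional mean for one attribute."""
    values = [v for (_, attr), v in segmented.items() if attr == attribute]
    return max(values) - min(values)

# Hypothetical ratings on an assumed 1-5 scale.
ratings = [
    {"region": "en-US", "attribute": "pronunciation", "score": 5},
    {"region": "en-US", "attribute": "emotional_fit", "score": 4},
    {"region": "en-US", "attribute": "emotional_fit", "score": 4},
    {"region": "en-IN", "attribute": "pronunciation", "score": 5},
    {"region": "en-IN", "attribute": "emotional_fit", "score": 2},
    {"region": "en-IN", "attribute": "emotional_fit", "score": 2},
]

segmented = segment_scores(ratings)
pronunciation_gap = regional_gap(segmented, "pronunciation")
emotional_gap = regional_gap(segmented, "emotional_fit")
print(pronunciation_gap, emotional_gap)
```

In this made-up data, pronunciation scores agree across both regions while emotional fit diverges. A single pooled score would hide that difference, which is why emotional appropriateness is tracked as its own attribute and results are not generalized from one region to another.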

Practical Takeaway

Cultural nuance is not an optimization layer. It is a core evaluation dimension.

Effective TTS validation requires regionally representative panels, context-aware rubrics, and attribute-level diagnostics that separate pronunciation accuracy from emotional authenticity.

At FutureBeeAI, cultural sensitivity is embedded into evaluation design through native speaker panels, regional segmentation, and structured perceptual diagnostics. The objective is not simply intelligible speech. It is speech that resonates within its intended cultural and contextual environment.

A TTS model that ignores cultural nuance may function. A culturally aligned model earns trust.
