How should model evaluation results be communicated to non-technical teams?

Imagine you’re entrusted with navigating a ship through uncharted waters. Your maps are full of intricate detail, but the crew, unfamiliar with nautical charts, needs clear directions. Communicating model evaluation results to non-technical teams works the same way: the data is complex, but the message must be clear and actionable. Getting this right is what lets teams make informed decisions and put AI models to good use.

Why Communicating Model Evaluation Matters

When model evaluation results are communicated poorly, the resulting misunderstandings can lead to misguided business strategies or poor user experiences. Consider this: a study found that 84% of executives have been misled by misunderstood data, which underscores the need for clarity. The task is not just to translate data but to turn it into a narrative that resonates across teams. When evaluation insights are communicated properly, everyone understands both the model's capabilities and its limitations.

Strategies for Clear and Impactful Communication

Simplify, but Don’t Oversimplify: Focus on key performance indicators (KPIs) that align directly with the model's purpose. In text-to-speech (TTS), for instance, naturalness and intelligibility are critical. Explaining these metrics in terms of their effect on user interaction helps stakeholders understand why they matter without requiring deep technical knowledge.
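
As a rough illustration of simplifying without oversimplifying, the sketch below turns raw listener ratings into a single plain-language sentence. It assumes ratings are collected on the standard 5-point opinion scale (1 = bad, 5 = excellent). The function name `summarize_mos` and the sample ratings are hypothetical, invented here for illustration rather than taken from any particular evaluation tool.

```python
from statistics import mean

# Labels of the 5-point absolute category rating scale used for MOS.
ACR_LABELS = {1: "bad", 2: "poor", 3: "fair", 4: "good", 5: "excellent"}


def summarize_mos(metric_name, ratings):
    """Turn raw 1-5 listener ratings into a one-sentence summary."""
    if not ratings:
        raise ValueError("ratings must not be empty")
    if any(r < 1 or r > 5 for r in ratings):
        raise ValueError("ratings must be on the 1-5 scale")
    score = mean(ratings)
    # Map the average to the nearest scale label (halves round up).
    label = ACR_LABELS[int(score + 0.5)]
    # Share of individual ratings that were 4 ("good") or higher.
    share_high = sum(1 for r in ratings if r >= 4) / len(ratings)
    return (
        f"{metric_name}: listeners rated it {score:.1f} out of 5 ({label}), "
        f"and {share_high:.0%} of ratings were 4 or higher."
    )


# Hypothetical ratings, made up for this example only.
print(summarize_mos("Naturalness", [4, 5, 4, 3, 4, 5, 4, 4, 5, 3]))
```

Reporting the share of high ratings next to the average is one way to avoid oversimplifying: it keeps a single number from hiding how much listeners disagreed.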

Harness the Power of Visualization: Visual tools help bridge the understanding gap between technical and non-technical audiences. Graphs and infographics can illustrate trends, comparisons, and outcomes. For example, a comparative bar graph showing improvements in mean opinion scores (MOS) across different model iterations can clearly demonstrate progress.
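
The comparison described above can be sketched in a few lines. This is a minimal, dependency-free example that prints a text bar chart of mean opinion scores per model iteration. The version names and ratings are hypothetical placeholders, and `mos_bar_chart` is an illustrative helper, not part of any library.

```python
from statistics import mean


def mos_bar_chart(ratings_by_version, width=40, scale_max=5.0):
    """Render the mean opinion score of each model version as a text bar."""
    lines = []
    for version, ratings in ratings_by_version.items():
        score = mean(ratings)
        # Scale the bar so that a perfect score fills the full width.
        bar = "#" * round(score / scale_max * width)
        lines.append(f"{version:<6}{bar} {score:.2f}")
    return "\n".join(lines)


# Hypothetical listener ratings (1-5) for three model iterations.
ratings = {
    "v1": [3, 3, 4, 3, 2],
    "v2": [4, 3, 4, 4, 3],
    "v3": [4, 5, 4, 4, 5],
}
print(mos_bar_chart(ratings))
```

For a slide or report, the same dictionary of ratings could be handed to a plotting library to produce a graphical bar chart. The text version is shown only because it runs anywhere.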

Connect to Business Outcomes: Evaluation metrics should always be tied to business impact. For example, improved naturalness in a TTS system can increase user engagement, improve product adoption, and strengthen brand trust. When evaluation results are connected to outcomes that matter to leadership, the insights become far more meaningful.

Craft a Compelling Narrative: Data alone rarely resonates. Framing results in a relatable context helps teams understand the significance of evaluation outcomes. For instance, explaining that a TTS system sounds natural enough to feel like a real conversation helps stakeholders visualize the user experience rather than just seeing abstract numbers.

Encourage Dialogue and Interaction: Creating space for questions and discussion allows non-technical teams to clarify uncertainties and contribute valuable perspectives. This collaborative approach often reveals use cases or risks that purely technical evaluations might overlook.

Practical Takeaway

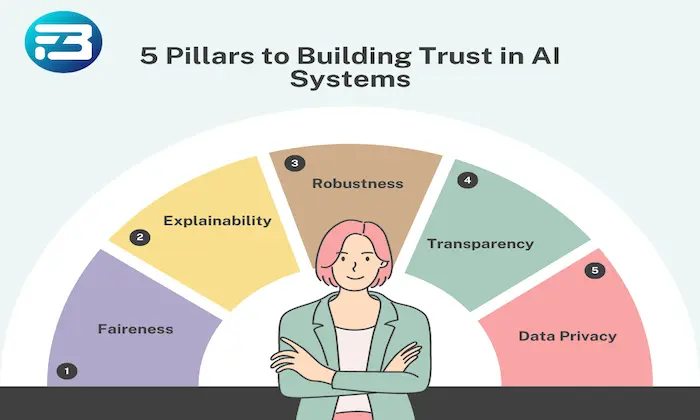

Effective communication of model evaluation results requires clarity, relevance, and engagement. By simplifying metrics, using visualization, connecting insights to business outcomes, building narratives, and encouraging dialogue, teams can better understand evaluation results and make confident decisions.

Platforms like FutureBeeAI help organizations streamline evaluation workflows and communication across teams. This ensures that insights from model evaluation translate into meaningful improvements in model performance and user experience.

Communicating model evaluation results effectively can be the difference between a team that navigates with confidence and one that struggles to interpret complex data. Clear communication ensures that evaluation insights guide the right decisions and help teams build AI systems that perform reliably in real-world environments.

FAQs

Q. Why is it difficult for non-technical teams to understand model evaluation results?

A. Model evaluation often relies on specialized metrics and technical terminology. Without proper explanation or context, these details can become confusing for non-technical stakeholders who are more focused on business impact than technical implementation.

Q. How can technical teams make evaluation results easier to understand?

A. Technical teams can improve understanding by focusing on key metrics, using clear visualizations, explaining results through real-world examples, and connecting model performance directly to business outcomes. These approaches make evaluation insights more accessible and actionable.
