Why are spreadsheets and ad-hoc listening tests not enough for TTS evaluation?

In Text-to-Speech (TTS) evaluation, many teams still rely on spreadsheets and informal listening tests to judge model quality. While these methods may appear convenient, they often fail to capture the complexity of how users actually experience synthetic speech. As TTS systems become more integrated into real-world applications, evaluation approaches must move beyond simple tools and ad-hoc processes.

A structured evaluation framework is necessary to accurately assess speech quality and guide meaningful model improvements.

Limitations of Spreadsheet-Based Evaluation

Spreadsheets are commonly used to record evaluation scores, but they often reduce complex perceptual judgments to simple numerical values. Metrics such as Mean Opinion Score (MOS), typically the average of listener ratings on a 1-to-5 scale, provide a quick overview of perceived quality, but they cannot fully capture the nuances of speech perception.

Important attributes such as naturalness, emotional tone, and conversational rhythm may be hidden behind aggregated scores. As a result, teams may overlook subtle quality issues that significantly affect user experience.

This matters most when evaluating speech systems trained on large TTS datasets, where even small perceptual differences can change how users judge the system.
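To make the aggregation problem concrete, here is a minimal Python sketch. The system names, attribute names, and ratings are all invented for illustration and do not come from any real evaluation. It shows two systems that share the same overall score even though one of them has a clear weakness in emotional tone.

```python
from statistics import mean

# Hypothetical 1-to-5 listener ratings per attribute (illustrative numbers only).
ratings = {
    "system_a": {
        "naturalness": [4, 4, 4, 4],
        "prosody": [4, 4, 4, 4],
        "emotional_tone": [4, 4, 4, 4],
    },
    "system_b": {
        "naturalness": [5, 5, 5, 5],
        "prosody": [5, 5, 5, 5],
        "emotional_tone": [2, 2, 2, 2],
    },
}


def overall_score(system):
    """Average every rating together, as a single spreadsheet cell would."""
    all_ratings = [r for attribute in system.values() for r in attribute]
    return mean(all_ratings)


def attribute_scores(system):
    """Average each attribute separately so weak spots stay visible."""
    return {name: mean(values) for name, values in system.items()}


for name, system in ratings.items():
    print(name, "overall:", overall_score(system), "by attribute:", attribute_scores(system))
```

Both systems average out to the same overall value, so a single column of scores would rank them as equal. Only the per-attribute view shows that the second system is rated poorly on emotional tone.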

Challenges with Ad-Hoc Listening Tests

Informal listening tests introduce additional reliability challenges. Without standardized evaluation conditions, results may vary widely depending on environmental factors or evaluator context.

Factors such as background noise, device quality, evaluator fatigue, or personal bias can influence judgments. When evaluation conditions are inconsistent, it becomes difficult to determine whether differences in scores reflect true model performance or external influences.

These inconsistencies reduce the reliability of evaluation results and make it harder to compare model versions objectively.
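One rough way to check whether a score difference is larger than the noise in the ratings is to attach a confidence interval to each mean. The sketch below assumes a normal approximation and uses made-up ratings for two hypothetical model versions. It is a coarse heuristic, not a substitute for a properly designed listening test or a formal significance test.

```python
from math import sqrt
from statistics import mean, stdev


def mos_with_ci(scores, z=1.96):
    """Return (mean, half_width) for an approximate 95% confidence interval.

    Uses a normal approximation, which is only a rough guide for small panels.
    """
    half_width = z * stdev(scores) / sqrt(len(scores))
    return mean(scores), half_width


def intervals_overlap(scores_a, scores_b):
    """True when the two approximate intervals overlap."""
    mean_a, hw_a = mos_with_ci(scores_a)
    mean_b, hw_b = mos_with_ci(scores_b)
    return (mean_a - hw_a) <= (mean_b + hw_b) and (mean_b - hw_b) <= (mean_a + hw_a)


# Hypothetical ratings from an informal session (illustrative numbers only).
model_v1 = [4, 3, 4, 5, 3, 4, 4, 3]
model_v2 = [4, 4, 5, 4, 3, 5, 4, 4]

for label, scores in (("v1", model_v1), ("v2", model_v2)):
    m, hw = mos_with_ci(scores)
    print(f"{label}: MOS {m:.2f} +/- {hw:.2f}")

print("intervals overlap:", intervals_overlap(model_v1, model_v2))
```

When the intervals overlap, as they do for these example numbers, the apparent improvement could plausibly come from evaluator or environment variation rather than from the model itself. More ratings under controlled conditions would be needed before drawing a conclusion.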

The Risks of Incomplete Evaluation

When evaluation processes rely on superficial methods, models may appear successful during testing while failing to meet real-world expectations.

For example, a speech system might perform well in controlled lab tests yet struggle when deployed in real user environments. If evaluation fails to capture issues such as unnatural prosody or emotional mismatch, users may perceive the system as robotic or unreliable.

This gap between laboratory evaluation and real-world performance can lead to user dissatisfaction and increased operational costs.

Building a Structured TTS Evaluation Framework

Stage-based evaluation: Evaluation should evolve throughout the model lifecycle. Early testing may focus on identifying major issues, while later stages involve comprehensive pre-deployment testing and ongoing monitoring.

Native evaluator involvement: Native speakers can identify pronunciation and contextual errors that automated metrics or non-native evaluators may miss.

Attribute-wise evaluation: Evaluating specific attributes such as naturalness, prosody, pronunciation accuracy, and emotional tone provides clearer insights than relying on single aggregated scores.

Standardized testing environments: Consistent evaluation conditions help ensure that results reflect model performance rather than environmental variables.
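The components above could be captured in a structured rating record instead of a free-form spreadsheet row. The following Python sketch is one possible shape, not a prescribed schema: the field names, the attribute list, and the protocol rules (headphones, quiet room, native speaker) are assumptions chosen for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean

# Assumed attribute set, taken from the attributes named in this article.
ATTRIBUTES = ("naturalness", "prosody", "pronunciation", "emotional_tone")


@dataclass
class Rating:
    sample_id: str
    evaluator_id: str
    native_speaker: bool
    device: str        # e.g. "headphones" or "laptop_speakers"
    quiet_room: bool
    scores: dict       # attribute name -> rating from 1 to 5


def meets_protocol(rating, required_device="headphones"):
    """Check a rating against the assumed standardized listening conditions."""
    return (
        rating.native_speaker
        and rating.quiet_room
        and rating.device == required_device
        and set(rating.scores) == set(ATTRIBUTES)
        and all(1 <= value <= 5 for value in rating.scores.values())
    )


def summarize(ratings):
    """Return (per-attribute means over accepted ratings, number rejected)."""
    accepted = [r for r in ratings if meets_protocol(r)]
    per_attribute = defaultdict(list)
    for r in accepted:
        for name, value in r.scores.items():
            per_attribute[name].append(value)
    summary = {name: mean(values) for name, values in per_attribute.items()}
    return summary, len(ratings) - len(accepted)


# Hypothetical session data (illustrative only).
example = [
    Rating("utt_01", "ev_1", True, "headphones", True,
           {"naturalness": 4, "prosody": 3, "pronunciation": 5, "emotional_tone": 3}),
    Rating("utt_01", "ev_2", True, "headphones", True,
           {"naturalness": 5, "prosody": 4, "pronunciation": 5, "emotional_tone": 2}),
    Rating("utt_01", "ev_3", True, "laptop_speakers", False,
           {"naturalness": 2, "prosody": 2, "pronunciation": 2, "emotional_tone": 2}),
]

summary, rejected = summarize(example)
print(summary, "rejected:", rejected)
```

Recording the listening conditions next to each score makes it possible to exclude or flag ratings collected outside the agreed setup, so that per-attribute averages reflect the model rather than the environment.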

Practical Takeaway

Effective TTS evaluation requires more than spreadsheets and informal listening sessions. Speech quality is shaped by complex perceptual factors that require structured methodologies and controlled evaluation processes.

By implementing stage-based evaluation, attribute-wise analysis, native evaluator involvement, and standardized testing environments, organizations can gain a more accurate understanding of how their systems perform in real-world scenarios.

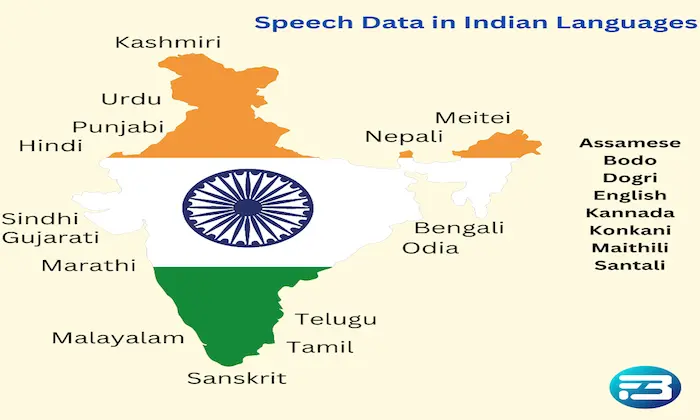

At FutureBeeAI, evaluation frameworks combine structured methodologies with human listening evaluation to ensure speech systems meet both technical and perceptual quality standards. This approach helps organizations deliver reliable speech experiences using high-quality TTS speech datasets and comprehensive speech data collection strategies.

Organizations interested in strengthening their evaluation processes can explore more details or connect through the FutureBeeAI contact page.

FAQs

Q. Why are spreadsheets insufficient for evaluating TTS systems?

A. Spreadsheets reduce complex perceptual judgments to simple numerical scores. While useful for recording data, they cannot capture nuanced speech qualities such as naturalness, prosody, or emotional tone.

Q. What improves the reliability of TTS evaluations?

A. Structured evaluation frameworks, standardized testing environments, attribute-based assessment, and diverse human evaluators significantly improve the reliability of TTS evaluation results.
