What challenges arise when scaling human TTS evaluation?

Scaling human evaluation for Text-to-Speech systems is not a linear exercise. Increasing evaluator volume without process discipline amplifies noise, inconsistency, and operational strain. As evaluator count grows, coordination complexity increases disproportionately.

Without structured design, scale introduces variability that erodes reliability rather than strengthening it.

Core Challenges in Scaling Human Evaluation

Evaluator Consistency Drift: As panels expand, interpretation of attributes such as naturalness, prosody, and emotional alignment begins to diverge. Small perceptual differences become exaggerated when scoring standards are not tightly calibrated.

Subjectivity Amplification: Human perception varies by culture, listening context, and personal expectation. Scaling multiplies perceptual variance unless controlled through structured rubrics and calibration sessions.

Onboarding Variability: Rapid evaluator recruitment often reduces training depth. Insufficient onboarding leads to inconsistent application of evaluation criteria.

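Operational Overhead Growth: Quality assurance, task distribution, metadata logging, and disagreement resolution grow faster than headcount. A panel of n evaluators contains n(n-1)/2 possible evaluator pairs, so the comparisons needed to check agreement grow roughly quadratically rather than linearly.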

Fatigue and Attention Risk: Large-scale evaluation campaigns increase cognitive load. Without session controls, fatigue bias can distort results.

Structural Strategies for Sustainable Scaling

Standardized Attribute-Wise Rubrics: Break evaluation into defined dimensions such as intelligibility, prosody, pronunciation accuracy, and emotional appropriateness. Structured criteria reduce interpretive drift.

Calibration Sessions and Benchmark Anchors: Regular alignment exercises ensure evaluators apply scoring standards consistently. Controlled reference samples stabilize judgment thresholds.
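One way to make the benchmark-anchor idea concrete is to compare each evaluator's ratings on a few reference samples against agreed reference scores. The sketch below is illustrative only: the function name `calibration_report`, the 0.5-point threshold, and the sample data are assumptions, not part of any specific evaluation platform.

```python
from statistics import mean

def calibration_report(anchor_scores, evaluator_ratings, max_mean_abs_error=0.5):
    """Compare each evaluator's anchor ratings with the reference scores.

    The threshold is an assumed value; a real program would tune it.
    """
    report = {}
    for evaluator, ratings in evaluator_ratings.items():
        # Only use anchors this evaluator actually rated.
        errors = [abs(ratings[s] - ref) for s, ref in anchor_scores.items() if s in ratings]
        if not errors:
            continue
        mae = mean(errors)
        report[evaluator] = {"mae": mae, "needs_recalibration": mae > max_mean_abs_error}
    return report

# Hypothetical reference scores and evaluator ratings on a 1-5 scale.
anchors = {"anchor_01": 4.0, "anchor_02": 2.0, "anchor_03": 5.0}
ratings = {
    "eval_a": {"anchor_01": 4, "anchor_02": 2, "anchor_03": 5},
    "eval_b": {"anchor_01": 5, "anchor_02": 4, "anchor_03": 4},
}
report = calibration_report(anchors, ratings)
```

In this made-up data, `eval_a` matches every anchor while `eval_b` is off by more than a point on average, so only `eval_b` would be routed back to a calibration session.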

Layered Quality Control Architecture: Implement primary evaluation, secondary audit sampling, and statistical anomaly detection. This multi-tier system helps catch systematic bias before it accumulates.
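The audit and anomaly tiers can be sketched in a few lines. This is a minimal illustration under stated assumptions: the helper names, the 10% audit fraction, and the z-score threshold of 1.5 are hypothetical choices, and a simple z-score on evaluator means stands in for whatever anomaly detection a production pipeline would use.

```python
import random
from statistics import mean, pstdev

def pick_audit_sample(rating_ids, fraction=0.1, seed=0):
    """Randomly select a fraction of primary ratings for secondary review."""
    rng = random.Random(seed)  # fixed seed keeps the audit set reproducible
    k = max(1, round(len(rating_ids) * fraction))
    return rng.sample(rating_ids, k)

def flag_outlier_evaluators(evaluator_means, z_threshold=1.5):
    """Flag evaluators whose mean rating sits far from the panel average."""
    values = list(evaluator_means.values())
    center, spread = mean(values), pstdev(values)
    if spread == 0:
        return []  # everyone agrees; nothing to flag
    return [e for e, m in evaluator_means.items()
            if abs(m - center) / spread > z_threshold]

# Hypothetical data: 20 rating IDs and five evaluators' mean scores.
audit = pick_audit_sample([f"r{i}" for i in range(20)])
flagged = flag_outlier_evaluators(
    {"eval_1": 3.9, "eval_2": 4.1, "eval_3": 4.0, "eval_4": 4.0, "eval_5": 2.0}
)
```

With small panels a single outlier inflates the standard deviation, which is why the assumed threshold is lower than the conventional 2 or 3; a median-based measure would be a reasonable alternative.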

Controlled Diversity Deployment: Diversity in listener panels improves representational fairness, but it must be managed through consistent task framing and shared evaluation definitions.

Performance Monitoring and Feedback Loops: Track evaluator agreement rates, rating variance, and completion behavior to identify drift early.
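As a rough illustration of these monitoring signals, the sketch below computes per-sample rating variance and a pairwise agreement rate, where two evaluators "agree" if their scores differ by at most one point. The function names, the one-point tolerance, and the ratings are assumptions made for the example.

```python
from itertools import combinations
from statistics import pvariance

def item_variance(ratings_by_item):
    """Population variance of the ratings each sample received."""
    return {item: pvariance(list(r.values()))
            for item, r in ratings_by_item.items() if len(r) >= 2}

def pairwise_agreement(ratings_by_item, tolerance=1):
    """Share of co-rated samples on which each evaluator pair is within tolerance."""
    agree, total = {}, {}
    for ratings in ratings_by_item.values():
        for a, b in combinations(sorted(ratings), 2):
            pair = (a, b)
            total[pair] = total.get(pair, 0) + 1
            if abs(ratings[a] - ratings[b]) <= tolerance:
                agree[pair] = agree.get(pair, 0) + 1
    return {pair: agree.get(pair, 0) / n for pair, n in total.items()}

# Hypothetical 1-5 naturalness ratings from three evaluators.
ratings = {
    "utt_1": {"a": 4, "b": 4, "c": 2},
    "utt_2": {"a": 5, "b": 4, "c": 3},
    "utt_3": {"a": 3, "b": 3, "c": 1},
}
variances = item_variance(ratings)
agreement = pairwise_agreement(ratings)
```

Here evaluators `a` and `b` agree on every sample, while `c` consistently rates about two points lower, which is the kind of pattern that would prompt a recalibration conversation rather than silent averaging.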

Session Design Optimization: Limit task length and rotate sample order to reduce fatigue-induced bias.
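A simple way to apply both controls is to shuffle the sample order separately for each evaluator and cap the number of samples per session. The sketch below assumes a hypothetical `build_sessions` helper and a cap of 20 samples; the right cap depends on clip length and task difficulty.

```python
import random

def build_sessions(sample_ids, evaluator_id, max_per_session=20, seed=0):
    """Return capped sessions with an evaluator-specific, reproducible order."""
    # Seeding with the evaluator ID gives each evaluator a different order
    # while keeping the plan repeatable for auditing.
    rng = random.Random(f"{seed}:{evaluator_id}")
    order = list(sample_ids)
    rng.shuffle(order)
    return [order[i:i + max_per_session]
            for i in range(0, len(order), max_per_session)]

# Hypothetical batch of 50 utterances for one evaluator.
samples = [f"utt_{i}" for i in range(50)]
sessions = build_sessions(samples, "eval_a", max_per_session=20)
```

For 50 samples and a cap of 20 this yields three sessions of 20, 20, and 10 items. Because the order differs per evaluator, no single sample is always heard last, when fatigue is most likely.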

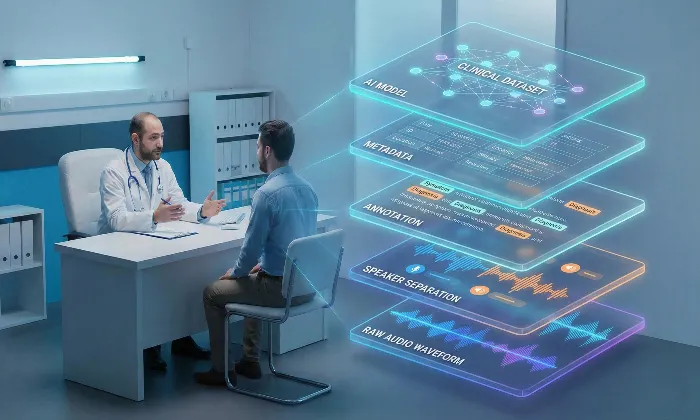

The Role of Structured Infrastructure

At FutureBeeAI, scalable evaluation frameworks integrate structured rubrics, controlled onboarding, audit layers, and metadata discipline to maintain perceptual consistency across expanding evaluator networks. Scaling becomes an engineered system rather than a volume expansion exercise.

Practical Takeaway

Scaling human TTS evaluation requires infrastructure, not just headcount. Consistency control, structured criteria, calibration discipline, and layered quality assurance are essential to preserve reliability at volume.

When designed correctly, scale enhances confidence rather than diluting signal quality.

To build a scalable, precision-driven human evaluation framework for your TTS systems, connect with FutureBeeAI and strengthen your evaluation operations with structural rigor and perceptual stability.
