What questions should we ask before onboarding an evaluation partner?

Selecting an AI evaluation partner is a decision that directly shapes your model development process. Evaluation partners help determine whether models are ready for deployment, identify hidden weaknesses, and confirm that systems perform reliably in real-world conditions.

For teams building systems such as text-to-speech (TTS) models, a partner with the right expertise and evaluation infrastructure is essential for generating reliable insights and improving model performance.

1. Are Your Evaluation Goals Clearly Defined?

Before selecting a partner, it is important to clarify what you want to achieve through evaluation. Some teams focus on improving model robustness, while others prioritize user experience attributes such as naturalness, clarity, or emotional tone.

For example, evaluating a TTS model requires focusing on perceptual attributes such as prosody and intelligibility rather than relying only on technical metrics. A strong evaluation partner should be able to align evaluation strategies with your specific goals and product requirements.

2. What Evaluation Methodologies Do You Use?

Evaluation methodologies determine how model performance is assessed. Different stages of development require different approaches.

Initial testing may use general metrics such as Mean Opinion Score (MOS) to gauge overall quality.

Model comparisons may rely on paired evaluations or A/B testing to identify subtle differences between models.

Detailed analysis often involves attribute-level scoring for factors such as pronunciation accuracy, rhythm, and emotional tone.

An effective evaluation partner should be capable of applying multiple methodologies depending on the stage of the development cycle.
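
As a rough illustration of the first two approaches, the sketch below computes a Mean Opinion Score with an approximate 95% confidence interval and a simple preference rate for paired comparisons. The 1 to 5 rating scale, the normal-approximation interval, the "A"/"B"/"tie" vote labels, and the sample data are all assumptions made for the example, not a description of any particular partner's process.

```python
import math
from statistics import mean, stdev


def mos_with_ci(ratings, z=1.96):
    """Return (MOS, half-width of an approximate 95% confidence interval).

    Assumes ratings on a 1-5 scale and uses a normal approximation,
    which is only a rough guide for small panels.
    """
    if len(ratings) < 2:
        raise ValueError("need at least two ratings")
    half_width = z * stdev(ratings) / math.sqrt(len(ratings))
    return mean(ratings), half_width


def preference_rate(votes):
    """Share of decided paired votes that prefer model 'A' (ties are ignored)."""
    decided = [v for v in votes if v in ("A", "B")]
    if not decided:
        raise ValueError("no decided votes")
    return sum(v == "A" for v in decided) / len(decided)


# Hypothetical data: eight listener ratings and eight paired votes.
ratings = [4, 5, 3, 4, 4, 5, 3, 4]
votes = ["A", "B", "A", "A", "tie", "A", "B", "A"]

mos, ci = mos_with_ci(ratings)
print(f"MOS = {mos:.2f} +/- {ci:.2f}")
print(f"A preferred in {preference_rate(votes):.0%} of decided pairs")
```

A wide interval or a preference rate close to 50% suggests that more ratings are needed before drawing conclusions about which model is better.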

3. How Do You Handle Evaluator Subjectivity and Disagreement?

Human evaluation inevitably involves subjective perception. Evaluator disagreements can reveal valuable insights about model weaknesses or ambiguous evaluation criteria.

A strong evaluation partner should have structured processes for analyzing evaluator disagreements rather than dismissing them. These insights can help teams understand user perception differences and refine evaluation frameworks.
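
One simple way to surface disagreements for review, rather than averaging them away, is to flag items whose ratings spread widely. The following is a minimal sketch; the item identifiers, the scores, and the threshold of 1.0 are hypothetical, and a real process might use a formal agreement statistic instead.

```python
from statistics import mean, pstdev


def flag_disagreements(scores_by_item, threshold=1.0):
    """Return (item_id, mean, spread) for items whose rating spread
    meets or exceeds the threshold, most contested first.

    The threshold is an illustrative assumption, not a standard value.
    """
    flagged = []
    for item_id, scores in scores_by_item.items():
        spread = pstdev(scores)  # population standard deviation of the ratings
        if spread >= threshold:
            flagged.append((item_id, round(mean(scores), 2), round(spread, 2)))
    return sorted(flagged, key=lambda row: row[2], reverse=True)


# Hypothetical ratings from four evaluators on three utterances.
scores_by_item = {
    "utt_001": [4, 4, 5, 4],
    "utt_002": [1, 5, 2, 5],
    "utt_003": [3, 3, 3, 3],
}

flagged = flag_disagreements(scores_by_item)
for item_id, avg, spread in flagged:
    print(f"{item_id}: mean={avg}, spread={spread}")
```

Flagged items are candidates for a closer look: the sample itself may be ambiguous, or the rating guideline may be read differently by different evaluators.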

4. What Quality Assurance Processes Are Implemented?

Reliable evaluations depend on strong quality control mechanisms. Evaluation partners should implement multiple layers of quality assurance to ensure evaluator reliability and data integrity.

Common practices include attention checks, evaluator calibration sessions, and regular quality reviews. These processes help maintain consistency and reduce the risk of inaccurate evaluations.
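
An attention check can be sketched as a set of control items with known answers mixed into the evaluation queue. In the example below, the response format, the control item names, and the 80% pass threshold are assumptions chosen for illustration.

```python
def attention_pass_rates(responses, gold_answers):
    """Compute each evaluator's pass rate on control items.

    responses: iterable of (evaluator_id, item_id, answer) tuples.
    gold_answers: mapping of control item_id to its expected answer.
    """
    seen, passed = {}, {}
    for evaluator_id, item_id, answer in responses:
        if item_id not in gold_answers:
            continue  # regular item, not an attention check
        seen[evaluator_id] = seen.get(evaluator_id, 0) + 1
        if answer == gold_answers[item_id]:
            passed[evaluator_id] = passed.get(evaluator_id, 0) + 1
    return {e: passed.get(e, 0) / n for e, n in seen.items()}


def reliable_evaluators(pass_rates, min_rate=0.8):
    """Evaluators whose pass rate meets the (assumed) minimum."""
    return {e for e, rate in pass_rates.items() if rate >= min_rate}


# Hypothetical control items and responses.
gold_answers = {"check_1": 1, "check_2": 5}
responses = [
    ("eval_a", "check_1", 1),
    ("eval_a", "check_2", 5),
    ("eval_a", "utt_001", 4),
    ("eval_b", "check_1", 4),
    ("eval_b", "check_2", 5),
]

rates = attention_pass_rates(responses, gold_answers)
print(rates)
print(reliable_evaluators(rates))
```

Ratings from evaluators who fall below the threshold can then be reviewed or excluded before scores are aggregated.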

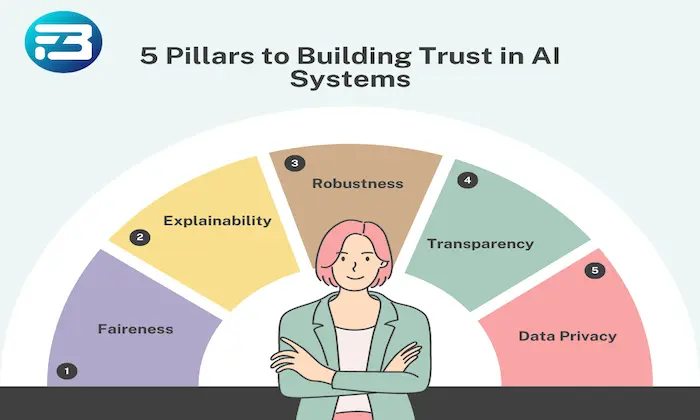

5. How Do You Ensure Transparency and Traceability?

Evaluation transparency is essential for building trust in the results. Organizations should understand how evaluation sessions are documented and tracked.

Evaluation partners should provide clear records of who performed the evaluation, when it occurred, and under what conditions. Detailed metadata tracking allows teams to reproduce results and investigate inconsistencies when necessary.
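
A traceable evaluation record might look something like the sketch below, which captures who rated what, when, and under what conditions. The field names, the JSON-lines serialization, and the sample values are hypothetical and would depend on the partner's actual tooling.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class EvaluationRecord:
    """One rating plus the metadata needed to trace it later.

    All field names here are illustrative assumptions.
    """
    evaluator_id: str
    item_id: str
    model_version: str
    score: int
    evaluated_at: str  # ISO 8601 timestamp, stored in UTC
    conditions: dict = field(default_factory=dict)


def to_json_line(record):
    """Serialize a record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = EvaluationRecord(
    evaluator_id="eval_a",
    item_id="utt_001",
    model_version="tts-v2",
    score=4,
    evaluated_at=datetime(2024, 1, 15, 9, 30, tzinfo=timezone.utc).isoformat(),
    conditions={"playback": "headphones", "locale": "en-US"},
)

line = to_json_line(record)
print(line)
```

Keeping one such record per rating makes it possible to filter results by evaluator, date, or listening condition when a score looks inconsistent.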

Practical Takeaway

Choosing an AI evaluation partner requires more than technical capability. The right partner aligns with your evaluation goals, supports multiple methodologies, manages evaluator subjectivity effectively, maintains strong quality assurance processes, and provides transparent evaluation documentation.

By asking the right questions early, teams can establish partnerships that support reliable evaluation workflows and help ensure models perform effectively in real-world applications.

Organizations developing large-scale speech systems often rely on structured evaluation platforms and curated datasets such as those provided by FutureBeeAI to support consistent and scalable evaluation processes.

FAQs

Q. What evaluation methods are most useful for TTS models?

A. Methods such as Mean Opinion Score, paired comparisons, and attribute-level scoring help evaluate perceptual qualities such as naturalness, prosody, and emotional tone.

Q. How can organizations verify the reliability of an evaluation partner?

A. Organizations should review the partner’s evaluation methodologies, training procedures for evaluators, quality assurance processes, and documentation practices to ensure evaluations remain accurate and transparent.
