What does it mean for a model to “break gracefully”?

In AI and machine learning systems, performance is often treated as a binary outcome: the model works or it fails. In reality, the most reliable systems are designed to fail gracefully rather than collapse entirely. When a model encounters unexpected inputs or edge cases, graceful degradation protects both the user experience and the integrity of the system.

Instead of a sudden breakdown, a well-designed system responds with controlled behavior. Think of it like a parachute deploying during a fall. The situation is not ideal, but the landing remains safe and manageable.

The Importance of Graceful Failure in AI Systems

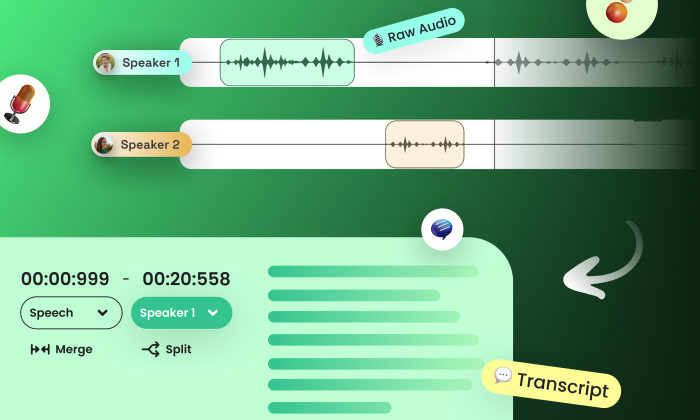

Graceful failure is especially important in systems such as text-to-speech (TTS) models, where users interact directly with AI outputs. When a model encounters unfamiliar inputs or edge conditions, its output quality should degrade without disrupting the entire interaction.

User Experience Protection: Controlled degradation prevents sudden interruptions. Instead of crashing or producing unusable outputs, the system maintains functional communication.

Insight Generation: Failure cases reveal weaknesses in the model. These insights help engineers refine training data, evaluation methods, and system design.

Trust Preservation: Users remain confident in systems that handle errors smoothly. A system that manages mistakes gracefully appears reliable even under imperfect conditions.

How Graceful Failures Appear in TTS Systems

Consider a TTS system encountering a rare or unfamiliar word. A catastrophic failure might produce unintelligible speech or stop processing entirely. A graceful failure, however, might slightly mispronounce the word while still conveying the intended message.

The output remains understandable, and the failure itself highlights a scenario where further improvement is needed.
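
As a rough illustration of that behavior, here is a minimal Python sketch. Everything in it is an assumption made for the example: the tiny `LEXICON`, the `pronounce` function, and the letter-by-letter fallback are hypothetical stand-ins, not how any particular TTS engine works. The point is only that an unknown word still produces speakable output and is recorded for later review.

```python
# Hypothetical pronunciation lexicon; a real system would load a far larger one.
LEXICON = {
    "hello": "HH AH L OW",
    "world": "W ER L D",
}

# Words that triggered the fallback, kept so engineers can review them later.
unknown_words: list[str] = []


def pronounce(word: str) -> tuple[str, bool]:
    """Return (pronunciation, degraded) for a single word.

    Known words come from the lexicon. Unknown words are spelled out
    letter by letter (an assumed fallback) instead of raising an error.
    """
    key = word.lower()
    if key in LEXICON:
        return LEXICON[key], False

    # Graceful path: imperfect but still intelligible output.
    unknown_words.append(key)
    return " ".join(key.upper()), True


if __name__ == "__main__":
    for w in ["hello", "qux"]:
        print(w, pronounce(w))
```

Returning the `degraded` flag alongside the output lets the rest of the pipeline keep running while still counting how often the fallback was needed.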

Strategies for Designing Graceful Failure Mechanisms

Error Anticipation: Systems should include fallback behaviors when encountering unfamiliar inputs. These mechanisms allow models to recover or simplify outputs rather than producing corrupted responses.
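
One common way to structure such fallback behavior is an ordered chain of handlers, sketched below in Python. The names `run_with_fallback`, `neural_engine`, and `simple_engine` are hypothetical and exist only for this example; the engines return strings rather than audio to keep the sketch short.

```python
from typing import Callable, Sequence


def neural_engine(text: str) -> str:
    # Hypothetical primary engine: rejects input it cannot handle.
    if not text.isascii():
        raise ValueError("unsupported characters")
    return f"neural:{text}"


def simple_engine(text: str) -> str:
    # Hypothetical simpler engine: drops characters it cannot handle.
    cleaned = "".join(ch for ch in text if ch.isascii())
    return f"simple:{cleaned}"


def run_with_fallback(
    text: str,
    engines: Sequence[Callable[[str], str]],
    default: str,
) -> tuple[str, str]:
    """Try each engine in order and return (output, name of the source used).

    If every engine raises or returns an empty result, return the safe
    default instead of propagating the error to the user.
    """
    for engine in engines:
        try:
            result = engine(text)
        except Exception:
            continue  # This engine failed; move on to the next one.
        if result:
            return result, engine.__name__
    return default, "default"


if __name__ == "__main__":
    print(run_with_fallback("hello", [neural_engine, simple_engine], "[unavailable]"))
    print(run_with_fallback("café", [neural_engine, simple_engine], "[unavailable]"))
```

Returning the name of the engine that produced the output makes it possible to count how often the system is running in a degraded mode.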

Comprehensive Testing: Evaluation pipelines must include rare edge cases and diverse usage scenarios. Stress-testing models before deployment reveals potential failure points.
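
A stress test of this kind can start very small. The Python sketch below runs a function over a list of awkward inputs and collects every case that raises or returns nothing usable. The `EDGE_CASES` list, the `normalize` stand-in, and the `stress_test` helper are all assumptions chosen for illustration; a real suite would target the actual model and a much broader set of inputs.

```python
from typing import Callable

# Illustrative edge cases: empty input, whitespace, punctuation-heavy names,
# numbers, accented characters, very long input, and emoji.
EDGE_CASES = [
    "",
    "   ",
    "Dr. O'Neil-Smith",
    "3.14159",
    "naïve café",
    "a" * 10_000,
    "😀",
]


def normalize(text: str) -> str:
    # Hypothetical stand-in for the system under test.
    collapsed = " ".join(text.split())
    return collapsed if collapsed else "[silence]"


def stress_test(fn: Callable[[str], str], cases: list[str]) -> list[tuple[str, str]]:
    """Run fn on every case and return (case, reason) for each failure."""
    failures = []
    for case in cases:
        try:
            out = fn(case)
        except Exception as exc:
            failures.append((case, repr(exc)))
            continue
        if not isinstance(out, str) or not out:
            failures.append((case, "empty or non-string output"))
    return failures


if __name__ == "__main__":
    print(len(stress_test(normalize, EDGE_CASES)), "failures")
```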

Continuous Monitoring: Post-deployment monitoring enables early detection of subtle performance degradation. Combining automated metrics with human evaluation improves detection of perceptual issues that metrics alone tend to miss.
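
One simple automated signal is the share of recent requests that needed a degraded response. The Python sketch below tracks that rate over a sliding window and flags when it crosses a threshold, at which point samples could be routed to human reviewers. The `DegradationMonitor` class, the window size, and the threshold are hypothetical values picked for the example, not recommended settings.

```python
from collections import deque


class DegradationMonitor:
    """Track how often recent requests were served in a degraded mode."""

    def __init__(self, window: int = 100, threshold: float = 0.2) -> None:
        # Only the most recent `window` events are kept.
        self.events: deque[bool] = deque(maxlen=window)
        self.threshold = threshold

    def record(self, degraded: bool) -> None:
        self.events.append(degraded)

    @property
    def rate(self) -> float:
        # Fraction of degraded requests in the current window.
        return sum(self.events) / len(self.events) if self.events else 0.0

    def needs_review(self) -> bool:
        # Wait for a full window so a single early failure does not raise an alert.
        return len(self.events) == self.events.maxlen and self.rate > self.threshold


if __name__ == "__main__":
    monitor = DegradationMonitor(window=4, threshold=0.25)
    for degraded in [False, False, True, True]:
        monitor.record(degraded)
    print(monitor.rate, monitor.needs_review())
```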

Common Pitfalls in Failure Management

Neglecting Edge Cases: Many systems are optimized for common scenarios but remain fragile when encountering unusual inputs.

Weak Monitoring Systems: Without ongoing evaluation, small performance issues can evolve into larger failures over time.

Over-Reliance on Metrics: Quantitative metrics often miss perceptual issues such as unnatural speech flow or incorrect tone. Human evaluation remains essential.

Practical Takeaway

AI systems should not be designed only for ideal conditions. They should also be engineered to handle unexpected inputs without breaking the user experience.

Effective resilience strategies include:

Designing fallback behaviors for unexpected inputs: Ensuring models maintain usable outputs even in failure scenarios.

Testing models against diverse real-world scenarios: Stress-testing systems with edge cases and uncommon inputs.

Maintaining continuous monitoring and evaluation: Detecting performance drift and subtle failures early.

Organizations seeking to build resilient AI systems often rely on structured evaluation frameworks such as those offered by FutureBeeAI. If you want to strengthen your model evaluation pipelines and improve system reliability, you can contact us to explore tailored evaluation strategies.

FAQs

Q. What distinguishes graceful failure from catastrophic failure?

A. Graceful failure allows the system to degrade performance in a controlled way while continuing to function. Catastrophic failure results in a complete system breakdown that disrupts user interaction.

Q. How can AI teams monitor graceful failure in production?

A. Teams should combine automated monitoring with human evaluation. Tracking edge-case behavior, reviewing user feedback, and running periodic evaluation cycles help detect failures before they escalate.
