At what scale does internal TTS evaluation break down?

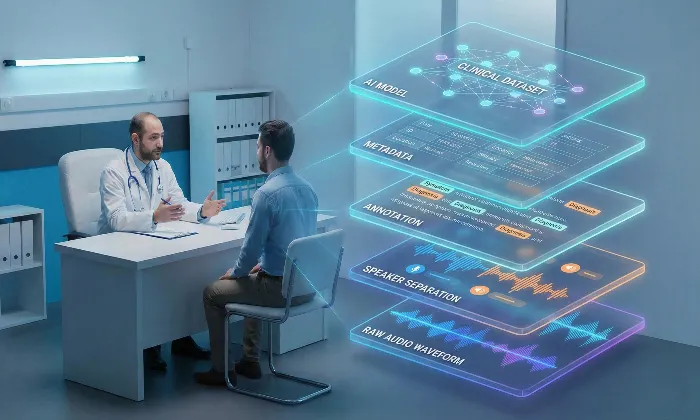

As Text-to-Speech (TTS) systems evolve from small prototypes to large production systems, the internal evaluation processes that once worked reliably often begin to break down. This shift typically happens when datasets grow larger and model architectures become more complex. At this stage, automated metrics and small internal review teams struggle to capture the full range of human perception. For teams working with expanding speech datasets, evaluation strategies must evolve alongside model complexity.

Internal testing environments often fail to reflect the diversity and unpredictability of real-world user interactions. As a result, models that perform well in controlled internal testing may still struggle when deployed to actual users. This gap highlights the importance of rethinking evaluation strategies as systems scale.

The Challenges of Scaling TTS Evaluation

Scaling evaluation introduces a key challenge: evaluation methods that worked well during early development often become insufficient once the system reaches production scale.

Automated metrics may detect technical improvements, but they often fail to capture perceptual qualities such as naturalness, conversational rhythm, or emotional resonance. As models grow more sophisticated, these perceptual attributes become increasingly important in determining whether a system meets user expectations.

The Pitfalls of Internal Evaluation

Limited Listener Diversity: Internal evaluation groups are often small and composed primarily of engineers or technical staff. While these evaluators are effective at identifying technical issues, they may not represent the broader user population. This limitation can cause teams to overlook cultural, linguistic, or perceptual differences in how synthesized speech is received.

Overreliance on Automated Metrics: Automated metrics, such as word error rate from a speech recognizer or a model-predicted Mean Opinion Score (MOS), provide useful signals but cannot fully capture how speech feels to listeners. A TTS model may achieve strong automated scores while still producing speech that sounds emotionally flat or awkward in real-world usage.

Evaluator Fatigue: As evaluation workloads increase, evaluators may experience fatigue that affects judgment quality. Listening to large numbers of samples in rapid succession can reduce sensitivity to subtle speech quality issues, resulting in inconsistent or rushed feedback.
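One common way to limit fatigue is to cap how many samples an evaluator hears in a single sitting and to randomize presentation order. The sketch below illustrates that idea only; the function name `build_sessions`, the cap of 20 samples, and the sample IDs are assumptions made for illustration, not values taken from any particular evaluation protocol.

```python
import random


def build_sessions(sample_ids, max_per_session=20, seed=0):
    """Shuffle samples and split them into short listening sessions.

    max_per_session is an assumed cap; tune it to your own protocol.
    """
    if max_per_session < 1:
        raise ValueError("max_per_session must be at least 1")
    rng = random.Random(seed)  # seeded so the assignment is reproducible
    shuffled = list(sample_ids)
    rng.shuffle(shuffled)
    return [
        shuffled[i:i + max_per_session]
        for i in range(0, len(shuffled), max_per_session)
    ]


# Hypothetical sample IDs for illustration.
all_samples = [f"utt_{i:03d}" for i in range(50)]
sessions = build_sessions(all_samples, max_per_session=20, seed=7)
```

Using a different seed per evaluator would give each person a different order, so that no sample is always heard late in a session.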

Strategies to Improve Evaluation at Scale

Diverse Evaluator Panels: Including evaluators from different linguistic, cultural, and demographic backgrounds allows teams to capture a wider range of user perceptions. Diverse panels help identify issues that homogeneous evaluation groups may miss.
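To see whether a panel is surfacing group-level differences, ratings can be broken down by evaluator group instead of being pooled into one average. The following is a minimal sketch under assumed inputs: the group labels, the 1 to 5 rating scale, and the 0.5-point margin are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def mos_by_group(ratings):
    """Average rating per evaluator group.

    ratings: iterable of (group_label, score) pairs, score on a 1-5 scale.
    """
    by_group = defaultdict(list)
    for group, score in ratings:
        by_group[group].append(score)
    return {group: mean(scores) for group, scores in by_group.items()}


def groups_below_overall(ratings, margin=0.5):
    """Groups whose mean rating trails the pooled mean by at least `margin`."""
    ratings = list(ratings)
    overall = mean(score for _, score in ratings)
    per_group = mos_by_group(ratings)
    return sorted(g for g, m in per_group.items() if overall - m >= margin)


# Hypothetical ratings for one synthesized voice.
ratings = [
    ("engineers", 4.5), ("engineers", 4.0),
    ("regional_accent_a", 4.0), ("regional_accent_a", 4.5),
    ("regional_accent_b", 3.0), ("regional_accent_b", 2.5),
]
flagged = groups_below_overall(ratings, margin=0.5)
```

A pooled average alone would hide the lower scores from the third group in this example; a real analysis would also need enough ratings per group for the comparison to be meaningful.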

Layered Evaluation Frameworks: Combining automated metrics with human evaluation produces more reliable insights. Automated screening can detect obvious technical errors, while trained human evaluators assess perceptual attributes such as naturalness, emotional tone, and conversational flow.
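The layering described here can be sketched as a simple triage step: automated checks reject clearly broken samples, and everything else goes to human listeners. The metric fields (`wer`, `clipping_ratio`) and both thresholds below are illustrative assumptions, and the metrics are assumed to have been computed upstream.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    sample_id: str
    wer: float             # word error rate from an ASR pass (assumed precomputed)
    clipping_ratio: float  # fraction of clipped audio frames (assumed precomputed)


def triage(samples, max_wer=0.15, max_clipping=0.01):
    """Split samples into automated rejects and a human-review queue.

    Thresholds are placeholder values, not recommended settings.
    """
    rejected, for_human_review = [], []
    for s in samples:
        if s.wer > max_wer or s.clipping_ratio > max_clipping:
            rejected.append(s.sample_id)
        else:
            for_human_review.append(s.sample_id)
    return rejected, for_human_review


# Hypothetical batch for illustration.
batch = [
    Sample("utt_001", wer=0.02, clipping_ratio=0.0),
    Sample("utt_002", wer=0.40, clipping_ratio=0.0),   # likely mispronounced
    Sample("utt_003", wer=0.05, clipping_ratio=0.08),  # audibly clipped
]
rejected, for_human_review = triage(batch)
```

Passing the automated layer says nothing about naturalness or emotional tone, which is why the surviving samples still go to trained evaluators.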

Continuous Monitoring and Feedback Loops: Evaluation should continue after deployment. Collecting user feedback and periodically re-evaluating models helps teams detect subtle performance regressions or shifts in user expectations.
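A feedback loop of this kind might compare recent user ratings against a baseline collected at release time. The sketch below is one simple approach under stated assumptions: the function name, the 0.3-point drop threshold, and the minimum sample count are hypothetical, and a production system would likely use a proper statistical test.

```python
from statistics import mean


def detect_regression(baseline_scores, recent_scores, max_drop=0.3, min_samples=5):
    """Return True when the recent mean rating falls more than `max_drop`
    below the baseline mean. Returns False if there is too little recent data.
    """
    if len(recent_scores) < min_samples:
        return False
    return mean(baseline_scores) - mean(recent_scores) > max_drop


# Hypothetical 1-5 ratings gathered at launch and in the latest window.
baseline = [4, 4, 5, 4, 4]
recent = [4, 3, 4, 3, 4]
regressed = detect_regression(baseline, recent)
```

Running such a check on a schedule, and per language or user segment, would help surface the silent regressions that a one-time pre-release evaluation cannot catch.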

Practical Takeaways

Expand Evaluator Diversity: Engage evaluators from varied backgrounds to capture a broader range of user perspectives.

Use Layered Evaluation Methods: Combine automated metrics with structured human evaluation to capture both technical and perceptual performance.

Monitor Models After Deployment: Implement continuous evaluation processes to detect performance drift or silent regressions over time.

Conclusion

Scaling TTS systems requires evaluation strategies that go beyond internal testing environments. By addressing the limitations of internal evaluation, teams can develop speech systems that better reflect real-world user expectations.

Organizations such as FutureBeeAI provide structured evaluation frameworks designed to support large-scale testing, diverse evaluator participation, and continuous monitoring. These approaches help ensure that speech systems remain reliable as they move from controlled testing environments to real-world deployment.

If your team is expanding TTS systems at scale, you can also explore FutureBeeAI’s AI data collection services to support more robust evaluation and dataset management workflows.
