Why do internal engineering teams often miss TTS quality issues?

Engineering teams building Text-to-Speech (TTS) systems often miss critical quality issues because they rely too heavily on automated metrics. Metrics are fast and scalable, but they do not capture the perceptual nuances that shape the real user experience. In TTS, success is not only about clarity or high scores; it is about how the voice feels to a listener.

Why Metrics Alone Are Not Enough

At first glance, TTS evaluation seems straightforward. Metrics such as Mean Opinion Score (MOS) and intelligibility scores give a sense of performance. However, each of these metrics compresses complex human perception into a single number, usually an average across many utterances and listeners.

This creates a gap: a model can look strong in evaluation yet fail in real-world use. The missing layer is detailed human perception, which catches subtle issues that aggregate scores cannot quantify.
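To illustrate how an average compresses perception, here is a minimal sketch. It assumes 1-to-5 listener ratings for two hypothetical systems. The ratings are illustrative, not real data, and the 95% interval uses a simple normal approximation. Both systems have the same mean, but one receives a noticeable share of poor ratings that the headline score hides.

```python
from math import sqrt
from statistics import mean, stdev

def mos_summary(ratings, low_cutoff=2):
    """Summarize 1-5 opinion scores: mean, approximate 95% CI, share of low ratings."""
    m = mean(ratings)
    # Normal-approximation half-width; fine for a sketch, rough for small samples.
    half_width = 1.96 * stdev(ratings) / sqrt(len(ratings))
    low_share = sum(r <= low_cutoff for r in ratings) / len(ratings)
    return {"mos": m, "ci95": (m - half_width, m + half_width), "low_share": low_share}

# Illustrative ratings for two hypothetical systems (not real data).
system_a = [4, 4, 4, 4, 4, 4, 4, 4, 4, 4]
system_b = [5, 5, 5, 5, 5, 5, 5, 2, 2, 1]

for name, ratings in [("A", system_a), ("B", system_b)]:
    s = mos_summary(ratings)
    print(f"System {name}: MOS={s['mos']:.2f}, share of ratings <= 2: {s['low_share']:.0%}")
```

Both systems report a MOS of 4.00. However, 30% of System B's ratings are 2 or below, which is the kind of signal a single average hides.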

Hidden Quality Issues Teams Often Miss

Many issues remain undetected until real users interact with the system:

Naturalness Gaps: Speech is clear but still sounds robotic

Prosody Errors: Incorrect rhythm and stress disrupt flow

Emotional Mismatch: Tone does not align with context

Awkward Pauses: Poor timing makes speech feel unnatural

Inconsistency: Variation across outputs reduces reliability
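Rating each attribute separately helps make these issues visible. The sketch below assumes that each utterance is rated from 1 to 5 on separate perceptual attributes. The attribute names, the ratings, and the 3.5 threshold are all hypothetical. The code averages each attribute and flags any that fall below the threshold, even when the overall average looks acceptable.

```python
from statistics import mean

# Hypothetical per-utterance ratings (1-5) on separate perceptual attributes.
ratings = [
    {"clarity": 5, "naturalness": 4, "prosody": 3, "emotion": 4, "pauses": 4},
    {"clarity": 5, "naturalness": 4, "prosody": 2, "emotion": 4, "pauses": 3},
    {"clarity": 5, "naturalness": 4, "prosody": 3, "emotion": 3, "pauses": 4},
]

def attribute_means(rows):
    """Average each attribute across all rated utterances."""
    attributes = rows[0].keys()
    return {a: mean(row[a] for row in rows) for a in attributes}

def flag_weak_attributes(means, threshold=3.5):
    """Return attributes whose mean falls below the (assumed) threshold."""
    return sorted(a for a, m in means.items() if m < threshold)

means = attribute_means(ratings)
overall = mean(means.values())
print(f"overall={overall:.2f}, weak={flag_weak_attributes(means)}")
```

Here the overall average is 3.80, which looks healthy. Prosody on its own averages about 2.67, and it is the only attribute flagged.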

Common Pitfalls in TTS Evaluation

Over-Reliance on Automated Metrics: High scores do not guarantee natural or engaging speech

Lack of Diverse Evaluators: Missing native speakers or domain experts leads to blind spots

Ignoring Silent Regressions: Model updates may degrade quality without affecting headline metrics
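A silent regression can be caught by comparing scores per utterance instead of only comparing averages. The minimal sketch below assumes per-sentence quality scores for a baseline model and a candidate model on the same fixed test set. The sentence IDs, the scores, and the one-point drop tolerance are hypothetical.

```python
from statistics import mean

# Hypothetical per-utterance scores on a fixed test set, keyed by sentence ID.
baseline = {"s1": 4.0, "s2": 4.0, "s3": 4.0, "s4": 4.0}
candidate = {"s1": 4.5, "s2": 4.5, "s3": 4.5, "s4": 2.5}

def silent_regressions(old, new, max_drop=1.0):
    """Return IDs whose score fell by more than max_drop, regardless of the average."""
    return sorted(k for k in old if old[k] - new[k] > max_drop)

print("baseline mean:", mean(baseline.values()))
print("candidate mean:", mean(candidate.values()))
print("regressed:", silent_regressions(baseline, candidate))
```

Both models average 4.0, so a headline comparison shows no change. The per-utterance check, however, flags `s4`, which dropped by 1.5 points.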

Real-World Example

Consider a TTS system built for healthcare applications. It may pass every technical check yet still sound emotionally distant to patients. In sensitive domains, this gap directly affects trust and usability.

How to Strengthen TTS Quality Evaluation

Layered Evaluations: Combine automated metrics with human assessments

Attribute-Based Analysis: Evaluate naturalness, prosody, pronunciation, and emotional tone separately

Diverse Evaluator Pools: Include native speakers and domain experts

Continuous Monitoring: Re-evaluate a fixed sentinel dataset after each model update and run periodic reviews to detect regressions

Real-World Testing: Validate performance in actual usage scenarios
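A layered evaluation can be expressed as a release check that requires both layers to pass. The minimal sketch below makes two assumptions about its inputs. The automated layer supplies a word error rate, for example from transcribing the TTS output with a speech recognizer. The human layer supplies per-attribute mean ratings on a 1-to-5 scale. The `EvalReport` structure, its field names, and both thresholds are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class EvalReport:
    # Automated layer: word error rate of an ASR transcript of the audio (assumed input).
    wer: float
    # Human layer: mean 1-5 rating per perceptual attribute (assumed input).
    attribute_means: dict = field(default_factory=dict)

def release_blockers(report, max_wer=0.05, min_attribute=3.5):
    """Return reasons to block a release; an empty list means both layers pass."""
    blockers = []
    if report.wer > max_wer:
        blockers.append(f"automated: WER {report.wer:.1%} exceeds {max_wer:.1%}")
    for attribute, score in sorted(report.attribute_means.items()):
        if score < min_attribute:
            blockers.append(f"human: {attribute} mean {score:.2f} below {min_attribute}")
    return blockers

report = EvalReport(wer=0.02, attribute_means={"naturalness": 4.1, "prosody": 3.2, "emotion": 3.9})
print(release_blockers(report))
```

With this design, a strong automated score cannot offset a weak human-rated attribute. Here the low WER passes, but prosody still blocks the release.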

Practical Takeaway

TTS quality is defined by perception, not just by performance metrics. Teams that rely only on automated evaluation risk deploying models that fail in real-world conditions. By integrating human-centered evaluation and continuous monitoring, teams can identify and resolve hidden issues early.

FAQs

Q. What are the essential attributes to evaluate in TTS systems?

A. Focus on naturalness, pronunciation accuracy, prosody, emotional appropriateness, and consistency across different contexts.

Q. How can teams improve TTS quality control?

A. Use a layered evaluation approach that combines automated metrics with human feedback, involves diverse evaluators, and applies continuous monitoring to catch regressions early.

What Else Do People Ask?

Related AI Articles

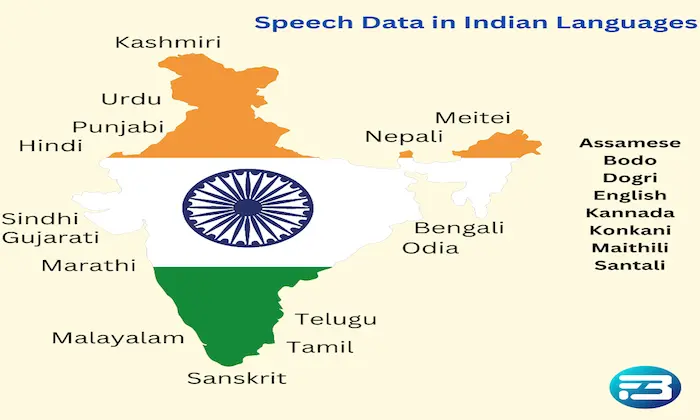

Browse Matching Datasets

Acquiring high-quality AI datasets has never been easier!!!

Get in touch with our AI data expert now!