How do you evaluate coarticulation errors in TTS?

In Text-to-Speech (TTS) evaluation, listening tasks connect model performance with real user perception. Automated metrics, such as word error rate from an ASR system or mel-cepstral distortion, measure technical accuracy; listening tasks reveal how speech actually sounds to human listeners.

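These tasks evaluate qualities such as naturalness, prosody, clarity, and emotional appropriateness. When designed correctly, they help teams uncover subtle issues that purely technical metrics might overlook, including coarticulation errors, where the transitions between neighboring sounds come out abrupt or slurred.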

Why Listening Tasks Matter in TTS Evaluation

Listening tasks are structured exercises where human evaluators assess speech outputs based on specific attributes. They allow teams to capture human perception directly, which is essential in speech systems where user experience determines success.

Poorly designed listening tasks can produce misleading results. A voice might seem acceptable during testing but reveal robotic pacing or unnatural stress patterns once deployed. Effective listening tasks expose these issues early in the development process.

Key Principles for Designing Listening Tasks

1. Start with Clear Evaluation Objectives: Every listening task should be designed around a specific evaluation goal. Different attributes require different task designs.

Naturalness may be evaluated through paired comparisons

Prosody and emotional tone may require attribute-wise scoring

Pronunciation accuracy may require focused listening tasks

Clear objectives ensure evaluators understand what aspect of speech they should assess.
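
As a rough illustration of the paired-comparison design mentioned above, the sketch below tallies A/B preference votes and runs an exact two-sided sign test against a 50/50 split. The function name `preference_summary`, the vote labels, and the sample votes are assumptions made for this example; they are not taken from any particular evaluation toolkit.

```python
from math import comb


def preference_summary(votes):
    """Summarize paired-comparison votes ('A' or 'B'; drop ties beforehand)."""
    n = len(votes)
    if n == 0:
        raise ValueError("no votes to summarize")
    wins_a = sum(1 for v in votes if v == "A")

    # Exact two-sided binomial (sign) test: how likely is a split at least
    # this lopsided if listeners truly had no preference?
    k = max(wins_a, n - wins_a)
    tail = sum(comb(n, i) for i in range(k, n + 1)) / 2 ** n
    p_value = min(1.0, 2 * tail)

    return {"n": n, "pref_a": wins_a / n, "p_value": p_value}


# Hypothetical votes: 14 listeners preferred system A, 6 preferred system B.
votes = ["A"] * 14 + ["B"] * 6
print(preference_summary(votes))
```

A 70/30 split over 20 votes is not yet strong evidence of a real preference, which is one reason paired tests usually need more listeners than intuition suggests.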

2. Use Diverse Prompt Sets: Evaluation prompts should represent the range of scenarios the model will encounter in real-world use.

This can include:

Conversational sentences

Formal announcements

Domain-specific terminology

Emotional or expressive dialogue

A diverse prompt set helps test how well the model adapts across contexts and speaking styles.
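
One way to keep a prompt set balanced is to sample the same number of prompts from each category. The sketch below assumes prompts are already grouped by category; the category names mirror the list above, while the example sentences and the `sample_prompts` helper are hypothetical.

```python
import random


def sample_prompts(prompts_by_category, per_category, seed=0):
    """Draw up to `per_category` prompts from every category, then shuffle."""
    rng = random.Random(seed)  # fixed seed keeps the test set reproducible
    selected = []
    for category, prompts in sorted(prompts_by_category.items()):
        k = min(per_category, len(prompts))  # small categories give what they have
        for text in rng.sample(prompts, k):
            selected.append((category, text))
    rng.shuffle(selected)  # avoid presenting one category in a block
    return selected


# Hypothetical prompt pool, for illustration only.
pool = {
    "conversational": ["Hey, are you free later?", "I'll call you back in five."],
    "formal": ["The meeting will begin at nine o'clock.", "Boarding is now open."],
    "domain": ["Take two tablets of ibuprofen daily.", "The API returned a timeout."],
    "expressive": ["I can't believe we won!", "Please, just leave me alone."],
}
for category, text in sample_prompts(pool, per_category=1):
    print(f"{category}: {text}")
```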

3. Involve Native Evaluators: Native speakers provide critical insight into pronunciation accuracy, rhythm, and cultural nuance. They are more likely to detect subtle issues such as incorrect stress placement or unnatural phrasing.

Their feedback helps ensure that speech outputs feel authentic to the intended audience.

4. Implement Multi-Layer Quality Control: High-quality listening evaluations include structured quality assurance processes.

These may involve:

Attention checks to maintain evaluator focus

Secondary reviews of evaluation results

Monitoring evaluator consistency across sessions

Layered checks like these make it easier to catch unreliable ratings before they distort the results.
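
The checks above can be sketched as a simple screening pass over the collected ratings. This example assumes each record carries a rater ID, an item ID, a score, and, for attention-check items only, an `expected` score; the record layout, the `screen_evaluators` name, and both thresholds are illustrative assumptions rather than recommended values.

```python
from collections import defaultdict


def screen_evaluators(records, min_check_accuracy=0.8, max_repeat_gap=1.0):
    """Report attention-check accuracy and repeat-rating consistency per rater."""
    checks = defaultdict(list)   # rater -> pass/fail for each attention check
    by_item = defaultdict(list)  # (rater, item) -> scores given across sessions
    for r in records:
        if r.get("expected") is not None:
            checks[r["rater"]].append(r["score"] == r["expected"])
        else:
            by_item[(r["rater"], r["item"])].append(r["score"])

    # Consistency: spread of scores when a rater hears the same item again.
    gaps = defaultdict(list)
    for (rater, _item), scores in by_item.items():
        if len(scores) > 1:
            gaps[rater].append(max(scores) - min(scores))

    report = {}
    for rater in set(checks) | {rater for rater, _ in by_item}:
        passed = checks.get(rater, [])
        rater_gaps = gaps.get(rater, [])
        accuracy = sum(passed) / len(passed) if passed else None
        gap = sum(rater_gaps) / len(rater_gaps) if rater_gaps else None
        keep = (accuracy is None or accuracy >= min_check_accuracy) and (
            gap is None or gap <= max_repeat_gap
        )
        report[rater] = {"check_accuracy": accuracy, "repeat_gap": gap, "keep": keep}
    return report
```

Raters flagged with `keep` set to `False` are candidates for the secondary review step, not for automatic exclusion; a human reviewer should still look at why they were flagged.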

How Listening Tasks Fit Into the Model Lifecycle

Listening tasks should evolve alongside the model during different development stages.

Prototype Phase: Small listener panels help identify major speech issues quickly and guide early experimentation.

Pre-Production Phase: Tasks become more structured, focusing on detailed attributes such as prosody, pronunciation accuracy, and emotional tone.

Production Readiness: Larger evaluation panels and statistical methods such as confidence intervals help confirm that the model meets quality thresholds.

Post-Deployment Monitoring: Periodic listening evaluations help detect silent regressions and confirm that model updates have not degraded the user experience.
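
For the production-readiness and monitoring stages, a minimal sketch of the statistics involved might look like the following. It assumes 1-to-5 opinion scores, uses a normal-approximation 95% confidence interval, and treats ratings as independent, which is a simplification when the same listener rates many clips. The function names and the non-overlapping-interval rule for flagging a regression are assumptions chosen for illustration, and that rule is deliberately conservative.

```python
from math import sqrt
from statistics import mean, stdev


def mos_with_ci(scores, z=1.96):
    """Return (mean, lower, upper) using a normal-approximation interval."""
    if len(scores) < 2:
        raise ValueError("need at least two ratings")
    m = mean(scores)
    half_width = z * stdev(scores) / sqrt(len(scores))
    return m, m - half_width, m + half_width


def meets_threshold(scores, threshold):
    """Pass only if the lower confidence bound clears the quality threshold."""
    _, lower, _ = mos_with_ci(scores)
    return lower >= threshold


def looks_regressed(baseline_scores, current_scores):
    """Flag a regression when the current interval sits wholly below baseline."""
    _, base_lower, _ = mos_with_ci(baseline_scores)
    _, _, current_upper = mos_with_ci(current_scores)
    return current_upper < base_lower


# Hypothetical ratings for a released model and a later update.
baseline = [4, 4, 5, 3, 4, 5, 4, 4]
update = [3, 2, 3, 3, 2, 3, 3, 2]
print(mos_with_ci(baseline))
print(meets_threshold(baseline, threshold=3.5))
print(looks_regressed(baseline, update))
```

Checking the lower bound rather than the mean keeps a small, lucky panel from pushing a borderline model over the threshold.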

Practical Takeaway

Listening tasks form the foundation of reliable TTS evaluation. By designing tasks with clear objectives, diverse prompts, native evaluators, and structured quality checks, teams can capture meaningful insights into how speech systems perform in real-world conditions.

Organizations such as FutureBeeAI specialize in building structured listening evaluation frameworks and providing access to diverse evaluator panels. These approaches help ensure that TTS models deliver speech that is not only technically accurate but also natural and engaging for users.

Well-designed listening tasks ultimately help teams move beyond basic testing and build speech systems that genuinely resonate with their audiences.

What Else Do People Ask?

Related AI Articles

Browse Matching Datasets

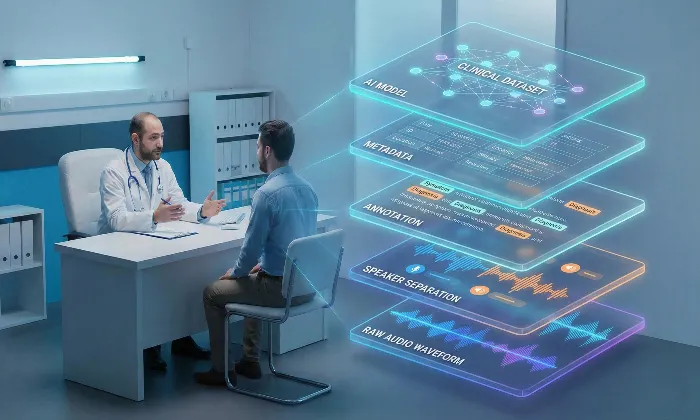

Acquiring high-quality AI datasets has never been easier!!!

Get in touch with our AI data expert now!