How much does crowd-based TTS evaluation typically cost?

Crowd-based evaluation plays an important role in assessing the quality of Text-to-Speech (TTS) systems. These evaluations rely on human listeners to judge aspects of speech such as naturalness, pronunciation, and emotional tone, areas where automated metrics often fall short. Because they involve human evaluators, structured tasks, and quality control processes, the cost of these evaluations can vary widely depending on project requirements.

For organizations working with advanced TTS models, understanding the cost drivers behind crowd evaluations helps in planning evaluation strategies effectively.

Typical Cost Range for Crowd-Based Evaluations

The cost of crowd-based TTS evaluation generally ranges from a few thousand dollars to tens of thousands, depending on the scale and complexity of the evaluation.

Small-scale evaluations: Early-stage prototype testing may involve small listener panels and basic tasks such as Mean Opinion Score (MOS) ratings. These evaluations often cost around $2,000–$5,000 and provide preliminary feedback on model quality.

Large-scale evaluations: When models approach production readiness, evaluation efforts expand significantly. Large listener panels, structured evaluation tasks, and deeper analysis can increase costs to $10,000 or more, especially for multilingual or complex speech systems.
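To make the link between panel size and result quality concrete, here is a minimal Python sketch of how MOS ratings might be summarized. It assumes ratings on a 1-5 scale and uses a simple normal approximation for the 95% confidence interval; the function name `mos_with_ci` and the sample ratings are illustrative and not taken from any particular platform. Pooling all ratings this way also ignores per-listener effects, which a production analysis would likely model.

```python
import math
import statistics


def mos_with_ci(ratings, z=1.96):
    """Return (mean, half_width) for a list of 1-5 opinion scores.

    half_width is the +/- margin of an approximate 95% confidence
    interval (normal approximation, all ratings treated as independent).
    """
    n = len(ratings)
    if n < 2:
        raise ValueError("need at least two ratings")
    mean = statistics.mean(ratings)
    half_width = z * statistics.stdev(ratings) / math.sqrt(n)
    return mean, half_width


# Hypothetical ratings for one TTS system from a small panel.
small_panel = [4, 5, 3, 4, 4, 5, 3, 4]
# The same rating pattern from a panel four times as large.
large_panel = small_panel * 4

for name, ratings in [("small", small_panel), ("large", large_panel)]:
    mos, margin = mos_with_ci(ratings)
    print(f"{name} panel: MOS = {mos:.2f} +/- {margin:.2f} (n={len(ratings)})")
```

With the same spread of opinions, the larger panel yields a narrower interval, which is one reason production-stage evaluations tend to collect more ratings and therefore cost more.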

Key Factors That Influence Evaluation Costs

Evaluation scale: The number of speech samples and the size of the evaluator panel directly affect total cost. Larger evaluations require more listening time and coordination.

Evaluator compensation: Crowd evaluators are typically paid either per task or per hour. Ensuring fair compensation is essential for maintaining evaluator engagement and reliable results.

Evaluation methodology: Simpler evaluation methods like MOS are relatively inexpensive, while more detailed approaches such as attribute-based evaluations or paired comparisons require additional training and supervision.

Quality assurance processes: Reliable evaluations often include multiple quality control layers such as attention checks, evaluator monitoring, and secondary reviews. These processes improve reliability but increase operational costs.

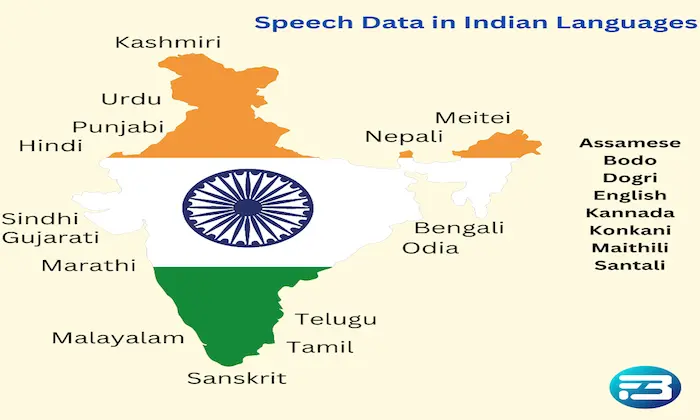

Dataset diversity requirements: Projects that evaluate across multiple accents, languages, or demographics often need larger and more diverse evaluator pools. This added complexity can increase overall evaluation expenses.
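The factors above can be combined into a rough budget estimate. The following Python sketch is a simplified model under stated assumptions: every field name, the 20% quality-assurance overhead, and the 15% platform fee are hypothetical placeholders, not figures from this article or from any specific vendor. Replace them with quotes from your own provider.

```python
from dataclasses import dataclass


@dataclass
class EvalPlan:
    """Hypothetical inputs for a rough crowd-evaluation budget."""
    num_samples: int            # speech clips to be rated
    ratings_per_sample: int     # independent listeners per clip
    seconds_per_rating: float   # listening plus answering time
    hourly_rate_usd: float      # evaluator pay, expressed per hour
    qa_overhead: float = 0.20   # assumed extra share for attention checks and reviews
    platform_fee: float = 0.15  # assumed share charged by the crowd platform


def estimate_cost(plan: EvalPlan) -> dict:
    """Break a plan down into listener pay, QA cost, platform fee, and total."""
    total_ratings = plan.num_samples * plan.ratings_per_sample
    listener_hours = total_ratings * plan.seconds_per_rating / 3600
    listener_pay = listener_hours * plan.hourly_rate_usd
    qa_cost = listener_pay * plan.qa_overhead
    fee = (listener_pay + qa_cost) * plan.platform_fee
    return {
        "total_ratings": total_ratings,
        "listener_hours": round(listener_hours, 2),
        "listener_pay_usd": round(listener_pay, 2),
        "qa_cost_usd": round(qa_cost, 2),
        "platform_fee_usd": round(fee, 2),
        "total_usd": round(listener_pay + qa_cost + fee, 2),
    }


# Illustrative inputs only: 500 clips, 20 listeners each, 45 seconds per rating.
plan = EvalPlan(num_samples=500, ratings_per_sample=20,
                seconds_per_rating=45, hourly_rate_usd=15.0)
for key, value in estimate_cost(plan).items():
    print(f"{key}: {value}")
```

Because total ratings are the product of clips and listeners per clip, doubling either one doubles listener pay in this model, while the overhead and fee terms scale along with it. Adding languages or accents typically multiplies the number of clips, which is how diversity requirements feed into the total.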

Why Investing in Proper Evaluation Matters

Underestimating the importance of evaluation can lead to misleading results and costly product issues later. A model that performs well in limited testing might fail to meet user expectations once deployed, particularly if the evaluation panel lacked diversity or sufficient scale.

Using diverse evaluator panels and representative speech samples helps ensure that results reflect real-world user experiences. Access to high-quality speech datasets also improves the reliability of both training and evaluation processes.

Practical Takeaway

Crowd-based TTS evaluation is not just a cost; it is a strategic investment in product quality. The right evaluation framework helps teams detect subtle speech issues, validate improvements, and ensure models perform reliably for diverse users.

Organizations developing large-scale speech systems often rely on structured evaluation workflows and specialized platforms such as FutureBeeAI to manage evaluator panels, implement quality control, and streamline testing processes. These systems help teams conduct efficient evaluations while maintaining high reliability.

By carefully balancing evaluation scale, methodology, and quality control, teams can manage costs effectively while ensuring their TTS systems deliver natural and trustworthy voice experiences.
