Physical AI

Training Data

What Is Physical AI? Why the Next Wave of AI Is Moving from Screens into the Physical World

Physical AI doesn't just respond; it acts in the physical world. Here's what's changing, why now, and what it means.

The AI systems that have transformed the past decade (the language models, the image classifiers, the recommendation engines, the code generators) share one fundamental property. They take inputs and return information. You ask a question and get an answer. You show an image and get a label. You describe a problem and get a solution. The output is always text, numbers, classifications, or predictions. The output lives inside the screen.

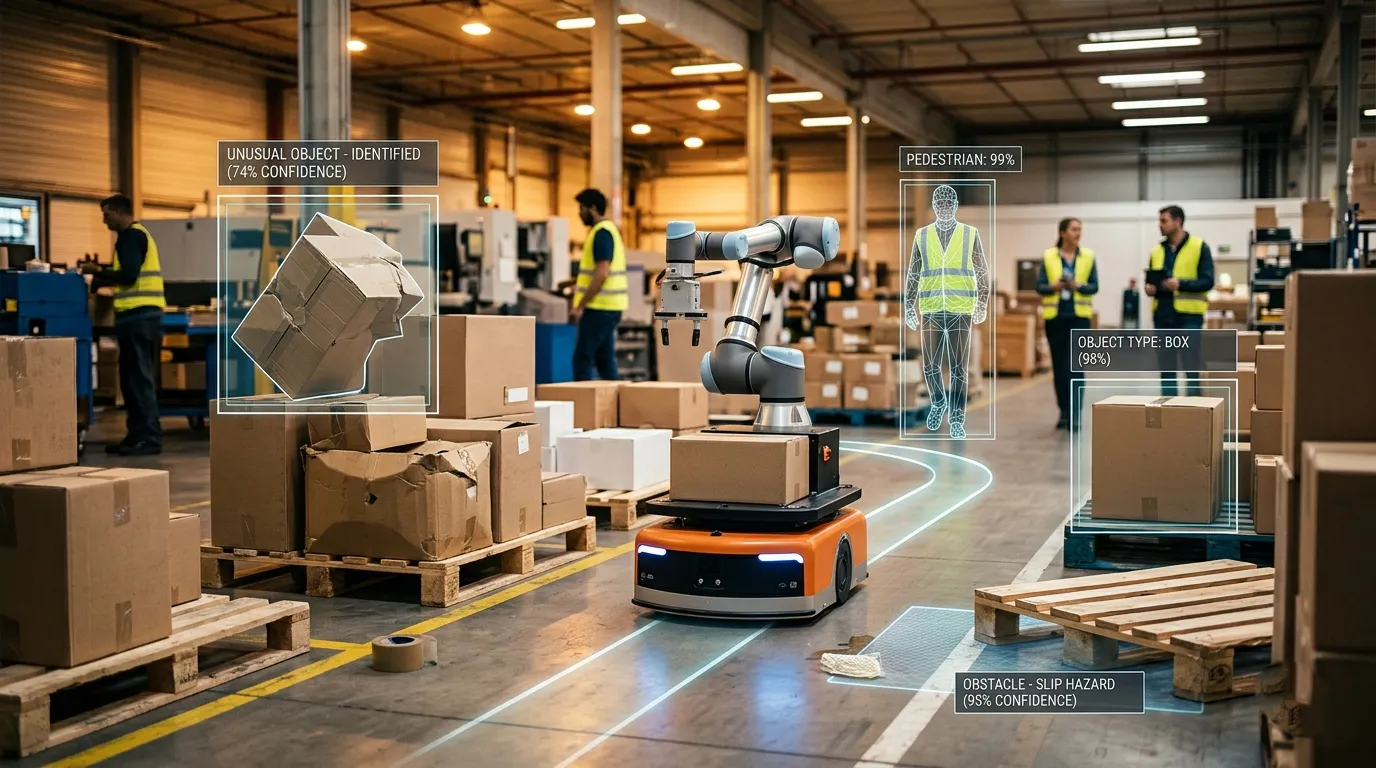

Physical AI changes that sentence. These systems take inputs and return action. The robotic arm picks up the object and places it precisely where it needs to go. The autonomous vehicle detects a pedestrian and adjusts its path. The factory inspection system identifies a hairline crack and flags the component before it reaches assembly. The output doesn't stay on the screen: it moves something, navigates somewhere, or completes a physical task in a world that pushes back.

That shift from "information" to "action" is the entire story of why Physical AI represents something genuinely new, rather than just the next increment of what AI was already doing. And it changes what you need to build these systems, including what kind of training data they require.

Robotic systems have existed for decades in manufacturing, surgery and logistics. The question that comes up when people first encounter Physical AI is reasonable: what's actually new?

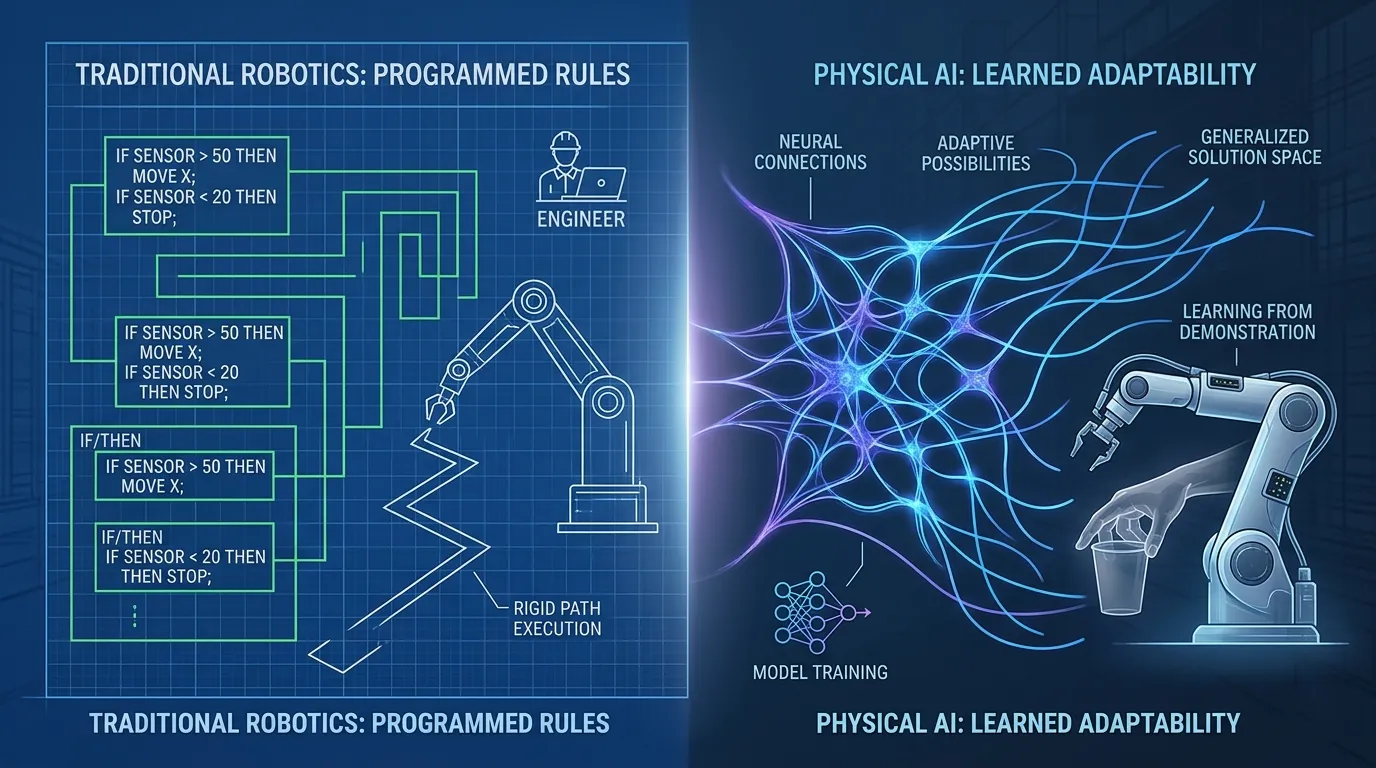

The difference is in how intelligence was produced. Traditional robotics was programmed. Engineers wrote explicit rules for every behavior: if the sensor reads value X, move the arm to position Y and apply force Z. The intelligence was in the programming, and the programming was specific. Change the object's position slightly, change the lighting, or change the task, and you might be outside the programmed rules entirely.

Physical AI systems are trained. They see demonstrations. They process sensor data from real environments. They develop the capability to handle variation that no one explicitly planned for. A Physical AI system for warehouse manipulation doesn't have a rule for every possible object position; it has learned from enough examples that it can reason about positions it hasn't seen before. That generalization is the new capability.
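To make the contrast concrete, here is a minimal Python sketch. Everything in it is hypothetical and chosen for illustration: the rule table, the four demonstrations, and the function names. The inverse-distance interpolation is only a stand-in for a trained model, not how any production system learns. What it shows is the behavioral difference: the scripted policy has nothing to say about a position it was not given a rule for, while the data-driven policy produces a plausible grasp target from nearby examples.

```python
from math import dist

# Hypothetical hand-written rule: exact sensed object position -> grasp target (x, y, z).
RULES = {(0.10, 0.20): (0.10, 0.20, 0.05)}

def programmed_policy(obj_xy):
    """Return the scripted grasp target, or None when no rule matches exactly."""
    return RULES.get(obj_xy)

# Hypothetical demonstrations: (object position, grasp target an operator used).
DEMOS = [
    ((0.10, 0.20), (0.10, 0.20, 0.05)),
    ((0.30, 0.20), (0.30, 0.20, 0.05)),
    ((0.10, 0.40), (0.10, 0.40, 0.05)),
    ((0.30, 0.40), (0.30, 0.40, 0.05)),
]

def learned_policy(obj_xy, k=2):
    """Blend the grasp targets of the k nearest demonstrations.

    Closer demonstrations get more weight (inverse-distance weighting).
    This is an illustrative stand-in for a trained model.
    """
    nearest = sorted(DEMOS, key=lambda d: dist(d[0], obj_xy))[:k]
    # The small constant avoids division by zero on an exact match.
    weights = [1.0 / (dist(pos, obj_xy) + 1e-9) for pos, _ in nearest]
    total = sum(weights)
    return tuple(
        sum(w * target[i] for w, (_, target) in zip(weights, nearest)) / total
        for i in range(3)
    )

unseen = (0.12, 0.20)  # the object has shifted 2 cm from the scripted position
print(programmed_policy(unseen))  # no rule covers this position
print(learned_policy(unseen))     # a target interpolated from nearby demonstrations
```

For the shifted object, the scripted policy returns nothing, while the interpolated target lands very close to (0.12, 0.20, 0.05). The sketch also hints at the catch: the learned policy is only as good as the demonstrations around the query point.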

It also changes what's required to build them. Programmed robots needed engineering. Trained Physical AI systems need data: specifically, training data that reflects the range of situations the system will actually encounter. Which brings us to where this is already operating.

Physical AI is not an emerging technology in the sense of something that will arrive in a few years. Deployed systems are operating across industries now, at production scale.

In logistics and warehousing, Physical AI systems handle variable inventory: objects of different sizes, weights and orientations that no fixed programming could anticipate in advance. In healthcare, surgical assistance platforms use Physical AI to navigate anatomical variation between patients, adjusting in ways that explicit programming couldn't accommodate for the full range of human anatomy. In agriculture, physical systems scout fields, identify crop conditions and intervene with precision that broad-application methods can't match. In mobility, autonomous vehicle systems interpret environments they've never encountered before, making decisions about unstructured situations in real time.

In regions with less structured road infrastructure, where lane markings are inconsistent and mixed traffic includes vehicles, pedestrians, and animals without clear separation, Physical AI systems face conditions that Western-lab training data doesn't prepare them for. A system that works reliably in California may need fundamentally different training data to work in Mumbai or Lagos. The environment determines performance at least as much as the model architecture does.

The common thread across all of these deployments: they became practical recently, and the reason they did is not random.

Physical AI has been theoretically possible for a long time. The question worth asking is why it became deployable at scale in 2024 and 2025 in a way it hadn't been in 2015 or 2018.

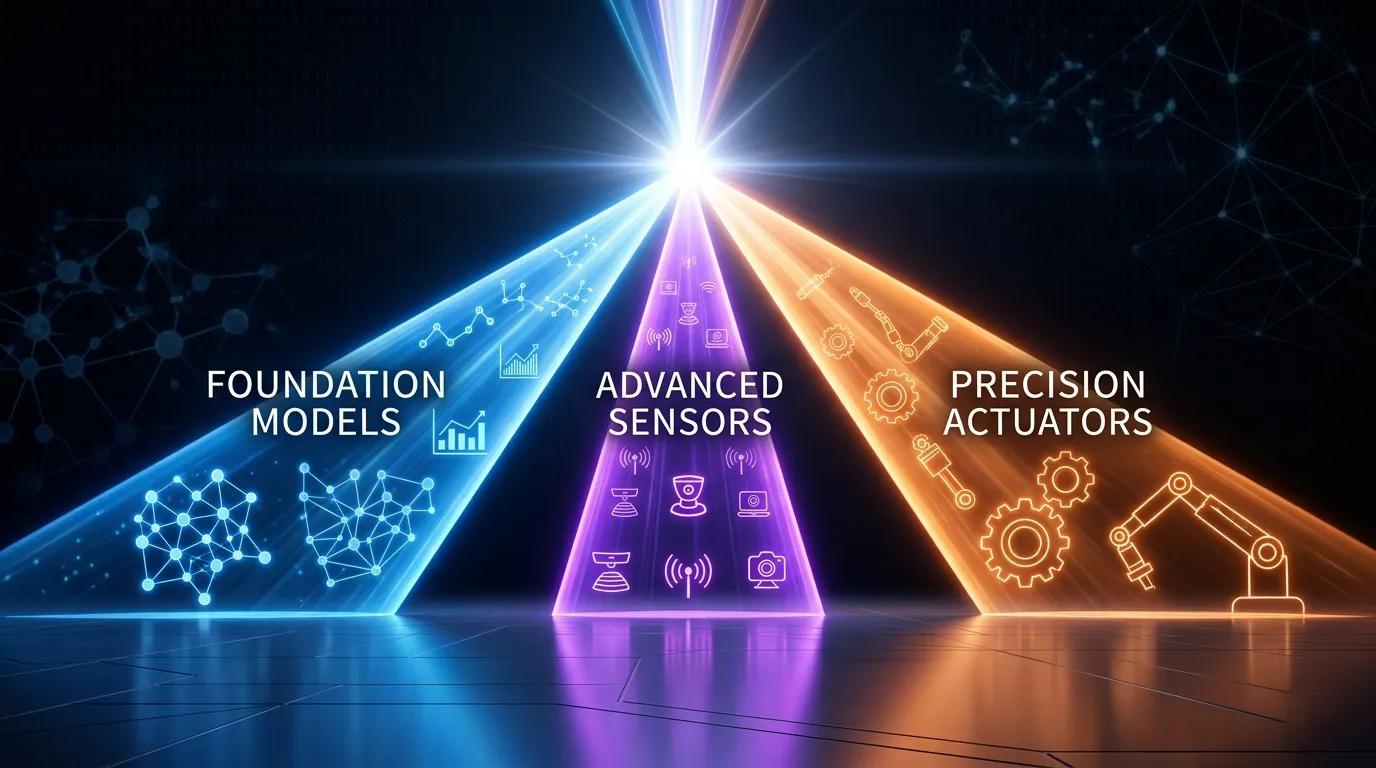

Three things converged. Foundation models, large AI models trained on broad datasets, became capable enough to be adapted to physical domains without being built from scratch for each application. Before this, every Physical AI application required a custom model developed from the ground up. Foundation model adaptation lowered that threshold significantly.

At roughly the same time, sensor technology crossed a cost and accuracy threshold that made data collection at scale viable. High-resolution cameras, LiDAR, force and tactile sensors became accurate enough to generate useful training data and cheap enough to deploy across large collection operations.

Finally, actuator precision, the ability of physical systems to actually execute what trained models specify, improved to the point where the gap between "what the model recommends" and "what the hardware can do" narrowed enough to make real-world deployment practical.

The wave isn't happening because of a single breakthrough. It's happening because the enabling infrastructure reached readiness across all three dimensions simultaneously. Each component existed earlier; the combination is what's new. And the combination creates a specific data requirement that didn't exist before.

Digital AI data has a property that Physical AI data doesn't: much of it already existed in usable form. Language models trained on text that was on the internet. Vision models trained on images that were already labeled. The training data collection problem was largely about finding and preparing data that already existed.

Physical AI training data doesn't exist in that form. There is no corpus of robot manipulation demonstrations to crawl, no library of labeled sensor streams from real physical environments. Every dataset has to be deliberately collected: people demonstrating tasks, sensors recording environments, systems operating in conditions that the training needs to reflect.

This means the quality, coverage and diversity of Physical AI training data are the direct product of intentional collection decisions. A system trained with data from one environment, one set of operating conditions, and one physical configuration will have exactly those limitations baked into it. A system trained across the range of environments and conditions it will actually encounter can generalize. The difference between those two systems isn't in the model architecture; it's in whether the data was designed for the full deployment reality.
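One way to check whether a collection was designed for the deployment reality is a simple coverage audit: list the conditions the system must handle, then count how many recorded episodes fall into each combination. The Python sketch below assumes a hypothetical metadata layout (one dictionary per episode with `site` and `lighting` fields) and a hypothetical `coverage_gaps` helper. Real collections track many more dimensions, so treat it as an outline of the idea rather than a finished tool.

```python
from collections import Counter
from itertools import product

def coverage_gaps(episodes, axes, min_count=1):
    """Return the condition combinations recorded fewer than min_count times.

    episodes: list of dicts holding per-episode metadata (hypothetical layout).
    axes: dict mapping a metadata field to the values deployment requires.
    """
    seen = Counter(tuple(ep[name] for name in axes) for ep in episodes)
    return [combo for combo in product(*axes.values()) if seen[combo] < min_count]

# Conditions the deployed system is expected to face (illustrative values).
required = {
    "site": ["warehouse_a", "warehouse_b"],
    "lighting": ["bright", "dim"],
}

# Metadata for the episodes collected so far (illustrative values).
collected = [
    {"site": "warehouse_a", "lighting": "bright"},
    {"site": "warehouse_a", "lighting": "dim"},
    {"site": "warehouse_b", "lighting": "bright"},
]

print(coverage_gaps(collected, required))
```

Here the audit reports that no episode was recorded at `warehouse_b` under dim lighting, which is exactly the kind of gap that otherwise surfaces only after deployment. Raising `min_count` turns the same check into a minimum-sample requirement per condition.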

Physical AI systems deployed in linguistically diverse contexts, where operators give instructions in different languages, where signage is in multiple scripts, and where physical environments reflect different cultural conventions, require training data that reflects those contexts. A system that understands spoken instructions in English but not in Hindi, Tamil, or Arabic has a deployment gap that appears the first time a real operator speaks to it in their actual language. Language isn't separate from Physical AI; it's the interface through which people command and correct physical systems.

Physical AI succeeds or fails at the data design stage. The most capable model architecture running on training data that was collected in convenient, controlled conditions will underperform a simpler model trained on data designed to match the deployment reality. This has been the consistent lesson from Physical AI deployments across industries.

FutureBeeAI designs Physical AI training data collections for systems that will operate across the full range of real-world conditions: varied environments, multilingual instruction interfaces, diverse physical operator profiles, and the operational conditions that matter in actual deployment rather than controlled testing. In our Physical AI collection projects, the collection design itself often surfaces requirements the client hadn't anticipated: a forklift assistance system that needs to understand both spoken instructions and physical gesture cues from warehouse workers across three languages, or an inspection robot that needs to recognize the same defect pattern across different ambient lighting conditions in facilities across four countries. Getting that range into the training data is what makes the difference between a system that works in the demo and one that works in the field.

If you're building a Physical AI system and want to discuss how to design training data for the actual conditions it will face, talk to the FutureBeeAI team.

Q. What happens when a Physical AI system makes a mistake in the real world?

A. When a Physical AI system makes a mistake, the consequences are physical: a manipulator arm drops a component, a delivery robot collides with an obstacle, a surgical assistant moves to the wrong position. This is the defining difference from software AI errors, which produce bad text or bad recommendations. Physical errors have real-world costs: damaged equipment, halted operations, injury risk. This is why Physical AI development requires extensive simulation testing, staged deployment in controlled environments, and fail-safe mechanisms that digital AI systems don't need. The consequence of acting in the physical world rather than responding in a digital one is that the stakes of each decision are materially higher.

Q. Is autonomous driving a form of Physical AI?

A. Yes, autonomous vehicles are among the most advanced and widely known Physical AI systems in operation today. They perceive their environment through sensors (cameras, LiDAR, radar), make decisions about navigation and hazard response, and take physical action through steering, braking, and acceleration. They also illustrate Physical AI's defining challenge: perception or decision errors produce immediate physical consequences. Autonomous driving also served as the proving ground for much of the sensor fusion and edge inference architecture that newer Physical AI systems now build on. What most people know as self-driving technology is essentially the first large-scale Physical AI deployment.

Q. Do Physical AI systems use large language models?

A. Some do, and it's becoming a more common architecture. Large language models and vision-language models are being used as the reasoning layer in some Physical AI systems, interpreting ambiguous instructions, planning multi-step task sequences, and handling novel situations that require language understanding. But the perception and action layers still require specialized training on physical-world data: sensor readings, physical trajectories, environment-specific conditions. The result is often a hybrid: foundation model reasoning combined with domain-specific physical training. This is different from LLM-only systems because the physical execution layer can't be replaced by language capability alone: a robot that understands the instruction "pick up the fragile item" still needs physical training to execute it correctly.

Q. Does Physical AI require continuous internet connectivity to operate?

A. Not necessarily, and in many industrial and safety-critical deployments, it deliberately doesn't. Physical AI systems running at the edge, on the device itself, can perceive, decide, and act with low latency without relying on cloud connectivity. Cloud connectivity matters for training updates, fleet-level learning, and monitoring, but the core inference loop often runs locally. This is different from digital AI, where the model typically runs on remote servers and the device is just an interface. For Physical AI, edge deployment is often a requirement rather than an option: a robot that waits for a cloud response before completing a physical action introduces latency that doesn't work in real-time physical environments.
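A rough latency budget shows why. The Python sketch below adds up the stages of one perceive-decide-act cycle and converts the total into how far a moving robot travels before it can react. Every number in it (stage timings, network round trip, deadline, speed) is an illustrative assumption, not a measurement from any real system.

```python
def total_latency_ms(stages):
    """Sum the per-stage latencies of one perceive-decide-act cycle."""
    return sum(stages.values())

def distance_before_reaction_m(speed_m_s, latency_ms):
    """Distance covered at constant speed while the system is still deciding."""
    return speed_m_s * latency_ms / 1000.0

# Illustrative, assumed timings in milliseconds.
EDGE = {"perception": 15, "planning": 10, "actuation": 5}
CLOUD = {**EDGE, "network_round_trip": 120}

DEADLINE_MS = 50   # assumed control-loop deadline
SPEED_M_S = 2.0    # assumed speed of a mobile robot

for name, stages in (("edge", EDGE), ("cloud", CLOUD)):
    latency = total_latency_ms(stages)
    travelled = distance_before_reaction_m(SPEED_M_S, latency)
    print(f"{name}: {latency} ms, {travelled:.2f} m travelled, "
          f"within deadline: {latency <= DEADLINE_MS}")
```

Under these assumed numbers, the on-device loop finishes in 30 ms with the robot having moved 6 cm, while the cloud path takes 150 ms and 30 cm and misses the deadline. The exact figures will differ for every system; the point is that the network round trip is added to every single decision.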