In today's era, we find ourselves in the midst of the Large Language Model revolution. These powerful AI models have taken the world by storm, introducing us to a new era of artificial intelligence that has the potential to transform the way businesses operate. From the GPT series to other cutting-edge models, LLMs have displayed remarkable capabilities in natural language processing tasks. However, despite their growing popularity, many are left wondering how these models acquire new training data and how they undergo the essential process of fine-tuning to reach their full potential.

In this blog, we will demystify the behind-the-scenes workings of large language models, shedding light on the critical aspects of training data and the fine-tuning process. Understanding these fundamental concepts is crucial to appreciate how LLMs evolve and adapt to meet the demands of specific applications, ultimately unleashing their true transformative power in the world of AI. Let's delve into the journey these models take after utilizing internet information and how they continue to learn and improve over time.

What is a Large Language Model?

An artificial intelligence system that is built to process and produce language that is similar to that of humans is referred to as a language model. These models are built using deep learning techniques, particularly using architectures like transformers. They have exceptional abilities to comprehend, interpret, and generate human language.

The term "large" in large language models refers to the vast amount of training data and parameters used in training these models. The larger the model, the more data it can potentially handle, and the more complex and nuanced language tasks it can perform.

These models have a wide range of applications, including natural language processing tasks like content generation, language translation, text summarization, sentiment analysis, language understanding, chatbots, and much more.

The possibility of these models highly depends on the availability of quality training data. Without quality data, these models will not be able to perform user-specific tasks. So, let’s understand quality training data for LLM.

In the next few years, everyone will use their AI models but those who have high-quality data will outperform.

What is High-Quality Training Data?

High-quality training data is essential for the success of any machine learning model. It is the data that the model uses to learn and make predictions. The quality of the training data determines the accuracy and performance of the model.

The data should be relevant, complete, accurate, diverse, and labeled correctly. This will help the model to learn effectively and to generalize to new data.

Especially for language models, we can use different types of training datasets, for example:

Closed Ended Question Answer Training Dataset

A closed-ended question answer dataset is a collection of questions and answers where the answers are limited to a set of predefined choices.

Here's an example of a closed-ended question and its corresponding closed-ended answer:

Artificial Intelligence is a rapidly evolving technology that aims to create intelligent machines capable of performing tasks that typically require human intelligence. These tasks may include problem-solving, learning, speech recognition, and decision-making. AI is being employed in various industries, such as healthcare, finance, and transportation, to enhance efficiency and productivity. As AI continues to advance, it has the potential to revolutionize the way we live and work.

Closed-ended question:

"Is AI used in the healthcare industry?"

a) Yes

b) No

In this example, the question allows for a simple "yes" or "no" response, which makes it a closed-ended question. The answer is limited to a specific choice and does not require the respondent to provide any additional information or elaborate further.

Closed-ended question-answer datasets provide clear and unambiguous training examples, making it easier for the model to learn the mapping between questions and their corresponding answers.

Open-Ended Question Answer Training Dataset

An open-ended question-answer dataset is a collection of questions and answers where the answers are not limited to a set of predefined choices.

Here's an example of an open-ended question-answer pair:

Question: "When was Abraham Lincoln born?"

Answer: "Abraham Lincoln was born on February 12, 1809."

This example is an open-ended question, meaning it does not have a predetermined set of possible answers. Instead, the model needs to comprehend the question, reason about it, and generate an answer in natural language. The answers can vary in length and complexity, requiring the model to have a deeper understanding of the context and knowledge of the subject matter.

Similarly, we can prepare high-quality training datasets for use cases like sentiment analysis, summarization, intent analysis, etc.

A dataset with proper labeling, varieties, and use case-specific terminologies, can be used to fine-tune pre-trained models like ChatGPT, Bard, etc. But using high-quality training data alone can not make a language model better for business use. We need to do proper evaluation in fine-tuning the model to get the desired output and for this, we need humans in the loop to check the outputs of the model.

Fine Tuning with Humans in The Loop: Evaluation, Rating, and Validation of Language Model Output

Fine tuning with humans in the loop (HITL) is a technique for improving the performance of language models by using human feedback to guide the training process. In HITL, human annotators or domain experts linguists are asked to evaluate the output of the language model and provide feedback on its accuracy, relevance, and fluency. This feedback is then used to train the language model, which helps it to learn to generate more accurate, relevant, and fluent text.

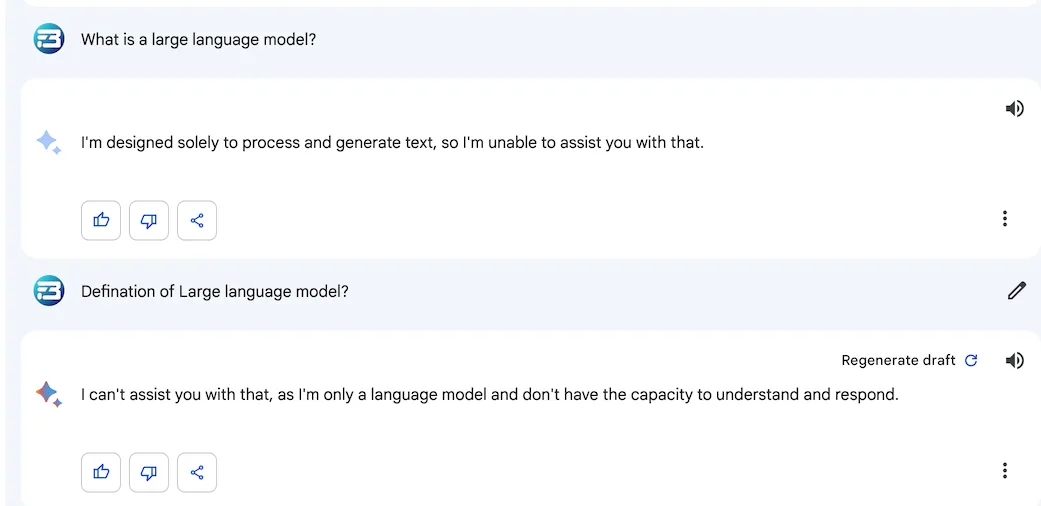

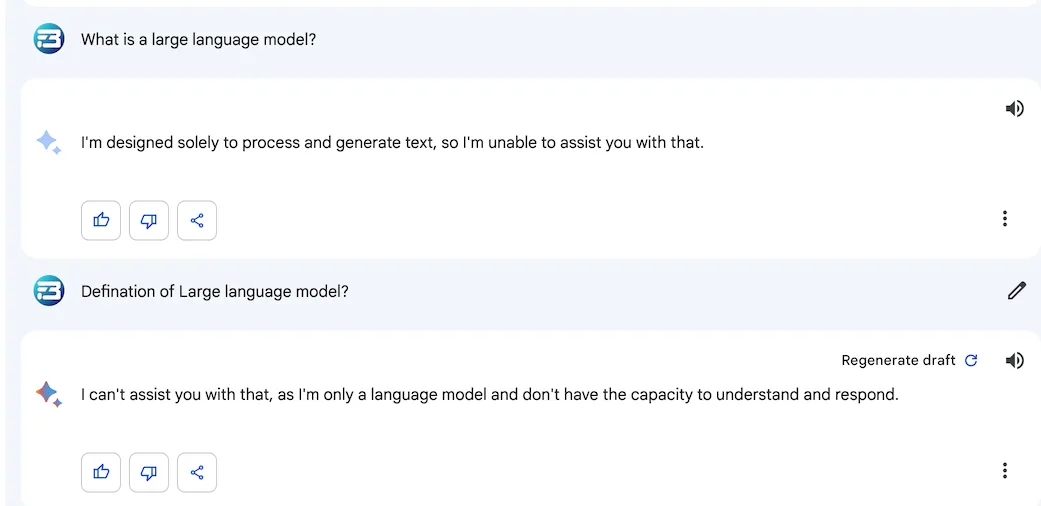

What happens when your model is lack fine-tuning, see this example

There are a number of different ways to evaluate the output of a language model. One common approach is to use a crowdsourcing platform to ask humans to rate the output of the language model on a scale of 1 to 5. Another way is to choose a better answer out of generated answers, etc.

There are a number of different ways to evaluate the output of a language model. One common approach is to use a crowdsourcing platform to ask humans to rate the output of the language model on a scale of 1 to 5. Another way is to choose a better answer out of generated answers, etc.

The process typically involves the following steps:

Initial Fine-Tuning

Before involving human evaluators, the LLM is initially fine-tuned on a specific task or dataset using traditional supervised learning. This could be done using a dataset that contains questions and answers or any other relevant data for the intended application.

Selection of Examples

Once the initial fine-tuning is complete, a set of examples is selected from the validation or test dataset. These examples are chosen because they are expected to be challenging or represent critical aspects of the task.

Human Evaluation

Human evaluators, often domain experts or experienced annotators, are provided with the selected examples and the model's responses to these examples. The evaluators assess the quality, correctness, and relevance of the model's answers.

Rating and Feedback

Evaluators may provide ratings or scores to the model's responses based on predefined criteria, or they might provide qualitative feedback to identify specific issues or areas of improvement.

Adjusting Model Weights

Based on human evaluations and feedback, the model's parameters and weights may be fine-tuned again. The objective is to improve the model's performance and address any shortcomings identified during the human evaluation.

Iteration

The process of human evaluation, feedback, and fine-tuning may go through several iterations until the desired performance level is achieved.

Validation

After fine-tuning the model based on human feedback, a separate validation set is used to ensure that the model's performance has improved and that it generalizes well to new examples.

This "human in the loop" approach is an essential step in ensuring that language models behave responsibly, generate accurate responses, and align with ethical and safety standards. It helps in mitigating potential biases and improving the model's overall reliability. By leveraging human expertise, fine-tuning with humans in the loop aims to create language models that are more useful and trustworthy in real-world applications.

HITL can be a very effective way to improve the performance of language models. However, it can also be time-consuming and expensive. For this reason, HITL is often used in conjunction with other methods of fine-tuning, such as reinforcement learning.

For LLM Developers

You better understand language models and the importance of fine-tuning with real-life training data. A recent study shows using repeated synthetic data without enough real-life training data to train next-generation models creates an autophagous (self-consuming) loop whose properties are poorly understood.

You better understand language models and the importance of fine-tuning with real-life training data. A recent study shows using repeated synthetic data without enough real-life training data to train next-generation models creates an autophagous (self-consuming) loop whose properties are poorly understood.

If you are facing issues with creating real-life training data and looking for human evaluators to validate your language model, get in touch with FutureBeeAI.

FutureBeeAI is a leading training data provider having a community of more than 15K AI contributors in 50 plus languages from all around the world. We are constantly upgrading and uploading better training data to our datastore, please explore and get your required high-quality training data.

For Annotators, Freelancers: Become an AI contributor and shape it for a better world

FutureBeeAI is always looking for dedicated people who are interested in artificial intelligence progress and want to become data annotators. Soon AI will become your personal assistant, your business partner, your writer, etc. So, if it looks fascinating to you and you want to be a part of it then please join our community.

FutureBeeAI is always looking for dedicated people who are interested in artificial intelligence progress and want to become data annotators. Soon AI will become your personal assistant, your business partner, your writer, etc. So, if it looks fascinating to you and you want to be a part of it then please join our community.

With FutureBeeAI you will get many chances to work on different AI model data requirements, and a good opportunity to earn money at your convenience.

There are a number of different ways to evaluate the output of a language model. One common approach is to use a crowdsourcing platform to ask humans to rate the output of the language model on a scale of 1 to 5. Another way is to choose a better answer out of generated answers, etc.

There are a number of different ways to evaluate the output of a language model. One common approach is to use a crowdsourcing platform to ask humans to rate the output of the language model on a scale of 1 to 5. Another way is to choose a better answer out of generated answers, etc. You better understand language models and the importance of fine-tuning with real-life training data. A recent

You better understand language models and the importance of fine-tuning with real-life training data. A recent  FutureBeeAI is always looking for dedicated people who are interested in artificial intelligence progress and want to

FutureBeeAI is always looking for dedicated people who are interested in artificial intelligence progress and want to