Why does MUSHRA require careful listener training?

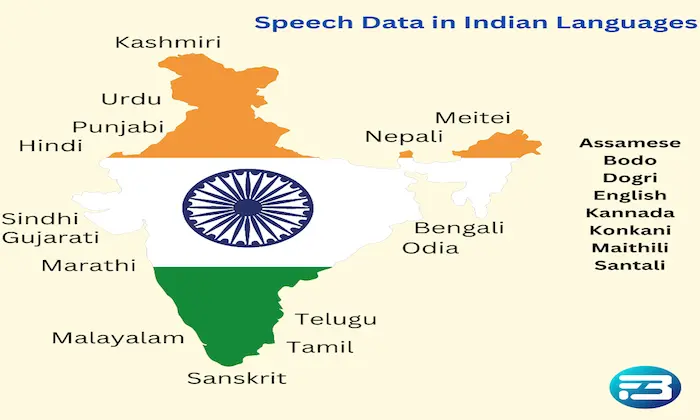

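In Text-to-Speech (TTS) evaluation, the MUSHRA (Multi-Stimulus Test with Hidden Reference and Anchor) method is widely used to compare multiple audio samples side by side. For each test item, listeners rate every sample on a 0 to 100 scale, with a hidden copy of the reference and one or more low-quality anchors mixed in among the systems under test. However, the reliability of MUSHRA results depends heavily on the ability of listeners to accurately perceive and judge subtle audio differences.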

Without proper preparation, listeners may overlook important speech characteristics such as prosody, pronunciation accuracy, or emotional tone. For systems evaluated using TTS datasets, well-trained listeners help ensure that evaluation outcomes reflect real perceptual differences rather than inconsistent judgments.

The Challenges of Human Audio Evaluation

Human perception is highly sensitive but also subjective. When listeners compare speech samples, they must identify small variations in rhythm, clarity, voice naturalness, and expressiveness.

Untrained listeners may struggle to distinguish between these nuances, and they are more exposed to cognitive biases. The halo effect is one example: if a voice sounds pleasant initially, listeners may rate it highly overall even if specific attributes, such as pronunciation or pacing, are weak.

Proper training helps listeners evaluate speech systematically rather than relying solely on first impressions.

Consequences of Poor Listener Preparation

If listeners are not properly trained, evaluation results may become unreliable. This can lead to incorrect conclusions about model performance.
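One way to see how inconsistent scoring can undermine a conclusion is to compare the mean score of two systems together with a confidence interval. The sketch below is illustrative only: the function names, the sample scores, and the normal-approximation interval (z = 1.96) are assumptions, and overlapping intervals are a rough warning sign rather than a formal significance test.

```python
import math
import statistics


def mean_with_ci(scores, z=1.96):
    """Return (mean, half_width) of an approximate 95% confidence interval.

    Uses a normal approximation, which is an assumption; small panels
    would normally call for a t-based interval instead.
    """
    mean = statistics.mean(scores)
    half_width = z * statistics.stdev(scores) / math.sqrt(len(scores))
    return mean, half_width


def intervals_overlap(scores_a, scores_b):
    """True if the approximate intervals of the two systems overlap."""
    mean_a, hw_a = mean_with_ci(scores_a)
    mean_b, hw_b = mean_with_ci(scores_b)
    return abs(mean_a - mean_b) <= hw_a + hw_b


# Hypothetical scores for the same two systems from two different panels.
# Both panels produce means of 80 and 71, but with very different spread.
consistent_a = [78, 80, 82, 79, 81]
consistent_b = [70, 72, 71, 69, 73]
noisy_a = [95, 60, 88, 70, 87]
noisy_b = [90, 55, 75, 60, 75]

print(intervals_overlap(consistent_a, consistent_b))  # False: the gap is clear
print(intervals_overlap(noisy_a, noisy_b))            # True: the gap is not clear
```

In this made-up data both panels report the same average gap between the systems, yet only the consistent panel supports the claim that one system is better.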

For instance, a TTS model might appear successful during internal testing but later receive negative user feedback due to robotic delivery or unnatural prosody. In many cases, these issues were present during evaluation but went unnoticed because listeners lacked the experience to identify them.

Key Components of Effective Listener Training

Understanding evaluation attributes: Listeners should clearly understand what qualities they are assessing, including naturalness, intelligibility, prosody, and pronunciation accuracy. Providing examples helps establish consistent interpretation.

Calibration exercises: Training sessions often include listening to a range of audio samples representing different quality levels. This helps align listener expectations before formal evaluations begin.

Structured scoring rubrics: Clear rating guidelines help listeners evaluate each attribute consistently. Rubrics reduce subjective interpretation and encourage systematic assessments.

Performance monitoring and feedback: Regular reviews of listener scoring patterns can reveal inconsistencies or bias. Feedback loops help refine listener judgment and maintain evaluation quality.
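The performance monitoring step can be sketched as a simple screening pass over the hidden-reference ratings. The thresholds below (reference rated under 90 on more than 15% of items) are modeled on the post-screening guidance associated with ITU-R BS.1534 and should be treated as assumptions to adjust for your own protocol. The data layout, the "reference" condition label, and the function name are hypothetical.

```python
from collections import defaultdict

# Assumed thresholds; tune them to your own evaluation protocol.
REFERENCE_MIN_SCORE = 90
MAX_FAIL_FRACTION = 0.15


def flag_unreliable_listeners(ratings):
    """Return sorted listener IDs who under-rate the hidden reference too often.

    ratings: iterable of (listener_id, item_id, condition, score) tuples,
    where condition == "reference" marks the hidden reference.
    """
    totals = defaultdict(int)  # hidden-reference ratings seen per listener
    fails = defaultdict(int)   # of those, how many fell below the minimum
    for listener, _item, condition, score in ratings:
        if condition != "reference":
            continue
        totals[listener] += 1
        if score < REFERENCE_MIN_SCORE:
            fails[listener] += 1
    return sorted(
        listener
        for listener, count in totals.items()
        if fails[listener] / count > MAX_FAIL_FRACTION
    )


# Hypothetical ratings from two listeners on two utterances.
ratings = [
    ("anna", "utt1", "reference", 98),
    ("anna", "utt1", "tts_a", 72),
    ("anna", "utt2", "reference", 95),
    ("ben", "utt1", "reference", 60),
    ("ben", "utt1", "tts_a", 85),
    ("ben", "utt2", "reference", 92),
]

print(flag_unreliable_listeners(ratings))  # ['ben']
```

A flagged listener is not necessarily careless. The flag is better read as a prompt for feedback or recalibration, in line with the feedback loop described above.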

Practical Takeaway

Listener training plays a crucial role in the success of MUSHRA-based evaluations. By preparing listeners to identify subtle audio characteristics and apply consistent scoring criteria, AI teams can produce more reliable insights into model performance.

Combining structured listener training with standardized evaluation methods helps ensure that TTS systems are assessed accurately before deployment. Organizations conducting large-scale speech evaluations often rely on specialized frameworks and expert panels, such as those provided by FutureBeeAI, to maintain high evaluation quality and consistency.

Well-trained listeners ultimately help bridge the gap between technical metrics and real-world user perception, ensuring that TTS systems deliver natural and engaging speech experiences.
