Why should model selection involve native listeners?

In AI model development, particularly for Text-to-Speech (TTS) systems, involving native listeners in the evaluation process is essential. Automated metrics can measure certain technical signals, but they often miss the subtleties of language that determine whether speech feels natural to real users.

Native listeners contribute insights into pronunciation authenticity, cultural context, and realistic speech rhythm. These perceptual factors are critical for building speech systems that users trust and engage with.

Why Native Listeners Matter in TTS Evaluation

Aggregate measures such as Mean Opinion Score (MOS), whether averaged from listener ratings or estimated by automated predictors, provide useful signals about perceived quality, but they compress complex speech attributes into a single number. As a result, they may fail to capture issues that native speakers immediately notice.
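To make the compression concrete, here is a minimal Python sketch. The system names, attribute names, and ratings are invented for illustration and are not real evaluation data. It shows two hypothetical systems that receive the same overall score even though one of them has a clear prosody weakness.

```python
from statistics import mean

# Hypothetical 1-5 listener ratings per attribute (illustrative values only).
ratings = {
    "system_a": {
        "pronunciation": [5, 5, 4, 5],
        "prosody": [3, 2, 3, 3],
        "intonation": [4, 4, 4, 4],
    },
    "system_b": {
        "pronunciation": [4, 4, 4, 4],
        "prosody": [4, 4, 3, 4],
        "intonation": [4, 4, 4, 3],
    },
}


def overall_score(system_ratings):
    """Average every rating into one number, as a single MOS-style score would."""
    all_scores = [s for scores in system_ratings.values() for s in scores]
    return mean(all_scores)


def attribute_means(system_ratings):
    """Keep one average per attribute so weaknesses stay visible."""
    return {attr: mean(scores) for attr, scores in system_ratings.items()}


overall = {name: overall_score(r) for name, r in ratings.items()}
per_attribute = {name: attribute_means(r) for name, r in ratings.items()}

for name in ratings:
    print(name, round(overall[name], 2), per_attribute[name])
```

Both systems land on the same overall average, yet the per-attribute view suggests that the hypothetical `system_a` has a prosody problem that the single score hides.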

Native listeners can detect subtle differences in rhythm, intonation, and emphasis that influence how speech is perceived. For example, a TTS system may pronounce words correctly while still sounding unnatural due to misplaced stress patterns or awkward phrasing.

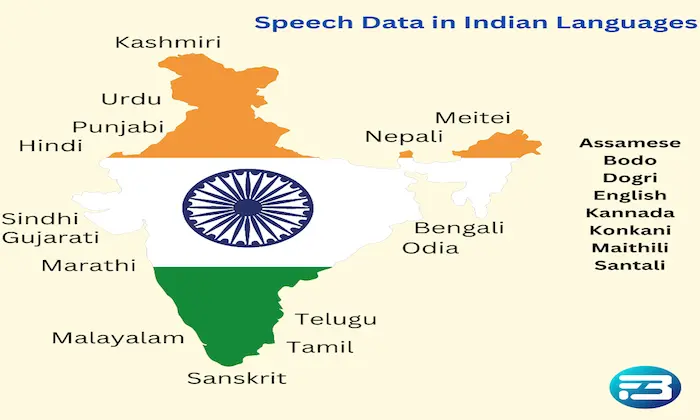

These perceptual cues are particularly important in languages where tone, rhythm, or regional variation affects meaning and emotional nuance.

The Risk of Ignoring Native Listener Feedback

Relying exclusively on automated metrics or internal reviewers can create a misleading impression of model readiness.

A system may appear to perform well in controlled testing while still producing speech that feels unnatural to native audiences. Issues such as awkward pauses, unnatural stress patterns, or culturally mismatched expressions may go unnoticed until the system is deployed.

For speech systems intended for global audiences, these problems can significantly affect user engagement and trust.
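One way to surface this risk before deployment is to compare internal reviewer ratings with native listener ratings for the same utterances. The sketch below is only an illustration: the utterance IDs, the ratings, and the `min_gap` threshold are all assumptions, not values from a real study.

```python
from statistics import mean

# Hypothetical 1-5 naturalness ratings per utterance (illustrative values only).
internal_ratings = {
    "utt_01": [5, 4, 5],
    "utt_02": [4, 4, 5],
    "utt_03": [4, 5, 4],
}
native_ratings = {
    "utt_01": [5, 4, 4],
    "utt_02": [2, 3, 2],
    "utt_03": [4, 4, 4],
}


def rating_gaps(internal, native, min_gap=1.0):
    """Return utterances that internal reviewers rate at least `min_gap` higher
    than native listeners, largest gap first."""
    gaps = []
    # Only compare utterances that both groups have rated.
    for utt_id in internal.keys() & native.keys():
        gap = mean(internal[utt_id]) - mean(native[utt_id])
        if gap >= min_gap:
            gaps.append((utt_id, round(gap, 2)))
    return sorted(gaps, key=lambda item: item[1], reverse=True)


overrated = rating_gaps(internal_ratings, native_ratings)
print(overrated)
```

Utterances that appear in `overrated` are candidates for closer review, since internal testing alone would have suggested they were ready.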

How Native Listeners Improve Model Evaluation

Detect pronunciation authenticity: Native speakers can identify subtle pronunciation errors that automated systems may overlook, especially when words sound technically correct but unnatural in conversational use.

Evaluate prosody and rhythm: Natural speech depends heavily on stress patterns, pacing, and intonation. Native listeners can determine whether these elements align with how the language is naturally spoken.

Assess cultural and contextual appropriateness: Language is shaped by cultural context. Native evaluators help ensure that speech tone, phrasing, and emotional delivery match real-world expectations.

Identify emotional mismatches: Human listeners can determine whether a voice conveys the intended emotional tone, which is particularly important for user-facing systems such as assistants or customer support interfaces.
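The four areas above could be captured in a structured rating form so that native listener feedback stays comparable across sessions. The following is a minimal sketch under stated assumptions: the dimension names, the 1-5 scale, the sample ratings, and the 3.5 threshold are hypothetical choices, not a standard.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical dimensions mirroring the four areas described above.
DIMENSIONS = ("pronunciation", "prosody", "cultural_fit", "emotional_match")


@dataclass
class ListenerRating:
    listener_id: str
    utterance_id: str
    scores: dict  # maps each dimension name to a 1-5 rating


def flag_weak_dimensions(ratings, threshold=3.5):
    """Return the dimensions whose mean rating falls below `threshold`."""
    flagged = {}
    for dim in DIMENSIONS:
        dim_mean = mean(r.scores[dim] for r in ratings)
        if dim_mean < threshold:
            flagged[dim] = round(dim_mean, 2)
    return flagged


# Illustrative ratings from three native listeners for one utterance.
sample = [
    ListenerRating("n1", "utt_07", {"pronunciation": 5, "prosody": 3, "cultural_fit": 4, "emotional_match": 4}),
    ListenerRating("n2", "utt_07", {"pronunciation": 4, "prosody": 3, "cultural_fit": 4, "emotional_match": 3}),
    ListenerRating("n3", "utt_07", {"pronunciation": 5, "prosody": 2, "cultural_fit": 5, "emotional_match": 4}),
]

weak = flag_weak_dimensions(sample)
print(weak)
```

Keeping the dimensions separate means a strong pronunciation score cannot mask a weak prosody score, which is the kind of issue native listeners tend to notice first.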

Practical Takeaway

Native listeners play a crucial role in ensuring that TTS systems sound natural and culturally appropriate. While automated metrics provide valuable technical insights, they cannot fully evaluate how speech is perceived by real users.

Incorporating native evaluators alongside structured evaluation methodologies helps teams identify perceptual issues early and refine speech systems for real-world deployment.

At FutureBeeAI, evaluation frameworks integrate native listener panels with structured methodologies to ensure speech systems meet both technical and perceptual quality standards. This approach helps organizations deploy TTS solutions that sound natural, trustworthy, and aligned with user expectations.

If you want to strengthen your model evaluation strategy, you can learn more or reach out through the FutureBeeAI contact page.

FAQs

Q. Why are native listeners important for evaluating TTS systems?

A. Native listeners can detect subtle pronunciation issues, unnatural rhythm, and cultural mismatches that automated metrics may overlook. Their feedback helps ensure speech sounds natural to real users.

Q. When should native listeners be involved in the evaluation process?

A. Native evaluators should be involved during pre-production testing, model comparison stages, and ongoing evaluation cycles to ensure speech quality remains aligned with real-world language use.
