How are listening tasks deployed to evaluators?

Deploying listening tasks in Text-to-Speech (TTS) evaluation is more than task assignment. It is a structured process that determines how accurately human perception is captured and translated into decisions about the model. A well-designed deployment process keeps TTS evaluations consistent, scalable, and actionable.

Why Listening Task Deployment Matters

Listening tasks are the point where human judgment meets model output. If tasks are poorly designed or misaligned with how the voice will actually be used, even the best evaluators will produce unreliable insights.

Accurate deployment ensures:

Evaluators focus on the right attributes

Feedback reflects real-world perception

Results directly inform decisions such as whether to ship, refine, or retrain

Step-by-Step Framework for Effective Deployment

Design the Evaluation Workflow: Define the full journey of a task. Specify what evaluators are assessing, under what context, and using which criteria. Align tasks with real use cases such as customer support or narration to ensure relevance.
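One way to make the workflow explicit is to write each task down as a structured spec before it reaches evaluators. The sketch below is a hypothetical Python example. `TaskSpec` and `validate_spec` are illustrative names, not part of any particular platform. The 1 to 5 labels follow the common absolute category rating wording (Bad to Excellent). The sketch refuses a task that lacks a use case, a target attribute, or a rubric entry for every score on the scale.

```python
from dataclasses import dataclass, field


# Hypothetical task spec; field names are illustrative, not a real platform schema.
@dataclass
class TaskSpec:
    task_id: str
    use_case: str   # real-world context, e.g. "customer_support" or "narration"
    attribute: str  # the one attribute being judged, e.g. "naturalness"
    method: str     # e.g. "MOS" for absolute ratings or "AB" for comparisons
    scale: tuple = (1, 2, 3, 4, 5)
    rubric: dict = field(default_factory=dict)  # score -> description shown to evaluators


def validate_spec(spec: TaskSpec) -> list:
    """Return a list of problems; an empty list means the spec can be deployed."""
    problems = []
    if not spec.use_case:
        problems.append("missing use case context")
    if not spec.attribute:
        problems.append("missing target attribute")
    if spec.method == "MOS":
        missing = [s for s in spec.scale if s not in spec.rubric]
        if missing:
            problems.append(f"rubric lacks descriptions for scores {missing}")
    return problems


# Example: a naturalness task for a customer-support voice.
spec = TaskSpec(
    task_id="t1",
    use_case="customer_support",
    attribute="naturalness",
    method="MOS",
    rubric={1: "Bad", 2: "Poor", 3: "Fair", 4: "Good", 5: "Excellent"},
)
print(validate_spec(spec))  # []
```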

Select and Train Evaluators: Choose evaluators based on language proficiency and domain expertise. Train them on rubrics and expected quality standards. Regular calibration ensures consistency across evaluators.
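Calibration can be checked quantitatively. Every evaluator rates a small set of anchor clips whose reference scores the team has already agreed on. The minimal sketch below assumes that setup. `calibration_report` and the `max_mae=0.75` pass threshold are hypothetical choices, not a standard. It reports each evaluator's mean absolute error against the references.

```python
from statistics import mean


def calibration_report(evaluator_scores, reference_scores, max_mae=0.75):
    """Compare each evaluator's ratings of anchor clips with agreed reference scores.

    evaluator_scores: {evaluator_id: {clip_id: score}}
    reference_scores: {clip_id: reference score}
    Returns {evaluator_id: (mean absolute error or None, passed)}.
    max_mae is a hypothetical pass threshold, not a standard value.
    """
    report = {}
    for evaluator, scores in evaluator_scores.items():
        shared = [clip for clip in scores if clip in reference_scores]
        if not shared:
            report[evaluator] = (None, False)  # never rated an anchor clip
            continue
        mae = mean(abs(scores[clip] - reference_scores[clip]) for clip in shared)
        report[evaluator] = (mae, mae <= max_mae)
    return report


reference = {"a1": 4, "a2": 2, "a3": 5}
ratings = {
    "ev1": {"a1": 4, "a2": 2, "a3": 4},  # off by 1 on one clip
    "ev2": {"a1": 2, "a2": 4, "a3": 3},  # off by 2 on every clip
}
for evaluator, (mae, passed) in calibration_report(ratings, reference).items():
    print(evaluator, round(mae, 2), "pass" if passed else "retrain")
```

Evaluators who fail would go back through rubric training before receiving live tasks.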

Align Task Type with Evaluation Stage: Early-stage evaluation may use quick scoring methods such as Mean Opinion Score (MOS), while later stages call for more detailed approaches, such as attribute-wise analysis or A/B comparisons, to capture subtle differences.
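This rough sketch illustrates the two ends of that spectrum. It computes a MOS with a normal-approximation 95% confidence interval. It also summarizes an A/B comparison with an exact two-sided binomial test against "no preference". The function names are illustrative, and the sketch assumes ties have already been dropped from the A/B counts. A wide MOS interval or a large p-value suggests that more ratings, or a more discriminating method, are needed before making a decision.

```python
import math
from statistics import mean, stdev


def mos_with_ci(ratings, z=1.96):
    """Mean opinion score with a normal-approximation confidence interval (95% for z=1.96)."""
    m = mean(ratings)
    if len(ratings) < 2:
        return m, (m, m)  # no spread can be estimated from one rating
    half_width = z * stdev(ratings) / math.sqrt(len(ratings))
    return m, (m - half_width, m + half_width)


def ab_preference(prefers_a, trials):
    """Share of trials preferring system A and an exact two-sided binomial p-value
    against the null hypothesis of no preference (p = 0.5).
    Ties are assumed to be excluded from `trials`."""
    if trials <= 0 or not 0 <= prefers_a <= trials:
        raise ValueError("need trials > 0 and 0 <= prefers_a <= trials")
    probs = [math.comb(trials, k) / 2 ** trials for k in range(trials + 1)]
    observed = probs[prefers_a]
    # Two-sided: sum every outcome that is at most as likely as the observed one.
    p_value = sum(p for p in probs if p <= observed * (1 + 1e-9))
    return prefers_a / trials, min(1.0, p_value)


mos, (low, high) = mos_with_ci([4, 5, 3, 4, 4, 5, 3, 4])
print(f"MOS {mos:.2f}, 95% CI [{low:.2f}, {high:.2f}]")
share, p = ab_preference(prefers_a=15, trials=20)
print(f"A preferred in {share:.0%} of trials, p = {p:.3f}")
```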

Design Clear and Focused Tasks: Each task should evaluate a specific attribute or decision point. Avoid overloading evaluators with too many criteria in a single task, as this reduces accuracy.
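The one-attribute-per-task rule is easy to enforce mechanically. In this hypothetical sketch, `ListeningTask` and `split_overloaded` are illustrative names, and `max_criteria` defaults to 1. A task that asks about too many attributes for one clip is split into several focused tasks on the same clip.

```python
from dataclasses import dataclass


@dataclass
class ListeningTask:
    clip_id: str
    criteria: list  # attributes the evaluator is asked to judge for this clip


def split_overloaded(task, max_criteria=1):
    """Split a task that asks about too many attributes into focused tasks.

    max_criteria=1 is a hypothetical policy: one attribute per task.
    """
    if max_criteria < 1:
        raise ValueError("max_criteria must be at least 1")
    if len(task.criteria) <= max_criteria:
        return [task]
    return [
        ListeningTask(task.clip_id, task.criteria[i:i + max_criteria])
        for i in range(0, len(task.criteria), max_criteria)
    ]


overloaded = ListeningTask("clip_07", ["naturalness", "pronunciation", "prosody"])
for t in split_overloaded(overloaded):
    print(t.clip_id, t.criteria)
```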

Implement Feedback and Review Loops: Continuously review evaluator outputs to detect inconsistencies or bias. Use this feedback to retrain evaluators and refine task design.
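A simple review loop compares each evaluator with the rest of the panel. This sketch is an assumption rather than a prescribed method, and `consensus_outliers` is an illustrative name. For every clip, it takes the median score of the other evaluators as the consensus. It then averages each evaluator's absolute gap from that consensus and flags anyone above a hypothetical `max_deviation` of 1.0 scale points. The median is used so that a single outlier does not drag the consensus toward itself.

```python
from statistics import mean, median


def consensus_outliers(ratings, max_deviation=1.0):
    """Flag evaluators who sit far from the rest of the panel.

    ratings: {clip_id: {evaluator_id: score}}
    For every score, the consensus is the median of the other evaluators on that clip.
    Returns {evaluator_id: mean absolute gap} for evaluators above max_deviation
    (a hypothetical threshold in scale points).
    """
    gaps = {}
    for clip_scores in ratings.values():
        for evaluator, score in clip_scores.items():
            others = [s for e, s in clip_scores.items() if e != evaluator]
            if others:
                gaps.setdefault(evaluator, []).append(abs(score - median(others)))
    flagged = {}
    for evaluator, evaluator_gaps in gaps.items():
        avg_gap = mean(evaluator_gaps)
        if avg_gap > max_deviation:
            flagged[evaluator] = avg_gap
    return flagged


panel = {
    "c1": {"ev1": 4, "ev2": 4, "ev3": 5, "ev4": 1},
    "c2": {"ev1": 3, "ev2": 3, "ev3": 3, "ev4": 5},
    "c3": {"ev1": 5, "ev2": 4, "ev3": 4, "ev4": 1},
}
print(consensus_outliers(panel))
```

In this toy panel only `ev4` is flagged. A flag is a prompt for review and possible retraining, not proof that the evaluator is wrong.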

Ensure Metadata and Traceability: Track who evaluated what, under which conditions, and how results were validated. This enables audits, improves transparency, and strengthens decision-making.
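Traceability mostly comes down to storing enough context with every score. The record below uses a hypothetical schema, and `EvaluationRecord`, its fields, and `append_to_log` are illustrative names. Each record is written as one JSON object per line. An audit can then filter results by evaluator, rubric version, listening condition, or reviewer.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class EvaluationRecord:
    """Hypothetical audit record: one row per score; field names are illustrative."""
    task_id: str
    clip_id: str
    evaluator_id: str
    attribute: str
    score: int
    rubric_version: str
    listening_condition: str            # e.g. "headphones, quiet room"
    validated_by: Optional[str] = None  # reviewer who approved the score, if any
    created_at: str = ""                # ISO 8601 UTC timestamp; filled in if empty

    def to_json_line(self) -> str:
        record = asdict(self)  # a copy, so the record itself is not modified
        if not record["created_at"]:
            record["created_at"] = datetime.now(timezone.utc).isoformat()
        return json.dumps(record, sort_keys=True)


def append_to_log(path, record):
    """Append one record to a JSON Lines audit log."""
    with open(path, "a", encoding="utf-8") as log:
        log.write(record.to_json_line() + "\n")


rec = EvaluationRecord(
    task_id="t1", clip_id="clip_07", evaluator_id="ev3", attribute="naturalness",
    score=4, rubric_version="v2", listening_condition="headphones, quiet room",
    validated_by="rev1",
)
print(rec.to_json_line())
```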

Common Mistakes to Avoid

Unclear Instructions: Leads to inconsistent interpretations

Overloaded Tasks: Reduces evaluator focus and accuracy

Lack of Training: Causes variability in scoring

No Monitoring System: Allows errors and drift to go unnoticed
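A practical way to catch drift is to re-insert anchor clips with known reference scores throughout a project and track each evaluator's signed error over time. The `DriftMonitor` class below is a hypothetical sketch. It keeps a rolling window of recent errors. Once the window is full, it flags a sustained lean toward leniency or harshness if the average error exceeds a chosen `tolerance`. The window size and tolerance are placeholder values, not recommendations.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Track one evaluator's signed error on recurring anchor clips.

    window and tolerance are hypothetical defaults, not recommended values.
    """

    def __init__(self, window=20, tolerance=0.5):
        self.errors = deque(maxlen=window)  # keeps only the most recent errors
        self.tolerance = tolerance

    def add(self, score, reference):
        # Positive error = more lenient than the reference, negative = harsher.
        self.errors.append(score - reference)

    def drifted(self):
        if len(self.errors) < self.errors.maxlen:
            return False  # not enough recent evidence yet
        return abs(mean(self.errors)) > self.tolerance


monitor = DriftMonitor(window=4, tolerance=0.5)
for score, reference in [(4, 4), (5, 4), (5, 4), (5, 4)]:
    monitor.add(score, reference)
    print(monitor.drifted())
```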

Practical Takeaway

Listening task deployment is not about volume. It is about precision.

A structured system combining clear workflows, trained evaluators, and continuous monitoring ensures that evaluations capture real user perception and drive correct decisions.

Conclusion

Effective deployment of listening tasks transforms evaluation from a basic activity into a strategic system. By aligning task design, evaluator training, and feedback loops, teams can ensure their TTS models are evaluated with depth, consistency, and real-world relevance.

For more insights on building strong evaluation pipelines or leveraging AI data collection, feel free to contact us.

What Else Do People Ask?

Related AI Articles

Browse Matching Datasets

Acquiring high-quality AI datasets has never been easier!!!

Get in touch with our AI data expert now!

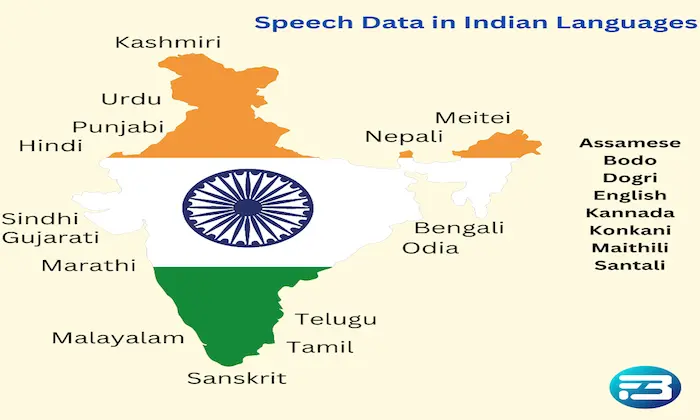

-data-collection/thumbnails/card-thumbnail/top-resources-to-gather-speech-data-for-speech-recognition-model-building.webp)